Of course! "Python livestream" is a broad topic, but it usually means one of two things:

- Building a Livestreaming Application: Creating a service where users can broadcast their camera/mic (like Twitch or YouTube Live) or watch streams. This involves server-side logic (handling video, WebSockets, authentication) and client-side UI.

- Consuming/Analyzing a Livestream: Writing a Python script to connect to an existing public livestream (e.g., from Twitch, YouTube, or RTSP) to capture video frames, analyze them (e.g., with AI/ML), and possibly save the footage.

Let's break down both approaches with practical code examples.

Approach 1: Building a Simple Livestreaming Application (WebRTC)

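For building a live streaming app from scratch, a common choice is WebRTC (Web Real-Time Communication). It's a peer-to-peer technology built into modern browsers that allows low-latency video/audio streaming directly between clients. Because each viewer needs its own peer connection to the broadcaster, plain peer-to-peer WebRTC suits small audiences; larger ones typically need a media server in between.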

A full WebRTC app involves:

- Frontend (Browser): Captures media and sends/receives streams using JavaScript.

- Backend (Python): Acts as a "signaling server" to help peers find each other and exchange connection information.

We'll use Flask for the backend and Socket.IO for real-time communication.
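
To make the signaling role concrete, here is a minimal in-memory sketch of what such a server does: it tracks who is in which room and forwards each message to the other members. The `SignalingRelay` class and the client IDs are hypothetical names for illustration only; no network or media is involved.

```python
from collections import defaultdict


class SignalingRelay:
    """In-memory stand-in for a signaling server: tracks rooms and relays messages."""

    def __init__(self):
        self.rooms = defaultdict(set)     # room ID -> set of client IDs
        self.inboxes = defaultdict(list)  # client ID -> messages delivered to it

    def join(self, client_id, room):
        self.rooms[room].add(client_id)

    def relay(self, sender_id, room, message):
        """Deliver a message to everyone in the room except the sender."""
        recipients = sorted(self.rooms[room] - {sender_id})
        for client_id in recipients:
            self.inboxes[client_id].append(message)
        return recipients


relay = SignalingRelay()
relay.join("broadcaster", "room-1")
relay.join("viewer", "room-1")

# The broadcaster's offer reaches the viewer; the viewer's answer flows back.
relay.relay("broadcaster", "room-1", {"type": "offer", "sdp": "<offer sdp>"})
relay.relay("viewer", "room-1", {"type": "answer", "sdp": "<answer sdp>"})
```

The server never touches audio or video; it only passes small JSON-like messages so the two browsers can set up a direct connection.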

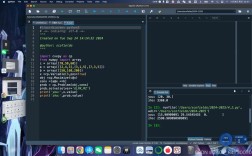

Step 1: Backend with Flask and Socket.IO

The backend's job is simple: manage rooms and relay messages between the broadcaster and the viewer.

app.py

    from flask import Flask, render_template
    from flask_socketio import SocketIO, emit, join_room, leave_room

    app = Flask(__name__)

    # Secret key for session management; replace with a real secret in production
    app.config['SECRET_KEY'] = 'your-secret-key'

    # async_mode can be set to 'threading' or 'eventlet'; by default it is auto-detected
    socketio = SocketIO(app, cors_allowed_origins="*")

    # Active rooms, keyed by room ID
    rooms = {}


    @socketio.on('connect')
    def handle_connect():
        """A new client connects."""
        print('Client connected')


    @socketio.on('disconnect')
    def handle_disconnect():
        """A client disconnects."""
        print('Client disconnected')
        # Here you could clean up rooms if a broadcaster leaves


    @socketio.on('join')
    def on_join(data):
        """A client joins a room (the frontend sends this for both roles)."""
        room = data['room']
        if room not in rooms:
            rooms[room] = {'viewers': 0}
        rooms[room]['viewers'] += 1

        # Add the current client to the Socket.IO room
        join_room(room)
        print(f'Viewer joined room {room}. Total viewers: {rooms[room]["viewers"]}')

        # Notify everyone in the room that a new client has joined
        emit('user_joined', {'count': rooms[room]['viewers']}, room=room)


    @socketio.on('leave')
    def on_leave(data):
        """A client leaves a room."""
        room = data['room']
        if room in rooms:
            rooms[room]['viewers'] -= 1
            leave_room(room)
            print(f'Viewer left room {room}. Total viewers: {rooms[room]["viewers"]}')

            # Notify others in the room
            emit('user_left', {'count': rooms[room]['viewers']}, room=room)

            # If no one is left, delete the room
            if rooms[room]['viewers'] == 0:
                del rooms[room]


    @socketio.on('start_stream')
    def on_start_stream(data):
        """The broadcaster signals they are ready."""
        room = data['room']
        print(f'Stream started in room {room}')
        # Notify all clients in this room that the stream is ready
        emit('stream_started', room=room)


    @socketio.on('offer')
    def on_offer(data):
        """Relay the WebRTC offer from the broadcaster to the viewers."""
        room = data['room']
        # Forward the offer to everyone else in the room
        emit('offer', data, room=room, include_self=False)


    @socketio.on('answer')
    def on_answer(data):
        """Relay the WebRTC answer from a viewer back to the broadcaster."""
        room = data['room']
        # Forward to everyone else in the room; the frontend ignores
        # answers unless the client is the broadcaster
        emit('answer', data, room=room, include_self=False)


    @socketio.on('ice_candidate')
    def on_ice_candidate(data):
        """Relay ICE candidates between peers."""
        room = data['room']
        # Exclude the sender so a peer never receives its own candidate
        emit('ice_candidate', data, room=room, include_self=False)


    @app.route('/')
    def index():
        return render_template('index.html')


    if __name__ == '__main__':
        # For production, install eventlet (pip install eventlet) and
        # turn off the debugger and reloader:
        # socketio.run(app, host='0.0.0.0', port=5000, use_reloader=False)

        # For development, threading is fine
        socketio.run(app, debug=True, host='0.0.0.0', port=5000)
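
The disconnect handler in app.py leaves room cleanup as an exercise. One way to approach it, sketched here under assumptions, is to remember which room each connection joined so that a disconnect can be treated like a leave. `RoomRegistry` is a hypothetical helper, not part of Flask-SocketIO; in a real handler the connection ID would come from `request.sid`.

```python
class RoomRegistry:
    """Tracks which room each connection is in so a disconnect can be cleaned up."""

    def __init__(self):
        self.room_of = {}  # connection ID -> room ID
        self.members = {}  # room ID -> set of connection IDs

    def join(self, sid, room):
        self.leave(sid)  # a connection is in at most one room in this sketch
        self.room_of[sid] = room
        self.members.setdefault(room, set()).add(sid)
        return len(self.members[room])

    def leave(self, sid):
        """Remove a connection; return (room, remaining), or None if it was in no room."""
        room = self.room_of.pop(sid, None)
        if room is None:
            return None
        self.members[room].discard(sid)
        remaining = len(self.members[room])
        if remaining == 0:
            del self.members[room]  # drop empty rooms
        return room, remaining


registry = RoomRegistry()
registry.join("sid-a", "room-1")
registry.join("sid-b", "room-1")
result = registry.leave("sid-a")  # e.g. called from the disconnect handler
```

Because the count is derived from the set of members, it cannot drift when a client closes the tab without sending a leave message first.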

Step 2: Frontend with HTML/JavaScript

The frontend will have two modes: "Broadcaster" and "Viewer".

templates/index.html

    <!DOCTYPE html>
    <html lang="en">
    <head>
        <meta charset="UTF-8">
        <title>Python WebRTC Stream</title>
        <style>
            body { font-family: sans-serif; text-align: center; }
            video { border: 1px solid black; margin: 10px; }
            #localVideo { width: 640px; height: 480px; }
            #remoteVideo { width: 640px; height: 480px; }
            input, button { padding: 10px; margin: 5px; font-size: 16px; }
        </style>
    </head>
    <body>
        <h1>Python WebRTC Livestream</h1>

        <div id="setup">
            <input type="text" id="roomId" placeholder="Enter Room ID">
            <button id="broadcasterBtn">Broadcaster</button>
            <button id="viewerBtn">Viewer</button>
        </div>

        <div id="broadcaster" style="display: none;">
            <h2>Broadcaster</h2>
            <!-- muted so the broadcaster does not hear their own microphone -->
            <video id="localVideo" autoplay playsinline muted></video>
            <button id="startStreamBtn">Start Stream</button>
        </div>

        <div id="viewer" style="display: none;">
            <h2>Viewer</h2>
            <video id="remoteVideo" autoplay playsinline></video>
            <button id="leaveBtn">Leave Stream</button>
        </div>

        <script src="https://cdn.socket.io/4.7.2/socket.io.min.js"></script>
        <script src="static/webrtc.js"></script>
    </body>
    </html>

Step 3: WebRTC Logic in JavaScript

This is the most complex part. It handles media streams, peer connections, and signaling.

static/webrtc.js

    const socket = io();

    let localStream = null;
    // A single RTCPeerConnection means this example supports one viewer at a time
    let peerConnection = null;
    const configuration = {}; // Can add STUN/TURN servers here for NAT traversal

    const setup = document.getElementById('setup');
    const broadcasterDiv = document.getElementById('broadcaster');
    const viewerDiv = document.getElementById('viewer');
    const roomIdInput = document.getElementById('roomId');
    const broadcasterBtn = document.getElementById('broadcasterBtn');
    const viewerBtn = document.getElementById('viewerBtn');
    const startStreamBtn = document.getElementById('startStreamBtn');
    const leaveBtn = document.getElementById('leaveBtn');
    const localVideo = document.getElementById('localVideo');
    const remoteVideo = document.getElementById('remoteVideo');

    let currentRoom = '';
    let isBroadcaster = false;

    // --- UI Event Handlers ---

    broadcasterBtn.onclick = () => {
        currentRoom = roomIdInput.value || 'default-room';
        isBroadcaster = true;
        setup.style.display = 'none';
        broadcasterDiv.style.display = 'block';
        socket.emit('join', { room: currentRoom });
    };

    viewerBtn.onclick = () => {
        currentRoom = roomIdInput.value || 'default-room';
        isBroadcaster = false;
        setup.style.display = 'none';
        viewerDiv.style.display = 'block';
        socket.emit('join', { room: currentRoom });
    };

    startStreamBtn.onclick = startBroadcasting;
    leaveBtn.onclick = leaveStream;

    // --- WebRTC Functions ---

    async function startBroadcasting() {
        try {
            localStream = await navigator.mediaDevices.getUserMedia({ video: true, audio: true });
            localVideo.srcObject = localStream;

            peerConnection = new RTCPeerConnection(configuration);
            localStream.getTracks().forEach(track => {
                peerConnection.addTrack(track, localStream);
            });

            peerConnection.onicecandidate = event => {
                if (event.candidate) {
                    socket.emit('ice_candidate', {
                        candidate: event.candidate,
                        room: currentRoom
                    });
                }
            };

            peerConnection.onconnectionstatechange = () => {
                console.log('Connection state:', peerConnection.connectionState);
            };

            const offer = await peerConnection.createOffer();
            await peerConnection.setLocalDescription(offer);

            // Tell the signaling server the stream is starting
            socket.emit('start_stream', { room: currentRoom });

            // Send the offer to viewers already in the room
            // (viewers who join later will not receive it)
            socket.emit('offer', {
                offer: peerConnection.localDescription,
                room: currentRoom
            });
        } catch (e) {
            console.error('Error starting the broadcast.', e);
        }
    }

    async function joinStream() {
        peerConnection = new RTCPeerConnection(configuration);

        peerConnection.ontrack = event => {
            remoteVideo.srcObject = event.streams[0];
        };

        peerConnection.onicecandidate = event => {
            if (event.candidate) {
                socket.emit('ice_candidate', {
                    candidate: event.candidate,
                    room: currentRoom
                });
            }
        };
    }

    async function leaveStream() {
        if (peerConnection) {
            peerConnection.close();
            peerConnection = null; // allow a fresh connection on the next join
        }
        if (localStream) {
            localStream.getTracks().forEach(track => track.stop());
            localStream = null;
        }
        socket.emit('leave', { room: currentRoom });

        // Reset UI
        setup.style.display = 'block';
        broadcasterDiv.style.display = 'none';
        viewerDiv.style.display = 'none';
        localVideo.srcObject = null;
        remoteVideo.srcObject = null;
        currentRoom = '';
    }

    // --- Socket.IO Event Handlers ---

    socket.on('stream_started', () => {
        console.log('Stream started signal received!');
        if (!isBroadcaster) {
            joinStream();
        }
    });

    socket.on('offer', async (data) => {
        if (isBroadcaster) return; // Only viewers should handle offers
        if (!peerConnection) {
            await joinStream();
        }
        await peerConnection.setRemoteDescription(new RTCSessionDescription(data.offer));
        const answer = await peerConnection.createAnswer();
        await peerConnection.setLocalDescription(answer);
        socket.emit('answer', {
            answer: peerConnection.localDescription,
            room: currentRoom
        });
    });

    socket.on('answer', async (data) => {
        // Only a broadcaster with an open connection should handle answers
        if (!isBroadcaster || !peerConnection) return;
        await peerConnection.setRemoteDescription(new RTCSessionDescription(data.answer));
    });

    socket.on('ice_candidate', async (data) => {
        if (!peerConnection) return;
        try {
            await peerConnection.addIceCandidate(new RTCIceCandidate(data.candidate));
        } catch (e) {
            console.error('Error adding received ice candidate', e);
        }
    });
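
The empty `configuration` object in webrtc.js works on a single machine, but peers on different networks usually need STUN (and sometimes TURN) servers. As a rough sketch, the Python backend could build that configuration and hand it to the page as JSON. `build_ice_config` is a hypothetical helper, and the TURN host and credentials are placeholders, not a real service.

```python
import json


def build_ice_config(stun_urls, turn=None):
    """Return a dict shaped like the browser's RTCConfiguration object."""
    ice_servers = [{"urls": url} for url in stun_urls]
    if turn is not None:
        ice_servers.append({
            "urls": turn["url"],
            "username": turn["username"],
            "credential": turn["credential"],
        })
    return {"iceServers": ice_servers}


config = build_ice_config(
    ["stun:stun.l.google.com:19302"],  # a widely used public STUN server
    turn={  # placeholder values; a real TURN server and credentials are needed
        "url": "turn:turn.example.com:3478",
        "username": "demo-user",
        "credential": "demo-pass",
    },
)
config_json = json.dumps(config)  # could be rendered into the page or served from a route
```

On the browser side, the parsed object would be passed to `new RTCPeerConnection(...)` in place of the empty `configuration`.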

How to Run This Example:

- Install dependencies:

    pip install flask flask-socketio
    # For better performance in production:
    # pip install eventlet

- Create the project structure:

    my-stream-app/
    ├── app.py
    └── templates/
        └── index.html

- Create the static folder and add webrtc.js:

    my-stream-app/
    ├── app.py
    ├── static/
    │   └── webrtc.js
    └── templates/
        └── index.html

- Run the server:

    python app.py

- Open your browser and go to http://localhost:5000. Open two browser tabs (or two different browsers). In one, enter a Room ID and click "Broadcaster". In the other, enter the same Room ID and click "Viewer". Then click "Start Stream" in the broadcaster tab; do this after the viewer has joined, because the offer is sent only once. The broadcaster should see their own video, and the viewer should see the broadcaster's video.

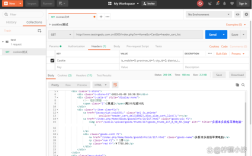

Approach 2: Consuming an Existing Livestream

This is often more straightforward and useful for data analysis. We'll use OpenCV to read a stream from a URL.

Scenario: Capture frames from a public RTSP or RTMP stream.

Many security cameras or streaming servers use RTSP (Real-Time Streaming Protocol). Some public streams use RTMP.
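
RTSP URLs often carry credentials, and a password containing characters such as "@" or ":" can make the URL ambiguous. Below is a small sketch of assembling such a URL with percent-encoded credentials. `build_rtsp_url` is a hypothetical helper, the address and credentials are made up, and whether a particular camera or backend accepts encoded credentials is worth verifying.

```python
from urllib.parse import quote


def build_rtsp_url(host, path, username=None, password=None, port=554):
    """Assemble an RTSP URL, percent-encoding credentials so '@' or ':' stay unambiguous."""
    auth = ""
    if username is not None:
        auth = quote(username, safe="")
        if password is not None:
            auth += ":" + quote(password, safe="")
        auth += "@"
    return f"rtsp://{auth}{host}:{port}/{path.lstrip('/')}"


url = build_rtsp_url("192.168.1.10", "stream1", username="admin", password="p@ss:word")
print(url)  # rtsp://admin:p%40ss%3Aword@192.168.1.10:554/stream1
```

Port 554 is the conventional RTSP default; many cameras use a different port or stream path, so check the device documentation.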

stream_consumer.py

    import sys
    import time

    import cv2

    # Replace with your actual stream URL
    # RTSP example: 'rtsp://username:password@ip_address:port/stream_path'
    # RTMP example: 'rtmp://server_address/app/stream_name'
    # A public sample video served over HTTP (not a true livestream; may be unavailable)
    STREAM_URL = 'http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/BigBuckBunny.mp4'

    print(f"Connecting to stream: {STREAM_URL}")

    # Create a VideoCapture object
    cap = cv2.VideoCapture(STREAM_URL)
    if not cap.isOpened():
        print("Error: Could not open video stream.")
        sys.exit(1)

    # Get stream properties (optional)
    width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
    height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
    fps = cap.get(cv2.CAP_PROP_FPS)
    print(f"Stream resolution: {width}x{height}, FPS: {fps}")

    # Some streams report an FPS of 0; fall back to an assumed 30
    # so the modulo below never divides by zero
    frames_per_save = int(fps) if fps >= 1 else 30

    frame_count = 0
    start_time = time.time()

    try:
        while True:
            # Read a frame from the stream
            ret, frame = cap.read()
            if not ret:
                print("Stream ended or error reading frame.")
                break

            # --- Do something with the frame ---

            # Example 1: Display the frame
            cv2.imshow('Livestream Feed', frame)

            # Example 2: Process the frame (e.g., convert to grayscale)
            gray_frame = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
            # cv2.imshow('Grayscale Feed', gray_frame)

            # Example 3: Save a frame roughly every second
            if frame_count % frames_per_save == 0:
                filename = f"frame_{frame_count}.jpg"
                cv2.imwrite(filename, frame)
                print(f"Saved frame to {filename}")

            # --- End of frame processing ---

            # Check for 'q' key press to quit
            if cv2.waitKey(1) & 0xFF == ord('q'):
                print("Quitting...")
                break

            frame_count += 1
    except KeyboardInterrupt:
        print("Stream stopped by user.")
    finally:
        # Release resources even if an error occurred
        cap.release()
        cv2.destroyAllWindows()

    elapsed = time.time() - start_time
    print(f"Stream consumer finished: {frame_count} frames in {elapsed:.1f}s.")
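
The script above gives up as soon as the stream cannot be opened. Real network streams drop from time to time, so a retry loop with exponential backoff is a common addition. The sketch below keeps the opener injectable so it can be demonstrated without a camera; `open_with_retry` and `flaky_open` are hypothetical names, and the delays are illustrative defaults.

```python
import time


def open_with_retry(open_fn, attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call open_fn() until it returns a non-None source, doubling the wait after each failure."""
    for attempt in range(attempts):
        source = open_fn()
        if source is not None:
            return source
        if attempt < attempts - 1:
            sleep(base_delay * (2 ** attempt))
    raise ConnectionError(f"could not open stream after {attempts} attempts")


# Demo with a fake opener that fails twice before succeeding
calls = {"n": 0}


def flaky_open():
    calls["n"] += 1
    return "capture" if calls["n"] >= 3 else None


delays = []  # record the waits instead of actually sleeping
source = open_with_retry(flaky_open, sleep=delays.append)
```

With OpenCV, `open_fn` could be a small function that creates `cv2.VideoCapture(STREAM_URL)` and returns it only when `isOpened()` is true, and `None` otherwise.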

How to Run This Example:

- Install OpenCV:

    pip install opencv-python

- Save the code as stream_consumer.py.

- Run the script:

    python stream_consumer.py

A window will pop up showing the video stream from the URL. Press q to stop the script.

Important Notes for Consuming Streams:

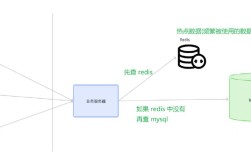

- Public vs. Private: This example uses a public video file. For a real RTSP/RTMP stream, you'll need the correct URL, which often requires authentication (username/password).

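- Latency: Network latency and buffering affect how "live" the stream is. OpenCV reads frames quickly, but if your per-frame processing is slower than the stream's frame rate, buffered frames accumulate and the feed falls further behind.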

- Protocol Support: OpenCV's VideoCapture can usually read RTSP and basic RTMP streams through its FFmpeg backend. For more complex protocols or better performance, you might need to call ffmpeg directly or use a dedicated library like python-librtmp.

- YouTube/Twitch: Capturing directly from YouTube/Twitch is often restricted by their terms of service. The "official" way is to use their APIs and their specific ingest/egest URLs if you are a partner. For analysis, you can sometimes find public RTMP/RTSP mirrors of popular streams.
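
On the latency point, one common pattern is to read frames on a background thread and keep only the newest one, so slow processing skips stale frames instead of falling behind. The sketch below takes any callable that behaves like `cap.read` (returning an `(ok, frame)` pair); `LatestFrameReader` and `fake_read` are hypothetical names, and the fake source stands in for a real capture.

```python
import threading


class LatestFrameReader:
    """Reads frames on a background thread and keeps only the newest one."""

    def __init__(self, read_fn):
        self._read_fn = read_fn  # callable returning (ok, frame), like cap.read
        self._lock = threading.Lock()
        self._latest = None
        self._running = True
        self._thread = threading.Thread(target=self._loop, daemon=True)
        self._thread.start()

    def _loop(self):
        while self._running:
            ok, frame = self._read_fn()
            if not ok:
                break  # source ended or failed
            with self._lock:
                self._latest = frame  # overwrite: older frames are dropped

    def latest(self):
        with self._lock:
            return self._latest

    def join(self, timeout=None):
        """Wait for the reader thread to finish (the source must end on its own)."""
        self._thread.join(timeout)

    def stop(self):
        self._running = False
        self._thread.join(timeout=1.0)


# Demo with a fake source that yields ten numbered "frames" and then ends
frames = iter(range(10))


def fake_read():
    try:
        return True, next(frames)
    except StopIteration:
        return False, None


reader = LatestFrameReader(fake_read)
reader.join(timeout=2.0)
newest = reader.latest()
reader.stop()
```

With a real capture, `LatestFrameReader(cap.read)` would let the main loop call `latest()` whenever it is ready for a frame; call `stop()` before `cap.release()`.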