Of course. This is a fundamental and crucial concept in concurrent programming. Let's break down what "thread-safe" means in Python, why it's important, and how to achieve it.

What is "Thread-Safe"?

In simple terms, a piece of code or an object is thread-safe if it can be used by multiple threads at the same time without causing any errors or corrupting its internal state.

Think of it like a shared whiteboard in an office:

- Not Thread-Safe: If two people try to write on the whiteboard at the exact same time, their words might get jumbled, or one might erase what the other is writing. The final result is unpredictable and incorrect.

- Thread-Safe: If you establish a rule like "only one person can write on the whiteboard at a time," you prevent the chaos. This rule ensures that even with multiple potential writers, the whiteboard's state remains consistent.

In programming, the "writers" are threads, and the "whiteboard" is a shared resource (like a variable, a list, or a file).

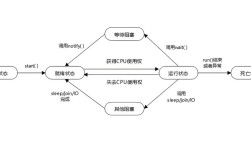

The Core Problem: The Global Interpreter Lock (GIL)

This is the most important concept to understand about threading in Python.

- What is the GIL? The GIL is a mutex (a lock) that protects access to Python objects, preventing multiple native threads from executing Python bytecode at the same time within a single process.

- What does it mean? In CPython (the standard Python implementation), even if you have a multi-core processor, only one thread can execute Python code at any given moment.

This often confuses newcomers. If there's only one thread executing, why do we need locks?

The GIL protects the interpreter's own internal state (such as reference counts), not the logic of your program.

While the GIL serializes the execution of Python bytecode, it does not make your code automatically thread-safe. CPython can make a running thread give up the GIL between any two bytecode instructions, so a thread can be paused in the middle of an operation that looks like a single step in your source code.
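To make the point about mid-operation pauses concrete, here is a small sketch that disassembles a one-line increment. The function name bump and the global n are made up for illustration, and the exact instruction names vary between CPython versions, so treat the printed listing as indicative rather than exact.

```python
import dis

n = 0  # hypothetical shared global, for illustration only

def bump():
    """Increment the module-level variable n by one."""
    global n
    n += 1

# Collect the names of the bytecode instructions CPython generated for bump().
opnames = [ins.opname for ins in dis.get_instructions(bump)]
print(opnames)

# The load of n and the store back to n are separate instructions,
# so another thread can be scheduled in between them.
load_at = opnames.index("LOAD_GLOBAL")
store_at = opnames.index("STORE_GLOBAL")
print(load_at < store_at)
```

The one-line statement becomes a load, an add, and a store, and each boundary between them is a place where the thread can lose the GIL.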

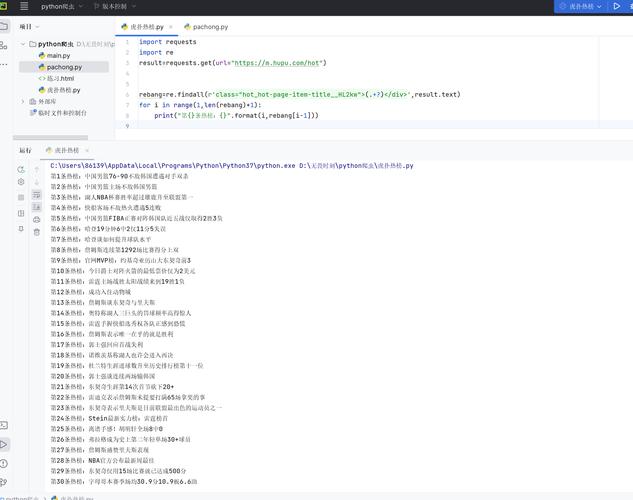

The Classic Race Condition Example

A race condition occurs when two or more threads try to access and modify a shared resource concurrently, and the final result depends on the unpredictable timing of the threads.

Let's look at a classic example: incrementing a counter.

import threading

# A shared resource that both threads will try to modify
counter = 0

def increment_counter():
    """Function to increment the global counter."""
    global counter
    for _ in range(1_000_000):
        # This is NOT an atomic operation. It's actually three steps:
        # 1. Load the current value of 'counter'.
        # 2. Add 1 to that value, producing a new integer.
        # 3. Store the new integer back into 'counter'.
        counter += 1

# Create two threads that both run the same function
thread1 = threading.Thread(target=increment_counter)
thread2 = threading.Thread(target=increment_counter)

# Start the threads
thread1.start()
thread2.start()

# Wait for both threads to finish
thread1.join()
thread2.join()

print(f"Final counter value: {counter}")

What will the output be?

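You might expect 2,000,000. However, because of the race condition, the result can come out lower, and it can differ from run to run. How often you actually see a wrong total depends on the interpreter version and on timing: recent CPython releases switch threads less often inside a tight loop like this one, so the script may well print 2,000,000 on your machine. That is luck, not a guarantee, and the code is still not thread-safe.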

Why? Imagine this sequence of events:

- Thread 1 reads counter (value is 0).

- The OS pauses Thread 1.

- Thread 2 reads counter (value is still 0).

- Thread 2 increments its local value to 1 and writes it back. counter is now 1.

- The OS resumes Thread 1. It still has the 0 it read earlier.

- Thread 1 increments its local value to 1 and writes it back. counter is now 1.

Result: Two increments were performed, but the counter only increased by one. This is the essence of a race condition. The more threads and more operations, the more likely this is to happen.
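Because a real race depends on timing, it can be hard to reproduce on demand. The sketch below replays the same interleaving by hand in a single thread, so the lost update shows up every time. The function and variable names are invented for this illustration. It is a simulation of the sequence above, not actual threaded code.

```python
def replay_lost_update():
    """Replay the interleaving step by step in a single thread."""
    counter = 0

    t1_value = counter        # Thread 1 reads counter (0)
    # ... Thread 1 is paused here ...
    t2_value = counter        # Thread 2 reads counter (still 0)
    counter = t2_value + 1    # Thread 2 writes 1
    # ... Thread 1 resumes with its stale value ...
    counter = t1_value + 1    # Thread 1 also writes 1

    return counter

print(replay_lost_update())  # prints 1, although two increments ran
```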

How to Achieve Thread-Safety in Python

To make your code thread-safe, you need to use synchronization primitives to control access to shared resources. The most common one is the Lock.

Solution: Using threading.Lock

A lock ensures that only one thread can execute a specific block of code (the "critical section") at a time. Other threads that try to acquire the lock will be blocked until it is released.
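Here is a minimal sketch of that behavior. It uses a non-blocking acquire so you can observe a held lock without the program hanging. A normal blocking acquire at the same point would simply wait.

```python
import threading

lock = threading.Lock()

got_first = lock.acquire()                  # lock was free, so this succeeds
got_second = lock.acquire(blocking=False)   # already held: returns False at once
lock.release()
got_third = lock.acquire(blocking=False)    # free again, so this succeeds
lock.release()

print(got_first, got_second, got_third)  # True False True
```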

Let's fix the previous example with a Lock.

import threading

counter = 0

# Create a Lock object
lock = threading.Lock()

def increment_counter_safe():
    """Function to increment the global counter in a thread-safe way."""
    global counter
    for _ in range(1_000_000):
        # Acquire the lock before accessing the shared resource
        with lock:
            # This block of code is the "critical section".
            # Only one thread can execute it at a time.
            counter += 1

# The rest of the code is the same
thread1 = threading.Thread(target=increment_counter_safe)
thread2 = threading.Thread(target=increment_counter_safe)

thread1.start()
thread2.start()

thread1.join()
thread2.join()

print(f"Final counter value: {counter}")

What will the output be now?

It will be 2,000,000, on every run. Each increment now happens entirely inside the lock, so no update can be lost.

How does it work?

- Thread 1 acquires the lock. No other thread can acquire it until Thread 1 releases it.

- Thread 1 safely executes counter += 1.

- Thread 1 releases the lock.

- Thread 2 can now acquire the lock and safely execute its increment.

- This pattern repeats for all 1,000,000 iterations of each thread.

The with lock: statement is a clean way to use a lock. It automatically acquires the lock before entering the block and guarantees it will be released when the block is exited, even if an error occurs.
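To see what the with statement saves you from writing, the sketch below puts it next to a hand-written acquire/try/finally version and raises an error inside both. The function names are made up for this example.

```python
import threading

lock = threading.Lock()

def update_with_statement():
    with lock:
        raise ValueError("simulated failure inside the critical section")

def update_explicit():
    lock.acquire()
    try:
        raise ValueError("simulated failure inside the critical section")
    finally:
        lock.release()  # runs even though the body raised

for func in (update_with_statement, update_explicit):
    try:
        func()
    except ValueError:
        pass
    # In both cases the lock has been released despite the exception.
    print(func.__name__, "left the lock held:", lock.locked())
```

Both versions leave the lock free after the error. The with form is shorter and makes it impossible to forget the release.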

Other Thread-Safety Tools

- threading.RLock (Reentrant Lock): A special kind of lock that a thread can acquire multiple times without causing a deadlock. This is useful if a thread needs to call a function that also uses the same lock it already holds.

- queue.Queue: This is the highly recommended way to pass data between threads. A Queue is designed to be thread-safe. One thread can put items into the queue, and another can get them out, without you needing to manage any locks yourself. It handles all the synchronization internally.

- threading.Semaphore: Controls access to a pool of resources. It allows a fixed number of threads to access a resource at the same time.

- threading.Event: A simple way for one thread to signal an event to other threads. Threads can wait for the event to be "set".

Built-in Thread-Safe Types

Some of the types Python ships with are safe to share between threads for basic operations.

- queue.Queue: As mentioned, this is the prime example. It does its own locking internally.

- collections.deque: Appending and popping from either end is atomic and thread-safe.

- list, dict, set: These are NOT thread-safe for compound operations.
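Here is a minimal producer/consumer sketch with queue.Queue. No explicit lock appears anywhere in it. The SENTINEL value used to tell the consumer to stop is just one common convention and is an assumption of this example.

```python
import queue
import threading

tasks = queue.Queue()
results = []          # only the consumer thread touches this list
SENTINEL = None       # hypothetical marker telling the consumer to stop

def producer():
    for number in range(5):
        tasks.put(number)
    tasks.put(SENTINEL)

def consumer():
    while True:
        item = tasks.get()   # blocks until an item is available
        if item is SENTINEL:
            break
        results.append(item * item)

threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(results)  # [0, 1, 4, 9, 16]
```

With a single consumer the items come out in the order they were put in, which is why the result is predictable here.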

Why aren't lists/dicts thread-safe for compound operations? Start with a case that is fine. Consider this code with two threads:

my_list = []
thread1 = threading.Thread(target=my_list.append, args=(1,))
thread2 = threading.Thread(target=my_list.append, args=(2,))
# ... start and join threads

In CPython this works: both appends succeed and the list ends up with two elements. A single append runs while the thread holds the GIL, so it cannot be interrupted halfway and the list's internal state is not corrupted. The only thing left open is the order, so the result may be [1, 2] or [2, 1]. The problems begin when one logical step is made of several such operations, because another thread can run between them.

The Rule: In CPython, if you are performing a single, atomic operation (like my_list.append(x) or my_dict[key] = value), it's generally safe. But if you are doing a read-modify-write sequence (like my_list.append(my_list[-1] + 1)), you must use a lock.
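Here is the read-modify-write case from the rule, protected by a lock. The names numbers, numbers_lock, and append_next are invented for this sketch.

```python
import threading

numbers = [0]
numbers_lock = threading.Lock()

def append_next(times):
    for _ in range(times):
        # Reading the last element and appending its successor must
        # happen as one unit, so both steps sit inside the lock.
        with numbers_lock:
            numbers.append(numbers[-1] + 1)

threads = [threading.Thread(target=append_next, args=(1000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(numbers == list(range(4001)))  # True
```

Without the lock, two threads could read the same last element and both append the same successor, leaving duplicates in the list.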

Summary & Best Practices

| Concept | Description | Python Tool |
|---|---|---|
| Thread-Safety | Code that works correctly with multiple concurrent threads. | threading.Lock, queue.Queue |
| GIL | A mutex that allows only one thread to execute Python bytecode at a time. | Built-in to CPython |
| Race Condition | A bug caused by the unpredictable timing of thread execution on a shared resource. | Fixed with synchronization |
| Lock | A mechanism that enforces mutual exclusion, ensuring only one thread can access a resource at a time. | threading.Lock |
| Atomic Operation | An operation that cannot be interrupted by another thread. | queue.Queue operations, single operations on built-in types (but be careful!) |

Best Practices:

- Prefer queue.Queue: For passing data between producer and consumer threads, use queue.Queue. It's the safest, most idiomatic, and least error-prone solution.

- Minimize Shared State: The best way to avoid race conditions is to avoid sharing mutable data in the first place. Design your program so that threads work on independent data as much as possible.

- Keep Critical Sections Small: The code inside a with lock: block should be as short as possible. Don't do I/O, long computations, or anything that isn't strictly necessary for protecting the shared resource.

- Be Aware of the GIL: Remember that the GIL keeps CPU-bound tasks (like heavy math or loops) from running in parallel. For CPU-bound work, consider the multiprocessing module, which creates separate processes, each with its own interpreter and its own GIL. For I/O-bound tasks (like network requests or file access), threading is very effective because a thread can wait for I/O while another thread runs.
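The second and third practices can be combined in one small sketch: each thread does its counting on a private variable and touches the shared total only once, inside a very short critical section. The names total, total_lock, and count_locally are assumptions made for this example.

```python
import threading

total = 0
total_lock = threading.Lock()

def count_locally(iterations):
    """Count in a thread-private variable, then publish the subtotal once."""
    global total
    subtotal = 0
    for _ in range(iterations):
        subtotal += 1          # private to this thread, no lock needed
    with total_lock:
        total += subtotal      # one short critical section per thread

threads = [threading.Thread(target=count_locally, args=(100_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(total)  # 400000
```

Compared with locking on every iteration, this version acquires the lock four times in total instead of 400,000 times, and the result is just as reliable.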