Of course! Let's dive into using Faster R-CNN with Python. This is a cornerstone model in modern object detection.

I'll guide you through everything from the basic concepts to a complete, runnable code example using the popular torchvision library.

What is Faster R-CNN?

Faster R-CNN is a state-of-the-art object detection model. Unlike simple image classification (which just says "what's in the image"), object detection answers two questions for each object:

- Where? It provides bounding box coordinates (x, y, width, height) around the object.

- What? It provides a class label for the object (e.g., "car", "dog", "person").

Faster R-CNN is famous for being fast and accurate because it introduced a key innovation: the Region Proposal Network (RPN).

The Two-Stage Process

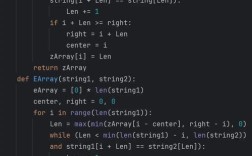

-

Stage 1: Region Proposal Network (RPN)

(图片来源网络,侵删)

(图片来源网络,侵删)- The RPN scans the feature map (extracted by a backbone like ResNet) and proposes "regions of interest" (RoIs). These are likely bounding boxes where an object might be.

- It does this very efficiently, without needing to run a slow algorithm like Selective Search.

-

Stage 2: Detection Head (RoI Pooling / RoI Align + Classifier)

- The proposed regions from the RPN are then "cropped" from the feature map.

- A small network (the "head") takes these cropped regions and classifies them into one of the object classes or as "background".

- It also refines the bounding box coordinates to be more precise.

Prerequisites

You'll need PyTorch and torchvision installed. If you don't have them, open your terminal or command prompt and run:

pip install torch torchvision

For a more complete setup, you might also want Pillow for image handling and matplotlib for visualization:

pip install Pillow matplotlib

A Complete Python Example (Using torchvision)

This example will show you how to:

- Load a pre-trained Faster R-CNN model.

- Load a sample image.

- Perform inference (detect objects).

- Visualize the results.

Let's break it down step-by-step.

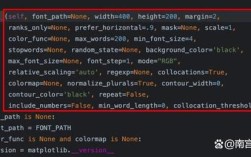

Step 1: Import Libraries

import torch

import torchvision

from torchvision.models.detection import fasterrcnn_resnet50_fpn

from torchvision.transforms import functional as F

from PIL import Image

import matplotlib.pyplot as plt

import matplotlib.patches as patches

# Check if a GPU is available

device = torch.device('cuda') if torch.cuda.is_available() else torch.device('cpu')

print(f"Using device: {device}")

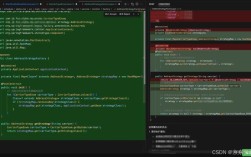

Step 2: Load a Pre-trained Model

torchvision makes it incredibly easy to load a pre-trained Faster R-CNN model. We'll use the one with a ResNet-50 backbone and Feature Pyramid Network (FPN), which is a great balance of speed and accuracy.

# Load the pre-trained Faster R-CNN model model = fasterrcnn_resnet50_fpn(pretrained=True) # Set the model to evaluation mode # This is important because layers like dropout behave differently during training and inference model.eval() # Move the model to the appropriate device (GPU if available) model.to(device)

Step 3: Load and Preprocess an Image

The model expects a tensor as input. We need to load an image and convert it to the required format.

# Load an image from a URL or a local file

# Let's use an image from a URL for this example

image_url = "http://images.cocodataset.org/val2025/000000039769.jpg"

image = Image.open(image_url).convert("RGB")

# Convert the PIL image to a PyTorch tensor

# The model expects a list of tensors, so we wrap it in a list

input_tensor = F.to_tensor(image)

input_tensor = input_tensor.to(device) # Move input tensor to the device

input_list = [input_tensor] # The model expects a list of images

Step 4: Perform Inference

Now, we pass the preprocessed image to the model. The output will be a list of dictionaries, where each dictionary corresponds to an input image.

# Run inference

with torch.no_grad(): # We don't need to calculate gradients for inference

predictions = model(input_list)

Step 5: Understand the Output

The predictions list can be a bit complex. Let's inspect it. For a single image, it will look like this:

# The output is a list of dictionaries. We only have one image, so we take the first element.

prediction = predictions[0]

# The dictionary contains:

# - 'boxes': The coordinates of the detected bounding boxes [x1, y1, x2, y2]

# - 'labels': The class index for each detected box

# - 'scores': The confidence score for each detection (0 to 1)

boxes = prediction['boxes'].cpu().numpy() # Move to CPU and convert to numpy for easier handling

labels = prediction['labels'].cpu().numpy()

scores = prediction['scores'].cpu().numpy()

print(f"Found {len(boxes)} boxes.")

print("Sample boxes:", boxes[:3])

print("Sample labels:", labels[:3])

print("Sample scores:", scores[:3])

Step 6: Filter Predictions and Visualize

The model detects many objects with varying confidence levels. We should filter out low-confidence predictions (e.g., scores < 0.9). Then, we can use matplotlib to draw the bounding boxes and labels on the image.

# Set a confidence threshold

confidence_threshold = 0.9

# Filter out boxes with low confidence

keep = scores >= confidence_threshold

boxes = boxes[keep]

labels = labels[keep]

scores = scores[keep]

# COCO class names

COCO_INSTANCE_CATEGORY_NAMES = [

'__background__', 'person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus',

'train', 'truck', 'boat', 'traffic light', 'fire hydrant', 'N/A', 'stop sign',

'parking meter', 'bench', 'bird', 'cat', 'dog', 'horse', 'sheep', 'cow',

'elephant', 'bear', 'zebra', 'giraffe', 'N/A', 'backpack', 'umbrella', 'N/A', 'N/A',

'handbag', 'tie', 'suitcase', 'frisbee', 'skis', 'snowboard', 'sports ball',

'kite', 'baseball bat', 'baseball glove', 'skateboard', 'surfboard', 'tennis racket',

'bottle', 'N/A', 'wine glass', 'cup', 'fork', 'knife', 'spoon', 'bowl',

'banana', 'apple', 'sandwich', 'orange', 'broccoli', 'carrot', 'hot dog', 'pizza',

'donut', 'cake', 'chair', 'couch', 'potted plant', 'bed', 'N/A', 'dining table',

'N/A', 'N/A', 'toilet', 'N/A', 'tv', 'laptop', 'mouse', 'remote', 'keyboard', 'cell phone',

'microwave', 'oven', 'toaster', 'sink', 'refrigerator', 'N/A', 'book',

'clock', 'vase', 'scissors', 'teddy bear', 'hair drier', 'toothbrush'

]

# Create a plot

fig, ax = plt.subplots(1, figsize=(12, 9))

ax.imshow(image)

# Draw each box and label

for box, label, score in zip(boxes, labels, scores):

x1, y1, x2, y2 = box

width = x2 - x1

height = y2 - y1

# Create a Rectangle patch

rect = patches.Rectangle((x1, y1), width, height, linewidth=2, edgecolor='r', facecolor='none')

ax.add_patch(rect)

# Add the label text

class_name = COCO_INSTANCE_CATEGORY_NAMES[label]

label_text = f"{class_name}: {score:.2f}"

ax.text(x1, y1 - 10, label_text, color='r', fontsize=12, bbox=dict(facecolor='yellow', alpha=0.5))

plt.axis('off')"Faster R-CNN Object Detection")

plt.show()

When you run this code, you should see an image of a cat and a dog on a couch with red bounding boxes drawn around them.

Key Parameters and Customization

Confidence Threshold

As seen in the example, confidence_threshold is crucial. Setting it too low will result in many false positives, while setting it too high might miss true objects. A value between 0.5 and 0.9 is a good starting point.

NMS (Non-Maximum Suppression)

The model can sometimes predict multiple, slightly overlapping boxes for the same object. Non-Maximum Suppression is a technique to solve this. It works by:

- Taking the box with the highest score.

- Removing all other boxes that have a high "Intersection over Union" (IoU) with the chosen box.

- Repeating the process for the remaining boxes.

The torchvision models apply NMS by default, but you can control its behavior with the nms_thresh parameter when calling the model. A lower nms_thresh (e.g., 0.4) is more aggressive at removing overlapping boxes.

# Example of passing NMS threshold

# predictions = model(input_list, post_process={"nms_thresh": 0.4})

Using Your Own Data (Fine-Tuning)

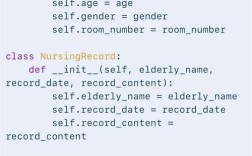

Detecting objects from the COCO dataset is great, but for real-world applications, you'll need to fine-tune the model on your own custom dataset. This is a more advanced topic, but the general steps are:

- Prepare Your Dataset: Organize your images and their corresponding bounding box annotations (usually in a format like Pascal VOC or COCO JSON). A common format is a list of dictionaries for each image, containing

file_name,width,height, andannotations(where each annotation hasbboxandcategory_id). - Create a Custom Dataset Class: Inherit from

torch.utils.data.Datasetand implement__len__and__getitem__. The__getitem__method should load an image and its annotations, convert them to the format the model expects (tensors for boxes and labels), and return them. - Modify the Model: You need to replace the classifier and regression heads of the pre-trained model with new ones that have the correct number of output classes for your dataset.

- Train the Model: Use a standard PyTorch training loop with an optimizer (like SGD or Adam) and a loss function (which is built into the Faster R-CNN model in

torchvision).

Popular Alternatives

While Faster R-CNN is excellent, other models have gained popularity for different use cases:

- YOLO (You Only Look Once): A one-stage detector that is extremely fast. It's ideal for real-time applications like video processing or self-driving cars, but can be slightly less accurate than two-stage detectors like Faster R-CNN.

- RetinaNet: Another one-stage detector that uses a technique called Focal Loss to overcome the class imbalance problem, making it very accurate and a strong competitor to Faster R-CNN.

- DETR (DEtection TRansformer): A modern "end-to-end" object detector that uses a Transformer architecture, removing the need for hand-designed components like the RPN and NMS. It's very powerful and represents the cutting edge of research.

This guide should give you a solid foundation for using Faster R-CNN in Python. Happy coding