Of course! Using Python with LMDB for Caffe is a common and powerful technique, primarily for two tasks:

- Reading LMDB Databases: Inspecting the contents of a dataset that has been converted into the LMDB format.

- Creating LMDB Databases: Pre-processing your image data and writing it into the LMDB format for faster training.

Let's break down both processes.

What is LMDB?

LMDB (Lightning Memory-Mapped Database) is a high-performance, memory-mapped key-value store. It's the default backend of Caffe's convert_imageset tool and the format most Caffe examples use because:

- Speed: Reads are extremely fast because the data file is memory-mapped, so entries are served from the OS page cache instead of going through explicit read calls and extra copies.

- Data Locality: Entries are kept in key order inside one data file, so the sequential reads Caffe performs during training touch largely contiguous pages.

- Simplicity: By default an LMDB database is a directory holding just a data file (data.mdb) and a lock file (lock.mdb), making it easy to manage and move around.
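To make the memory-mapping point concrete, here is a minimal sketch that uses only Python's standard mmap module. It is not LMDB itself: the file name, the fixed 16-byte record layout, and the helper names are made up for illustration. It only shows the OS mechanism LMDB builds on, where file contents are sliced like memory rather than fetched with explicit read calls.

```python
import mmap
import os
import tempfile

RECORD_SIZE = 16  # hypothetical fixed-size record, for illustration only


def write_records(path, count):
    """Write `count` zero-padded fixed-size records so there is something to map."""
    with open(path, "wb") as f:
        for i in range(count):
            f.write(f"record-{i:04d}".encode("ascii").ljust(RECORD_SIZE, b"\0"))


def read_record(path, index):
    """Map the file read-only and slice one record out of the mapping."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mapped:
            start = index * RECORD_SIZE
            return bytes(mapped[start:start + RECORD_SIZE]).rstrip(b"\0")


def demo():
    """Create a temporary record file and fetch record 42 through the mapping."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "records.bin")
        write_records(path, 100)
        return read_record(path, 42)


if __name__ == "__main__":
    print(demo())
```

Only the pages that are actually sliced get loaded from disk, which is why random access into a large mapped file stays cheap.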

Part 1: Reading an Existing LMDB Database

This is useful for debugging, checking your data, or understanding the structure of a dataset you've downloaded.

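You'll need the lmdb Python package plus Caffe's own Python bindings (pycaffe). The lmdb package installs from PyPI. pycaffe is normally built together with Caffe (make pycaffe) and added to PYTHONPATH rather than installed with pip. The scripts here also use NumPy and OpenCV.

    pip install lmdb numpy opencv-python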

Here is a Python script to iterate through an LMDB database, decode the datum protobuf, and display the image and label.

    import sys

    import cv2
    import lmdb
    import numpy as np
    from caffe.proto import caffe_pb2


    def datum_to_image(datum):
        """Return the Datum's image as an H x W x C array (None if decoding fails)."""
        if datum.encoded:
            # Encoded entries hold a compressed JPEG/PNG byte stream.
            buf = np.frombuffer(datum.data, dtype=np.uint8)
            return cv2.imdecode(buf, cv2.IMREAD_UNCHANGED)
        # Raw entries are laid out as channels x height x width.
        shape = (datum.channels, datum.height, datum.width)
        if datum.data:
            # The 'data' field holds one uint8 byte per pixel value.
            chw = np.frombuffer(datum.data, dtype=np.uint8).reshape(shape)
        else:
            # Floating-point pixels live in the repeated 'float_data' field.
            chw = np.array(datum.float_data, dtype=np.float32).reshape(shape)
        return chw.transpose(1, 2, 0)  # C x H x W -> H x W x C for OpenCV


    def read_lmdb(lmdb_path, limit=5):
        """
        Reads and displays contents from an LMDB database.

        Args:
            lmdb_path (str): Path to the LMDB directory.
            limit (int): Number of entries to display.
        """
        # Open the LMDB environment read-only
        env = lmdb.open(lmdb_path, readonly=True, lock=False)
        with env.begin() as txn:
            # Iterating a cursor yields (key, value) pairs in key order
            for count, (key, value) in enumerate(txn.cursor()):
                if count >= limit:
                    break
                print(f"--- Entry {count + 1} ---")
                print(f"Key (Image ID): {key.decode('utf-8', errors='replace')}")

                # The value is a serialized Datum protobuf message
                datum = caffe_pb2.Datum()
                datum.ParseFromString(value)
                label = datum.label
                print(f"Label: {label}")

                img_array = datum_to_image(datum)
                if img_array is None:
                    print("Could not decode image data. Skipping.")
                    continue
                print(f"Image Shape: {img_array.shape}")
                print(f"Image Data Type: {img_array.dtype}")

                # Display the image using OpenCV.
                # Note: cv2.imshow expects float images scaled to [0, 1].
                cv2.imshow(f"Image {count + 1} (Label: {label})", img_array)
                cv2.waitKey(0)
                cv2.destroyAllWindows()
        env.close()


    if __name__ == '__main__':
        if len(sys.argv) < 2:
            print("Usage: python read_lmdb.py <path_to_lmdb_dir>")
            sys.exit(1)
        read_lmdb(sys.argv[1])

How to run:

python read_lmdb.py /path/to/your/lmdb_folder
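The raw (non-encoded) branch is the part that most often goes wrong, so here is a small NumPy-only sketch of it. It assumes the Caffe convention of uint8 pixels stored in channels x height x width order. The helper name chw_bytes_to_hwc and the tiny 2x3 test image are made up for illustration, and neither Caffe nor LMDB is needed to run it.

```python
import numpy as np


def chw_bytes_to_hwc(raw, channels, height, width):
    """Rebuild an H x W x C image from a C x H x W uint8 byte string."""
    expected = channels * height * width
    if len(raw) != expected:
        # A size mismatch usually means the writer used another dtype or shape.
        raise ValueError(f"expected {expected} bytes, got {len(raw)}")
    chw = np.frombuffer(raw, dtype=np.uint8).reshape(channels, height, width)
    return chw.transpose(1, 2, 0)


def demo():
    """Round-trip a hypothetical 2x3 BGR image through the C x H x W byte layout."""
    hwc = np.zeros((2, 3, 3), dtype=np.uint8)
    hwc[...] = (10, 20, 30)  # every pixel is B=10, G=20, R=30
    raw = hwc.transpose(2, 0, 1).tobytes()  # what a writer would store
    return chw_bytes_to_hwc(raw, channels=3, height=2, width=3), hwc
```

The explicit byte-count check is the useful part: if a writer stored float32 values in the byte field, the buffer would be four times too long and the mismatch would surface immediately instead of producing a scrambled image.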

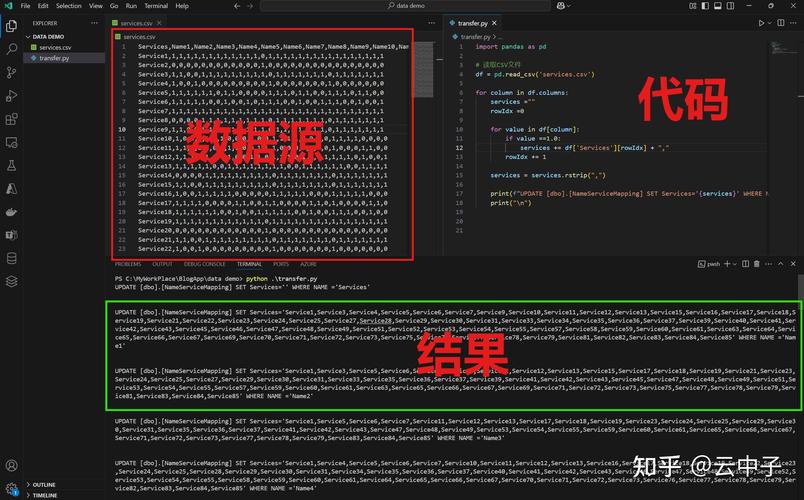

Part 2: Creating an LMDB Database from Images

This is the more common use case. You have a folder of images and labels, and you want to convert them into a single LMDB database for Caffe.

This process typically involves:

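- Pre-processing: Resizing images, making sure the channels are in BGR order (OpenCV's default, and what Caffe's reference models expect), and optionally normalizing pixel values (e.g., scaling to [0,1] or subtracting the ImageNet mean). Normalization is often left to the data layer's transform_param so the LMDB can keep compact uint8 pixels.

- Writing to LMDB: Iterating through your dataset, pre-processing each image, and storing it as a Datum message (caffe.proto.caffe_pb2.Datum) in the LMDB.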
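The pre-processing step can be sketched with NumPy alone. This is an illustration under stated assumptions, not Caffe's own code: the function names are hypothetical, the input is assumed to be an RGB image (for example one loaded with PIL), and the per-channel mean values are placeholders to replace with the values for your own dataset.

```python
import numpy as np

# Illustrative per-channel BGR means; substitute the values for your own dataset.
MEAN_BGR = np.array([104.0, 117.0, 123.0], dtype=np.float32)


def rgb_to_caffe_layout(rgb):
    """Convert an H x W x 3 RGB uint8 image to C x H x W BGR uint8."""
    bgr = rgb[:, :, ::-1]  # reverse the channel axis: RGB -> BGR
    return np.ascontiguousarray(bgr.transpose(2, 0, 1))


def subtract_mean(chw_bgr):
    """Return a float32 copy with the per-channel mean removed."""
    return chw_bgr.astype(np.float32) - MEAN_BGR[:, None, None]


def demo():
    """Build a 4x5 pure-red test image and run both steps on it."""
    rgb = np.zeros((4, 5, 3), dtype=np.uint8)
    rgb[..., 0] = 200  # red channel
    chw = rgb_to_caffe_layout(rgb)
    return chw, subtract_mean(chw)
```

After the conversion, red ends up in channel index 2 and the uint8 array serializes to exactly channels x height x width bytes. The mean-subtracted result is float32 and can be negative, so it no longer fits a uint8 byte field.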

Here's a script that does this. It assumes you have a directory of images and a corresponding text file with labels.

File Structure:

    /path/to/my_dataset/
    ├── images/
    │   ├── 1.jpg
    │   ├── 2.jpg
    │   └── ...
    └── labels.txt

labels.txt would contain the label for each image, in order:

    0
    1
    0
    ...

Python Script to Create LMDB:

    import os
    import shutil
    import sys

    import cv2
    import lmdb
    from caffe.proto import caffe_pb2


    def make_lmdb(image_folder, label_file, output_lmdb_path, image_width=256, image_height=256):
        """
        Creates an LMDB database from a folder of images and a label file.

        Args:
            image_folder (str): Path to the folder containing images.
            label_file (str): Path to the text file containing labels.
            output_lmdb_path (str): Path where the LMDB database will be created.
            image_width (int): Target width for resizing images.
            image_height (int): Target height for resizing images.
        """
        # Check if the LMDB directory already exists and remove it
        if os.path.exists(output_lmdb_path):
            print(f"Removing existing LMDB directory at {output_lmdb_path}")
            shutil.rmtree(output_lmdb_path)

        # Read all labels into a list, one integer per non-empty line
        with open(label_file, 'r') as f:
            labels = [int(line) for line in f if line.strip()]

        # Get a sorted list of image files. sorted() is lexicographic
        # ('10.jpg' comes before '2.jpg'), so labels.txt must use the same order.
        image_files = sorted(f for f in os.listdir(image_folder)
                             if f.lower().endswith(('.png', '.jpg', '.jpeg')))
        if len(image_files) != len(labels):
            raise ValueError("Number of images does not match the number of labels.")

        # map_size sets the maximum size of the database in bytes.
        # 1e12 is 1 TB, adjust as needed.
        env = lmdb.open(output_lmdb_path, map_size=int(1e12))

        print(f"Writing {len(image_files)} entries to LMDB...")
        with env.begin(write=True) as txn:
            for i, img_filename in enumerate(image_files):
                # --- 1. Load and Pre-process Image ---
                img_path = os.path.join(image_folder, img_filename)
                img = cv2.imread(img_path)  # 3-channel BGR, uint8
                if img is None:
                    print(f"Warning: Could not read image {img_path}. Skipping.")
                    continue
                img = cv2.resize(img, (image_width, image_height), interpolation=cv2.INTER_LINEAR)

                # Keep OpenCV's BGR order and the uint8 range: Caffe reads
                # datum.data as one byte per value. Scale or subtract the mean
                # later through the data layer's transform_param.
                chw = img.transpose(2, 0, 1)  # H x W x C -> C x H x W

                # --- 2. Create Caffe Datum ---
                datum = caffe_pb2.Datum()
                datum.channels, datum.height, datum.width = chw.shape
                datum.data = chw.tobytes()
                datum.label = labels[i]

                # --- 3. Write to LMDB ---
                # A zero-padded index keeps entries in write order, and the
                # filename suffix helps with debugging.
                key = f"{i:08d}_{img_filename}".encode('utf-8')
                txn.put(key, datum.SerializeToString())

                if (i + 1) % 100 == 0:
                    print(f"Processed {i + 1}/{len(image_files)} images...")
        env.close()
        print(f"Successfully created LMDB at {output_lmdb_path}")


    if __name__ == '__main__':
        if len(sys.argv) < 4:
            print("Usage: python create_lmdb.py <image_folder> <label_file> <output_lmdb_path>")
            sys.exit(1)
        make_lmdb(sys.argv[1], sys.argv[2], sys.argv[3])

How to run:

python create_lmdb.py /path/to/my_dataset/images /path/to/my_dataset/labels.txt /path/to/output_lmdb
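One pitfall in this workflow is ordering. The script pairs sorted filenames with label lines by position, but plain sorted() is lexicographic, so 10.jpg lands before 2.jpg. LMDB also iterates keys in byte-wise sorted order, which is why a zero-padded index prefix (the scheme convert_imageset uses for its keys) preserves write order. The sketch below shows one way to handle both with the standard library only. The helper names natural_key and build_entries are hypothetical.

```python
import re


def natural_key(name):
    """Sort key that compares digit runs numerically, so '2.jpg' < '10.jpg'."""
    parts = re.split(r"(\d+)", name)
    # With a capturing group, odd indices hold the digit runs.
    return [int(part) if i % 2 else part.lower() for i, part in enumerate(parts)]


def build_entries(filenames, labels):
    """Pair files with labels in numeric order and build zero-padded keys."""
    ordered = sorted(filenames, key=natural_key)
    if len(ordered) != len(labels):
        raise ValueError("Number of images does not match the number of labels.")
    return [
        (f"{index:08d}_{name}".encode("utf-8"), name, label)
        for index, (name, label) in enumerate(zip(ordered, labels))
    ]


if __name__ == "__main__":
    for key, name, label in build_entries(["10.jpg", "2.jpg", "1.jpg"], [0, 1, 0]):
        print(key, name, label)
```

Because the keys are zero-padded to a fixed width, sorting them as bytes gives the same order as the numeric index, so a cursor walks the entries in the order they were written.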

Important Considerations

- Datum Shape: Each Datum records its own channels, height, and width, so a reader can reshape the raw byte array from those fields instead of hard-coding dimensions. The pixel values themselves are laid out as channels x height x width.

- Data Type: Be consistent with your data types. The datum.data field holds uint8 pixel bytes, datum.float_data holds floating-point pixels, and datum.label holds the integer label. Read each field back with the type it was written with.

- Mean Image: For many Caffe models, you need to subtract a mean image (e.g., the ImageNet mean). In Caffe this is usually done at training time through the data layer's transform_param (mean_file or mean_value) rather than baked into the LMDB. If you subtract it yourself during pre-processing, do so right after loading the image with cv2.imread and before any scaling, and store the result in float_data because the values no longer fit in uint8. The mean is typically a numpy array of shape (channels, height, width).

- Caffe's convert_imageset Tool: Caffe provides a command-line tool, caffe/build/tools/convert_imageset, which is highly optimized for creating LMDBs. For large datasets, it's often faster than a pure Python script. If you have Caffe compiled, you should prefer using it. The usage is:

    # Example for ImageNet-style data.
    # train.txt lists one image per line: a path relative to the root folder, then its label.
    /path/to/caffe/build/tools/convert_imageset \
        --shuffle \
        --backend=lmdb \
        /path/to/root/of/images/ \
        /path/to/train.txt \
        /path/to/output_lmdb
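convert_imageset reads a list file in which each line holds an image path relative to the root folder, a space, and an integer label. If your labels live in a separate file, as in the layout used in Part 2, a short script can produce that list. This is a minimal sketch using the standard library. The function name write_list_file and the output name train.txt are illustrative choices, not part of Caffe.

```python
import os
import tempfile


def write_list_file(filenames, labels, list_path):
    """Write one 'relative/path label' line per image."""
    if len(filenames) != len(labels):
        raise ValueError("Number of images does not match the number of labels.")
    with open(list_path, "w", encoding="utf-8") as f:
        for name, label in zip(filenames, labels):
            f.write(f"{name} {int(label)}\n")
    return list_path


def demo():
    """Write a three-line list file to a temporary folder and read it back."""
    with tempfile.TemporaryDirectory() as tmp:
        list_path = os.path.join(tmp, "train.txt")
        write_list_file(["1.jpg", "2.jpg", "3.jpg"], [0, 1, 0], list_path)
        with open(list_path, encoding="utf-8") as f:
            return f.read().splitlines()


if __name__ == "__main__":
    print("\n".join(demo()))
```

Pass the images folder as the root argument and the generated file as the list argument when invoking the tool.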