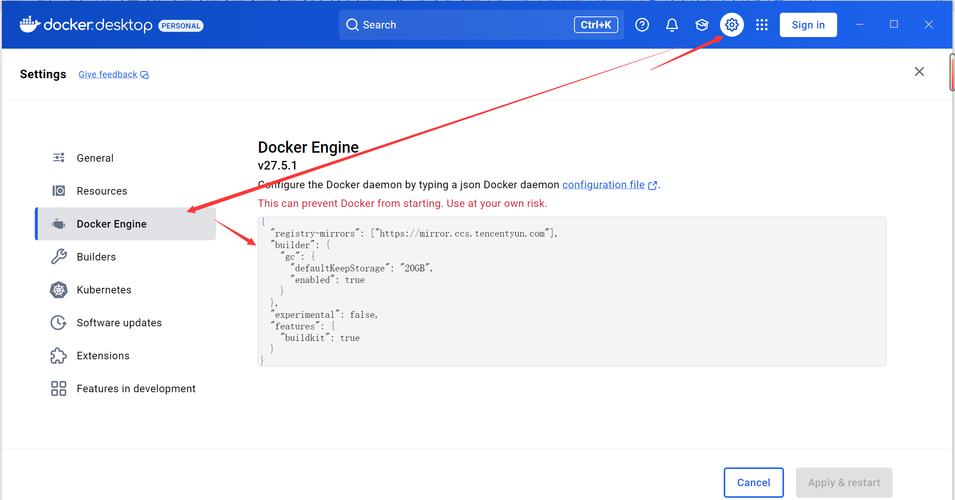

Of course! Setting up a Python environment with Docker is a standard way to build reproducible, isolated, and portable applications. Here’s a guide covering everything from basic concepts to a complete working example.

Why Use Docker for Python Development?

- Reproducibility ("It works on my machine!"): Docker containers package your application, its dependencies (Python, libraries, system packages), and the runtime environment into a single, consistent unit. This ensures it runs the same way everywhere, from your laptop to a production server.

- Isolation: Your project's dependencies don't conflict with other projects or your system's Python installation. Each project gets its own clean, self-contained environment.

- Portability: You can easily share your application with others by sharing the Dockerfile and requirements.txt. They can build the exact same environment with a single command.

- Scalability: Container images are the standard deployment unit for orchestration platforms like Kubernetes, which makes it easy to scale your application horizontally.

The Core Components

To create a Python environment in Docker, you'll typically use three files:

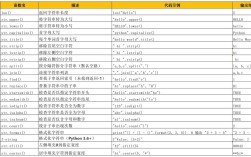

- Dockerfile: A text file with instructions on how to build a Docker image. It's the blueprint for your environment.

- requirements.txt: A simple text file listing your Python package dependencies (e.g., Flask==2.0.1, pandas==1.3.0).

- .dockerignore: A text file that specifies files and directories to be ignored when building the image (similar to .gitignore).

Step-by-Step Guide: A Simple Python App

Let's create a basic "Hello World" Flask application to demonstrate the process.

Step 1: Project Structure

First, organize your project directory like this:

    my-python-app/
    ├── app/
    │   └── main.py
    ├── Dockerfile
    ├── requirements.txt
    └── .dockerignore

Step 2: Create the Application Code

app/main.py

This is a simple web server using Flask.

    from flask import Flask

    app = Flask(__name__)

    @app.route('/')
    def hello():
        return "Hello from a Dockerized Python App!"

    if __name__ == '__main__':
        # Run the app on host 0.0.0.0 and port 5000
        app.run(host='0.0.0.0', port=5000)

Step 3: Define Dependencies

requirements.txt

List the Python libraries your app needs.

    Flask==2.0.1
    # Flask 2.0.x fails to import with Werkzeug 3.x, so pin a 2.0.x release
    Werkzeug==2.0.3
    # Add other dependencies here, e.g.:
    # requests
    # numpy

Step 4: Create the Dockerfile

This is the most important file. We'll create an optimized one using a multi-stage build, which is a best practice.

Dockerfile

    # Stage 1: Builder
    # Use an official Python runtime as a parent image
    FROM python:3.9-slim AS builder

    # Set the working directory in the container
    WORKDIR /app

    # Copy the requirements file into the container
    COPY requirements.txt .

    # Install any needed packages specified in requirements.txt
    # --no-cache-dir reduces image size
    RUN pip install --no-cache-dir --user -r requirements.txt

    # Stage 2: Final Image
    # Use a clean, minimal base image
    FROM python:3.9-slim

    # Set the working directory
    WORKDIR /app

    # Copy installed packages from the builder stage
    # With `pip install --user` run as root, packages are installed under /root/.local
    COPY --from=builder /root/.local /root/.local

    # Copy the application code into the container
    COPY app/ .

    # Add the local bin directory to the PATH
    # This allows you to run the installed packages (like flask) directly
    ENV PATH=/root/.local/bin:$PATH

    # Command to run the application
    # main.py binds to 0.0.0.0, which makes the server accessible from outside the container
    CMD ["python", "main.py"]

Explanation of the Dockerfile:

- FROM python:3.9-slim: We use an official Python image. slim is a smaller variant of the full image, which is good for production.

- WORKDIR /app: Sets the default directory for all subsequent commands like COPY and RUN.

- COPY requirements.txt .: Copies only the requirements file first. This is a key optimization: Docker caches layers. If requirements.txt doesn't change, Docker will reuse the cached layer where pip install was run, speeding up builds.

- RUN pip install ...: Installs the Python dependencies.

- --from=builder: This is the magic of the multi-stage build. It copies only the installed packages from the first stage (builder), not the build cache or temporary files, resulting in a much smaller final image.

- ENV PATH=...: Makes the installed packages (like flask) available as commands.

- CMD ["python", "main.py"]: The default command to run when a container starts from this image.
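The layer-caching point above can be illustrated with a toy model in Python. This is only a sketch under simplifying assumptions, not Docker's actual cache algorithm: the function layer_keys and the STEPS list are hypothetical, the two build stages are flattened into one sequence, and each layer's key is simply a hash of the previous key, the instruction text, and the contents of any copied files.

```python
import hashlib


def layer_keys(steps, file_contents):
    """Return one cache key per build step (toy model, not Docker's real algorithm).

    Each key hashes the previous key, the instruction text, and, for COPY
    steps, the contents of the copied files.
    """
    keys = []
    parent = ""
    for instruction, copied_files in steps:
        digest = hashlib.sha256()
        digest.update(parent.encode())
        digest.update(instruction.encode())
        for name in copied_files:
            digest.update(file_contents[name].encode())
        parent = digest.hexdigest()
        keys.append(parent)
    return keys


# Hypothetical step list loosely mirroring the Dockerfile above.
STEPS = [
    ("FROM python:3.9-slim", []),
    ("COPY requirements.txt .", ["requirements.txt"]),
    ("RUN pip install -r requirements.txt", []),
    ("COPY app/ .", ["main.py"]),
]

before = layer_keys(STEPS, {"requirements.txt": "Flask==2.0.1\n", "main.py": "v1"})
after = layer_keys(STEPS, {"requirements.txt": "Flask==2.0.1\n", "main.py": "v2"})

# Count how many layers keep the same key after editing only main.py.
reused = sum(1 for old, new in zip(before, after) if old == new)
print(f"{reused} of {len(STEPS)} layers reused")
```

In this model, editing only main.py leaves the keys of the first three steps unchanged, so the pip install step would be served from cache. If the application code were copied before the requirements file, every later key, including the one for pip install, would change on each code edit.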

Step 5: Create the .dockerignore File

This prevents unnecessary files from being copied into the image, keeping the image small and builds fast.

.dockerignore

    # Git files
    .git
    .gitignore

    # Python cache and virtual environments
    __pycache__/
    *.pyc
    *.pyo
    *.pyd
    .Python
    venv/
    .env
    .venv

    # IDE files
    .vscode/
    .idea/
    *.swp
    *.swo

Step 6: Build and Run the Docker Image

- Open your terminal in the my-python-app directory.

- Build the image: The -t flag tags the image with a name (e.g., my-python-app).

      docker build -t my-python-app .

  You will see Docker execute each step in the Dockerfile.

- Run the container: The -p flag maps a port on your host machine (the first 5000) to a port in the container (the second 5000).

      docker run -p 5000:5000 my-python-app

- Test it! Open your web browser and navigate to http://localhost:5000. You should see "Hello from a Dockerized Python App!".
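If you prefer to check the running container from a script instead of a browser, a small smoke test along these lines may help. It is a minimal sketch using only the standard library; the helper names fetch_body and check_app are hypothetical, and the default URL assumes the port mapping used in the docker run command.

```python
import urllib.request


def fetch_body(url, timeout=5.0):
    """Return the response body as text, raising if the status is not 200."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        if response.status != 200:
            raise RuntimeError(f"unexpected status {response.status}")
        return response.read().decode("utf-8")


def check_app(url="http://localhost:5000/"):
    """Return True if the app answers with the greeting from main.py."""
    return "Hello from a Dockerized Python App!" in fetch_body(url)
```

With the container running, calling check_app() from a Python shell should return True. A connection error usually means the container is not up or the port is not published.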

Advanced Topics & Best Practices

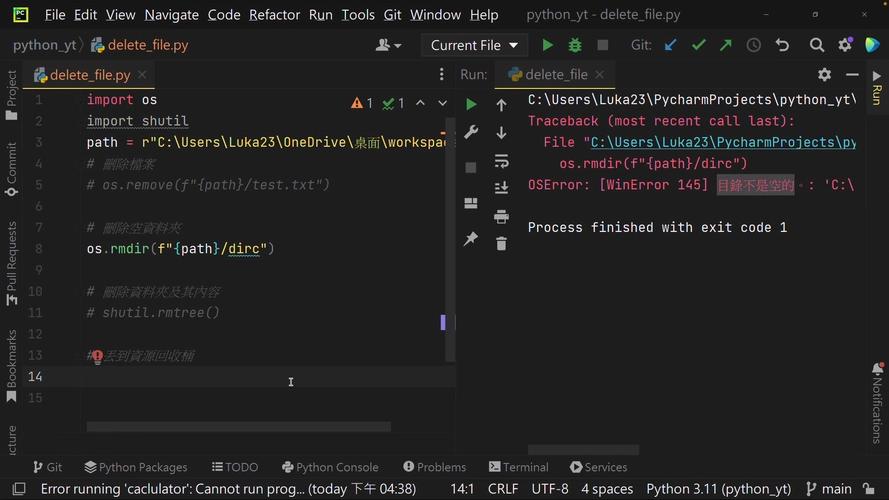

Interactive Development (Live Coding)

For development, rebuilding the image on every code change is slow. Instead, you can mount your local code directory into the container.

- Stop the previous container (docker stop <container_id> or Ctrl+C in the terminal where it's running).

- Run a new container in interactive mode:

      # The -v flag mounts your local app directory into the container's /app directory
      # The -it flag runs it in interactive mode
      docker run -it --rm -p 5000:5000 -v "$(pwd)/app:/app" my-python-app

  -v "$(pwd)/app:/app": Mounts the local app folder to the container's /app folder. Changes on your host are instantly reflected in the container.

  --rm: Automatically removes the container when you stop it. Great for development.

Now, you can edit main.py on your host, and the updated file is immediately visible inside the container. The running Flask process only picks up the change after a restart, unless its reloader is enabled (for example, by passing debug=True to app.run).

Using Docker Compose (For Multi-Service Apps)

Docker Compose is a tool for defining and running multi-container Docker applications. It uses a docker-compose.yml file to manage all your services (e.g., your web app, a database, a Redis cache).

While overkill for our single app, here's what a docker-compose.yml would look like:

docker-compose.yml

    version: '3.8'

    services:
      web:
        build: .  # Build the image from the Dockerfile in the current directory
        ports:
          - "5000:5000"
        volumes:
          - ./app:/app  # Mount the code for live-reloading
        # You might also set environment variables here
        # environment:
        #   - FLASK_ENV=development

To run with Compose:

    # Build and start the services in the background
    docker-compose up --build -d

    # View logs
    docker-compose logs -f

    # Stop and remove all containers
    docker-compose down

Environment Variables

Don't hardcode secrets or configuration in your code. Use environment variables.

app/main.py (modified)

    import os

    from flask import Flask

    app = Flask(__name__)

    # Get a message from an environment variable, with a default value
    message = os.environ.get('APP_MESSAGE', 'Hello from a Dockerized Python App!')

    @app.route('/')
    def hello():
        return message

    if __name__ == '__main__':
        app.run(host='0.0.0.0', port=5000)

You can pass these variables when running the container:

    # Using docker run
    docker run -p 5000:5000 -e APP_MESSAGE="Hello from a custom message!" my-python-app

    # Using docker-compose
    # (as shown in the example above)
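As the number of settings grows, it can help to read them all in one place and convert them to the right types. The following is a minimal sketch of that idea, not part of the app above. APP_MESSAGE comes from the example, while APP_PORT, APP_DEBUG, the Settings class, and load_settings are hypothetical names introduced here for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    message: str
    port: int
    debug: bool


def load_settings(env=None):
    """Build Settings from environment variables, falling back to defaults."""
    env = os.environ if env is None else env
    return Settings(
        message=env.get("APP_MESSAGE", "Hello from a Dockerized Python App!"),
        # APP_PORT and APP_DEBUG are assumed names, not used elsewhere in this guide.
        port=int(env.get("APP_PORT", "5000")),
        debug=env.get("APP_DEBUG", "false").strip().lower() in {"1", "true", "yes"},
    )
```

In main.py you could then call load_settings() once at startup and pass settings.port and settings.debug to app.run. Accepting an optional mapping instead of always reading os.environ keeps the function easy to test without touching the real environment.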

This is the foundation for creating robust, portable, and scalable Python applications using Docker.