Of course! The fit and predict methods are two of the most fundamental concepts in machine learning with Python, especially when using libraries like Scikit-learn.

Let's break them down with a clear analogy, a simple code example, and a detailed explanation.

The Analogy: A Student Learning for an Exam

Imagine you're training a student to take a math exam.

- `fit()`: The Learning Phase
  - You give the student a textbook (this is your training data).
  - The student reads the textbook, studies the examples, and tries to understand the underlying patterns and formulas. This is the "fitting" process. The model is learning the relationship between the problems (features) and the solutions (labels).
  - The student doesn't take the exam yet; they are just building their knowledge. After this phase, the "trained student" (the model) is ready.

- `predict()`: The Testing Phase
  - Now, you give the student a new, unseen practice exam (this is your test data or new data).
  - The student uses the knowledge they gained from the textbook to answer the questions on this new exam.
  - The answers the student writes down are the predictions. You can then compare these predictions to the actual correct answers to see how well the student learned.

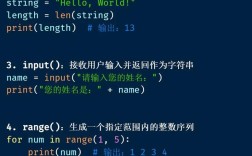

Code Example: Predicting House Prices

Let's use a simple linear regression model to predict house prices based on their size. This is a classic "Hello, World!" for machine learning.

We'll follow these standard steps:

- Import necessary libraries.

- Prepare the data (features `X` and target `y`).

- Split the data into training and testing sets.

- Create a machine learning model.

- Fit the model to the training data.

- Predict prices for the test data.

- Evaluate the model's performance.

```python
# 1. Import necessary libraries
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error

# 2. Prepare the data
# Let's say we have data on house sizes (in square feet) and their prices (in $1000s)
# X = Features (the input data)
# y = Target (the output we want to predict)
X = np.array([[1500], [1600], [1700], [1800], [1900], [2000], [2100], [2200]])
y = np.array([300, 320, 340, 360, 380, 400, 420, 440])

# 3. Split the data into training and testing sets
# We train the model on the training set and test it on the testing set.
# test_size=0.3 means 30% of the data will be used for testing.
# random_state ensures we get the same split every time we run the code.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

print(f"Training data (X_train): \n{X_train}")
print(f"Training labels (y_train): \n{y_train}\n")
print(f"Test data (X_test): \n{X_test}")
print(f"Test labels (y_test): \n{y_test}\n")

# 4. Create a machine learning model
# We are using a simple Linear Regression model.
model = LinearRegression()

# 5. Fit the model to the training data
# This is where the "learning" happens.
# The model studies the relationship between X_train (house sizes) and y_train (prices).
print("Fitting the model...")
model.fit(X_train, y_train)
print("Model fitting complete.\n")

# 6. Predict prices for the test data
# Now we use the trained model to make predictions on the unseen test data.
print("Making predictions on the test data...")
y_pred = model.predict(X_test)
print(f"Predicted prices: {y_pred}")
print(f"Actual prices: {y_test}\n")

# 7. Evaluate the model's performance
# We compare the predicted prices (y_pred) with the actual prices (y_test).
# A lower MSE means the model's predictions are closer to the actual values.
mse = mean_squared_error(y_test, y_pred)
print(f"Mean Squared Error (MSE): {mse}")

# You can also see the model's learned parameters
print(f"Coefficient (slope): {model.coef_[0]}")  # How much the price changes per sq ft
print(f"Intercept: {model.intercept_}")  # The base price
```
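A fitted model is not limited to the test split; the same `predict()` call works on any house size. The sketch below is a self-contained variant of the example above. The 2500 sq ft house is a hypothetical value chosen for illustration, and the model is fitted on all eight rows only to keep the snippet short.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Same toy data as above: size in square feet, price in $1000s
X = np.array([[1500], [1600], [1700], [1800], [1900], [2000], [2100], [2200]])
y = np.array([300, 320, 340, 360, 380, 400, 420, 440])

model = LinearRegression().fit(X, y)

# predict() expects a 2D array of shape (n_samples, n_features),
# so a single house is passed as [[size]].
new_house = np.array([[2500]])  # hypothetical size, not in the data
predicted_price = model.predict(new_house)[0]
print(f"Predicted price for 2500 sq ft: ${predicted_price:.1f}k")
```

Because the toy prices are exactly 0.2 times the size, the prediction comes out at about 500 (in $1000s). Real data would not line up this neatly.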

Detailed Breakdown

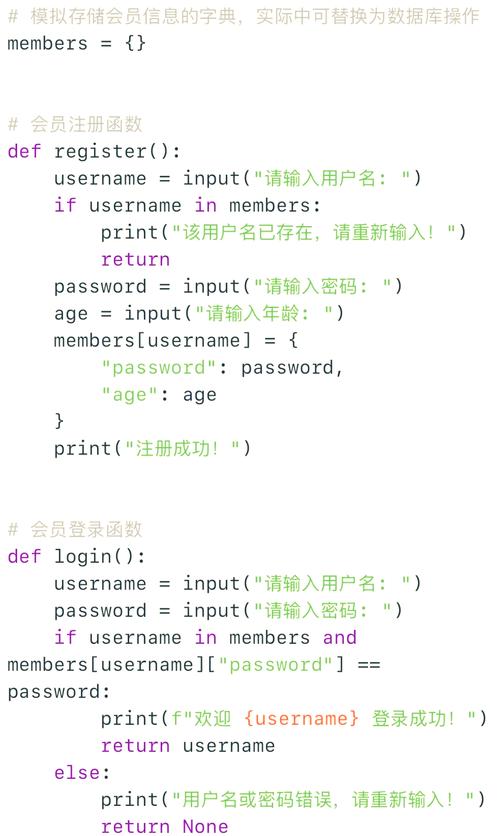

model.fit(X_train, y_train)

- Purpose: To train the model. This is the learning step.

- Arguments:
  - `X_train`: The features of the training data. These are the input variables (e.g., house size, number of bedrooms, age of the house).
  - `y_train`: The target values of the training data. This is the output you want the model to learn to predict (e.g., house price).

- What it does: The algorithm uses the training data to set its internal parameters (like the slope and intercept of a line) so that the resulting function maps `X_train` to `y_train` as closely as possible. For example, linear regression finds the best-fit line, the one that minimizes the sum of squared differences between the line's predictions and the actual data points. Scikit-learn's `LinearRegression` computes this line directly rather than by repeated adjustment; many other models do refine their parameters iteratively.
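To make the "best-fit line" idea concrete, here is a minimal sketch that compares what `fit()` learns with a direct least-squares solve in NumPy. The five training rows are an illustrative subset of the house data above, and `np.linalg.lstsq` is used here only as an independent check, not as a description of scikit-learn's internals.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Illustrative subset of the house data (size in sq ft, price in $1000s)
X_train = np.array([[1500], [1700], [1800], [2000], [2200]], dtype=float)
y_train = np.array([300, 340, 360, 400, 440], dtype=float)

model = LinearRegression()
model.fit(X_train, y_train)

# Solve the same least-squares problem directly with NumPy.
# A column of ones is appended so the solver can also estimate the intercept.
A = np.hstack([X_train, np.ones((len(X_train), 1))])
(slope, intercept), *_ = np.linalg.lstsq(A, y_train, rcond=None)

print(f"scikit-learn: slope={model.coef_[0]:.4f}, intercept={model.intercept_:.4f}")
print(f"NumPy lstsq:  slope={slope:.4f}, intercept={intercept:.4f}")
```

Both approaches should report the same slope and intercept up to floating-point rounding, which is what "fitting" means for this model.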

model.predict(X_test)

- Purpose: To use the trained model to make predictions on new, unseen data.

- Arguments:
  - `X_test`: The features of the data you want to make predictions for. This data must have the same number of features (columns), in the same order, as `X_train`.

- What it does: It takes the data you provide (`X_test`) and passes it through the function that the model learned during the `fit` phase. It doesn't change the model's parameters; it simply uses them to generate an output (`y_pred`). In our house price example, it takes a house size and outputs a predicted price.
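For linear regression specifically, "passing data through the learned function" amounts to multiplying by the learned slope and adding the intercept. The following sketch, which assumes the same illustrative subset of the house data, reproduces `predict()` by hand and checks that the parameters are untouched afterwards.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Illustrative split of the house data (size in sq ft, price in $1000s)
X_train = np.array([[1500], [1700], [1800], [2000], [2200]])
y_train = np.array([300, 340, 360, 400, 440])
X_test = np.array([[1600], [1900], [2100]])

model = LinearRegression().fit(X_train, y_train)

# Snapshot the learned parameters before predicting
coef_before = model.coef_.copy()
intercept_before = model.intercept_

y_pred = model.predict(X_test)

# For linear regression, predict() amounts to applying the learned line
manual_pred = X_test @ model.coef_ + model.intercept_

print("predict():", y_pred)
print("manual:   ", manual_pred)
print("parameters unchanged:",
      np.array_equal(coef_before, model.coef_) and intercept_before == model.intercept_)
```

Other model types apply a different learned function, but the contract is the same: `predict()` reads the fitted parameters and never updates them.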

Key Concepts and Best Practices

- Never `fit` on your test data! The whole point of the test set is to simulate new, unseen data. If you `fit` on it, you're essentially "cheating" by letting the model learn the answers to the test. This will give you an overly optimistic and misleading estimate of your model's performance.

- Data Splitting: The `train_test_split` function is crucial for evaluating your model's ability to generalize. A common split is 80% for training and 20% for testing.

- Model State: After you call `fit()`, the object (e.g., `model`) is a trained model. You can save this object to a file and use it later in a different application to make predictions without needing to retrain it.

- Other Common Methods:
  - `model.score(X_test, y_test)`: A convenience method that runs `predict` on `X_test` internally, compares the result with `y_test`, and returns a default metric (R² for regressors, accuracy for classifiers).
  - `transform(X)`: Provided by transformers such as `StandardScaler` or `PCA` rather than by predictive models. It applies a transformation to the data using parameters learned during `fit`. It doesn't learn anything new.
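The points above can be sketched together in one short script. It assumes the same illustrative split of the house data, uses Python's built-in `pickle` as one possible way to save a model (an in-memory round trip stands in for writing to a file), and uses `StandardScaler` purely to demonstrate `fit` followed by `transform`.

```python
import pickle

import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import r2_score
from sklearn.preprocessing import StandardScaler

# Illustrative split of the house data (size in sq ft, price in $1000s)
X_train = np.array([[1500], [1700], [1800], [2000], [2200]], dtype=float)
y_train = np.array([300, 340, 360, 400, 440], dtype=float)
X_test = np.array([[1600], [1900], [2100]], dtype=float)
y_test = np.array([320, 380, 420], dtype=float)

# Transformers learn their statistics in fit() and apply them in transform().
# The scaler is fitted on the training set only, then reused on the test set.
scaler = StandardScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)

model = LinearRegression().fit(X_train_scaled, y_train)

# score() predicts internally and returns R^2 for regressors
score = model.score(X_test_scaled, y_test)
manual_r2 = r2_score(y_test, model.predict(X_test_scaled))
print(f"score(): {score:.4f}, r2_score: {manual_r2:.4f}")

# Round-trip the fitted model through pickle; the restored copy
# predicts without being retrained.
restored = pickle.loads(pickle.dumps(model))
print("same predictions after reload:",
      np.allclose(restored.predict(X_test_scaled), model.predict(X_test_scaled)))
```

In a real project the scaler would be saved alongside the model, since new data must be transformed with the same learned statistics. Pickled files should only be loaded from sources you trust.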

Summary Table

| Method | Purpose | When to Use | Example |
|---|---|---|---|
| `fit()` | Train the model. Learn patterns from data. | Only on your training data (`X_train`, `y_train`). | `model.fit(X_train, y_train)` |
| `predict()` | Make predictions on new, unseen data. | On your test data (`X_test`) or new data you want to label. | `predictions = model.predict(X_test)` |