Of course! The term "Python Linux pipe" can refer to two main, but related, concepts:

- Python as a Consumer of Linux Pipes: Using Python to read data from a standard Unix pipe (stdin) or write data to one (stdout). This is the most common and fundamental use case.

- Python as a Creator of Linux Pipes: Using Python's subprocess module to create new pipes and connect them between commands, just like you would in a shell with |.

Let's break down both concepts with clear examples.

Concept 1: Python as a Consumer of a Pipe (Reading from stdin)

This is when you have a command in your Linux shell that produces output, and you want to pipe that output directly to a Python script for processing.

How it Works

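When you run a command like some_command | python my_script.py, the shell does three things:

- It creates a pipe and connects the standard output (stdout) of some_command to the standard input (stdin) of python my_script.py.

- It runs some_command.

- It runs python my_script.py.

The two commands run concurrently, so the script can start processing lines before some_command has finished.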
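The connection step can be illustrated with os.pipe(), Python's wrapper around the pipe() system call. This is a minimal single-process sketch, not what the shell literally executes: a real shell also starts the two commands and rewires their file descriptors. The helper name pipe_roundtrip is hypothetical.

```python
import os


def pipe_roundtrip(payload: bytes) -> bytes:
    """Write a small payload into a fresh pipe and read it back (hypothetical helper)."""
    # A pipe is a kernel buffer with a read end and a write end.
    read_fd, write_fd = os.pipe()
    try:
        # Plays the role of some_command's stdout.
        # Keep the payload small: a pipe has limited capacity, and a large
        # write would block until somebody reads from the other end.
        os.write(write_fd, payload)
    finally:
        # Closing the write end is what signals end-of-file to the reader.
        os.close(write_fd)
    # Plays the role of my_script.py's stdin: read until end-of-file.
    with os.fdopen(read_fd, "rb") as reader:
        return reader.read()


if __name__ == "__main__":
    print(pipe_roundtrip(b"hello from the writer\n"))
```

The reader only sees end-of-file once every copy of the write end has been closed, which is why pipelines depend on closing unused pipe ends.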

Inside your Python script, you can read from sys.stdin to get the data from the preceding command.
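As a minimal sketch of that idea, the filter below numbers each incoming line. The names number_lines and main are hypothetical. The function accepts any iterable of lines so it can be tried without a real pipe, and the demo at the bottom uses an in-memory stream as a stand-in for sys.stdin.

```python
import io
import sys
from typing import Iterable, Iterator, Optional, TextIO


def number_lines(lines: Iterable[str]) -> Iterator[str]:
    """Yield each input line prefixed with its 1-based line number."""
    for number, line in enumerate(lines, start=1):
        text = line.rstrip("\n")  # iteration keeps the trailing newline; drop it
        yield f"{number}: {text}"


def main(stream: Optional[TextIO] = None) -> None:
    # Default to sys.stdin, which is where the shell attaches the pipe.
    source = sys.stdin if stream is None else stream
    for numbered in number_lines(source):
        print(numbered)


if __name__ == "__main__":
    # Demo with an in-memory stream standing in for the pipe;
    # a real filter would call main() with no argument.
    main(io.StringIO("alpha\nbeta\n"))
```

In a real filter the last line would simply be main(), and the script (saved under a hypothetical name such as number_lines.py) could be run as some_command | python3 number_lines.py.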

Example: Counting unique IP addresses from an Apache log

Let's say you have a large Apache access log file access.log. You want to find the 10 most frequent IP addresses.

The Python Script (count_ips.py)

This script will read lines from stdin, extract the IP address from each line, count the occurrences, and print the top 10.

# count_ips.py
import sys
from collections import Counter


def main():
    ip_counter = Counter()

    # sys.stdin is a file-like object connected to the pipe;
    # iterating over it yields one line at a time.
    for line in sys.stdin:
        # A typical Apache log line looks like:
        # 192.168.1.100 - - [10/Oct/2025:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 1024
        # The IP address is the first item.
        try:
            ip = line.split()[0]
        except IndexError:
            # Skip malformed (blank) lines
            continue
        ip_counter[ip] += 1

    # Get the 10 most common IPs
    top_10_ips = ip_counter.most_common(10)

    print("--- Top 10 IP Addresses ---")
    for ip, count in top_10_ips:
        print(f"{ip}: {count}")


if __name__ == "__main__":
    main()

The Linux Command

Now, in your terminal, you can use grep to filter for successful requests (HTTP 200), then pipe that to your Python script.

# First, let's create a dummy log file for demonstration
cat > access.log << EOF
192.168.1.100 - - [10/Oct/2025:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 1024
10.0.0.5 - - [10/Oct/2025:13:55:37 +0000] "GET /about.html HTTP/1.1" 200 2048
192.168.1.100 - - [10/Oct/2025:13:55:38 +0000] "GET /image.jpg HTTP/1.1" 200 5120
10.0.0.5 - - [10/Oct/2025:13:55:39 +0000] "GET /index.html HTTP/1.1" 200 1024
8.8.8.8 - - [10/Oct/2025:13:55:40 +0000] "GET /api/data HTTP/1.1" 404 100
192.168.1.100 - - [10/Oct/2025:13:55:41 +0000] "GET /index.html HTTP/1.1" 200 1024
10.0.0.5 - - [10/Oct/2025:13:55:42 +0000] "GET /contact.html HTTP/1.1" 200 1500
EOF

# The pipe command
grep " 200 " access.log | python3 count_ips.py

Output:

--- Top 10 IP Addresses ---

192.168.1.100: 3

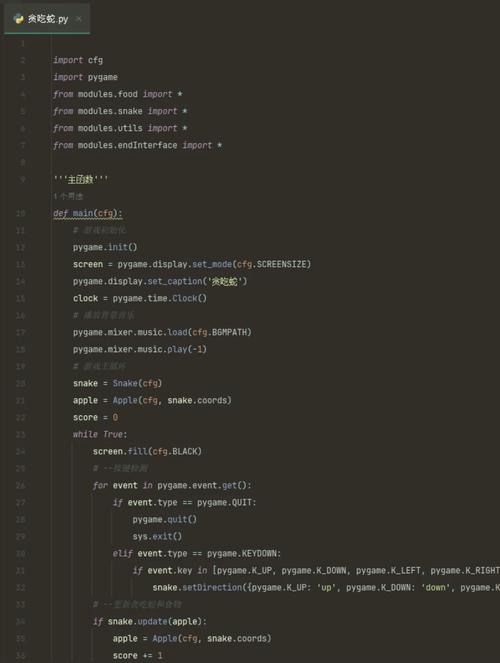

10.0.0.5: 2Concept 2: Python as a Creator of Pipes (Using subprocess)

Sometimes, you need to orchestrate a pipeline of commands from within a Python script. The subprocess module is the modern, powerful way to do this. The key is using subprocess.PIPE.

subprocess.PIPE is a special value that you can pass to the stdin, stdout, or stderr arguments of subprocess.Popen. It tells Popen to create a new pipe that your Python program can read from or write to; the pipe's end is then available as the stdin, stdout, or stderr attribute of the Popen object.
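A minimal sketch of subprocess.PIPE with a single child process follows. To avoid depending on any external command, the child is the current Python interpreter running a one-line program; CHILD_CODE and shout are hypothetical names chosen for illustration.

```python
import subprocess
import sys

# Child program: upper-cases whatever arrives on its stdin.
# sys.executable is used so the sketch does not rely on an external command.
CHILD_CODE = "import sys; sys.stdout.write(sys.stdin.read().upper())"


def shout(text: str) -> str:
    """Send text through a child process via pipes and return its output."""
    child = subprocess.Popen(
        [sys.executable, "-c", CHILD_CODE],
        stdin=subprocess.PIPE,   # our program writes into this pipe
        stdout=subprocess.PIPE,  # our program reads from this pipe
        text=True,               # exchange str instead of bytes
    )
    # communicate() writes the input, closes the child's stdin,
    # reads stdout until end-of-file, and waits for the child to exit.
    output, _ = child.communicate(text)
    if child.returncode != 0:
        raise RuntimeError(f"child exited with status {child.returncode}")
    return output


if __name__ == "__main__":
    print(shout("hello pipe"))
```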

How it Works

You typically use Popen to launch the first process in the pipeline, capturing its stdout. Then you launch the second process, feeding it the stdout of the first as its stdin. Finally, you read the stdout of the last process.
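The steps above can be sketched with just two processes. This is an illustrative reduction, not the full example: both children are small Python one-liners run with the current interpreter (an assumption made so that no external commands are needed), and the names PRODUCER, SORTER, and run_pipeline are hypothetical.

```python
import subprocess
import sys

# Two tiny child programs standing in for real commands.
PRODUCER = "print('banana'); print('apple'); print('cherry')"
SORTER = "import sys; sys.stdout.writelines(sorted(sys.stdin))"


def run_pipeline() -> str:
    """Equivalent in spirit to `producer | sorter`; returns the sorter's output."""
    producer = subprocess.Popen(
        [sys.executable, "-c", PRODUCER],
        stdout=subprocess.PIPE,  # capture so it can feed the next process
    )
    sorter = subprocess.Popen(
        [sys.executable, "-c", SORTER],
        stdin=producer.stdout,   # read end of the producer's pipe
        stdout=subprocess.PIPE,
        text=True,
    )
    # Drop the parent's copy of the read end so only the sorter holds it.
    producer.stdout.close()
    output, _ = sorter.communicate()  # read the last stage, then wait for it
    producer.wait()                   # reap the first stage as well
    return output


if __name__ == "__main__":
    print(run_pipeline(), end="")
```

Only the last process is read with communicate(); the earlier stages hand their output directly to the next process without passing through the Python program.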

Example: Finding the 5 largest files in a directory

Let's replicate the du -sh * | sort -rh | head -n 5 command using Python.

The Python Script (find_largest_files.py)

# find_largest_files.py
import glob
import subprocess
import sys


def main():
    try:
        # --- Step 1: Run `du -sh *` ---
        # This command lists file/directory sizes in human-readable format.
        # No shell is involved here, so a literal '*' would not be expanded;
        # glob.glob('*') does the expansion the shell would normally do.
        paths = sorted(glob.glob('*'))
        if not paths:
            print("No files or directories found.")
            return
        # stderr is left alone so that any `du` errors appear on the terminal.
        du_process = subprocess.Popen(
            ['du', '-sh', '--', *paths],
            stdout=subprocess.PIPE,  # Capture the output to feed `sort`
        )

        # --- Step 2: Run `sort -rh` ---
        # This command sorts the output from `du` in reverse human order (largest first).
        # Its stdin is connected to the stdout of the `du_process`.
        sort_process = subprocess.Popen(
            ['sort', '-rh'],
            stdin=du_process.stdout,  # Feed the output of `du` into `sort`
            stdout=subprocess.PIPE,
        )
        # Important: Allow `du_process` to receive a SIGPIPE if `sort_process` exits.
        # This is what happens in a real shell pipe.
        du_process.stdout.close()

        # --- Step 3: Run `head -n 5` ---
        # This command takes the first 5 lines from the sorted output.
        # Its stdin is connected to the stdout of the `sort_process`.
        head_process = subprocess.Popen(
            ['head', '-n', '5'],
            stdin=sort_process.stdout,
            stdout=subprocess.PIPE,
        )
        # Important: Allow `sort_process` to receive a SIGPIPE.
        sort_process.stdout.close()

        # --- Step 4: Get the final output ---
        # The output of `head_process` is the final result of our pipeline.
        final_output, _ = head_process.communicate()

        if final_output:
            print("--- Top 5 Largest Files/Directories ---")
            # decode from bytes to string and print
            print(final_output.decode('utf-8'))

        # returncode is None until a process has been waited on, so wait first.
        # A negative status means the process was killed by a signal; `sort` may
        # legitimately die from SIGPIPE once `head` has read its five lines.
        if du_process.wait() != 0:
            print(f"du exited with status {du_process.returncode}", file=sys.stderr)
        if sort_process.wait() > 0:
            print(f"sort exited with status {sort_process.returncode}", file=sys.stderr)

    except FileNotFoundError:
        print("Error: 'du', 'sort', or 'head' command not found. Make sure they are installed.", file=sys.stderr)
    except Exception as e:
        print(f"An error occurred: {e}", file=sys.stderr)


if __name__ == "__main__":
    main()

The Linux Command

You can run this Python script directly.

# Make sure you have some files/dirs in the current directory
echo "hello world" > file1.txt
mkdir -p a_very_long_directory_name_for_testing
echo "more data" > a_very_long_directory_name_for_testing/file2.txt

python3 find_largest_files.py

Sample output (exact sizes and path formatting depend on your filesystem and on what else is in the directory):

--- Top 5 Largest Files/Directories ---

4.0K ./a_very_long_directory_name_for_testing

13 ./file1.txt

Key Takeaways and Best Practices

- For Consuming a Pipe (Concept 1):
  - Use sys.stdin as a file-like object.
  - Iterate over it with a for loop (for line in sys.stdin:) for memory efficiency, especially with large inputs.
  - This is simple, efficient, and the standard way to make Python scripts chainable in a shell.

- For Creating a Pipe (Concept 2):
  - Use subprocess.Popen.
  - Pass subprocess.PIPE to stdin, stdout, or stderr to create a connection.
  - Chain the processes by assigning the stdout of one to the stdin of the next.
  - Crucially, close the stdout of the previous process after assigning it (proc.stdout.close()). This allows the previous process to receive SIGPIPE if the next process exits early (e.g., head finishes).
  - Use communicate() to read the final output. This is safer than calling .read() on individual pipes, because it reads stdout and stderr together and then waits for the process, which avoids deadlocks when a pipe buffer fills up.
  - Always check returncode and stderr for errors in your subprocesses. Note that returncode is None until the process has been waited on (with wait() or communicate()).
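The last point is easy to get wrong, because returncode stays None until the process has been waited on. A minimal sketch, assuming a child that writes to stderr and exits with status 3 (the child program FAILING_CHILD and the helper run_failing_child are made up for illustration):

```python
import subprocess
import sys

# Child program: prints an error message to stderr and exits with status 3.
FAILING_CHILD = "import sys; sys.stderr.write('boom\\n'); sys.exit(3)"


def run_failing_child():
    """Return (returncode before waiting, returncode after waiting, stderr text)."""
    child = subprocess.Popen(
        [sys.executable, "-c", FAILING_CHILD],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        text=True,
    )
    code_before = child.returncode  # None: nobody has waited on the child yet
    _, err = child.communicate()    # drains both pipes, then waits for exit
    return code_before, child.returncode, err


if __name__ == "__main__":
    before, after, err = run_failing_child()
    print(f"before waiting: {before!r}, after waiting: {after!r}")
    print(f"stderr: {err.strip()}")
```

Comparing returncode against 0 before waiting would report a failure for every process, since None != 0 is always true.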

By mastering these two concepts, you can make Python a powerful and flexible component in your Linux command-line toolkit.