Of course! Using auto_arima in Python is one of the most popular and effective ways to automatically find the best parameters for an ARIMA time series model. It saves you from the tedious process of manually identifying the orders (p, d, q).

Here's a comprehensive guide covering:

- What is auto_arima?
- Installation
- A Complete Step-by-Step Example
- Explanation of Key Parameters
- Interpreting the Results
- Making Forecasts
- Important Considerations and Best Practices

What is auto_arima?

auto_arima is a function from the pmdarima library. It automates the process of finding the optimal parameters for an ARIMA (AutoRegressive Integrated Moving Average) model.

An ARIMA(p, d, q) model is composed of three parts:

- p (AR order): The number of lagged observations included in the model (the autoregressive part).
- d (I order): The number of times the raw observations are differenced to make the time series stationary.
- q (MA order): The number of lagged forecast errors included in the model (the order of the moving average part).
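To make d concrete, here is a minimal NumPy sketch of how repeated differencing removes a polynomial trend. It uses plain NumPy, not pmdarima, and the quadratic series is a hypothetical example.

```python
import numpy as np

# Hypothetical series with a quadratic trend: y_t = 3 + 2t + 0.5t^2
t = np.arange(10, dtype=float)
y = 3.0 + 2.0 * t + 0.5 * t**2

# d = 1: first differences y_t - y_{t-1} still trend upward (now linearly)
diff1 = np.diff(y, n=1)

# d = 2: differencing twice leaves a constant series, so no trend remains
diff2 = np.diff(y, n=2)

print(diff1)
print(diff2)
```

Each round of differencing lowers the degree of a polynomial trend by one. That is why d is rarely larger than 2 in practice.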

Instead of you manually plotting ACF/PACF charts and trying different combinations, auto_arima searches through a range of possible values for p, d, and q and selects the combination that yields the best model based on a chosen information criterion (usually AIC).
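The core idea is to fit several candidate orders and keep the one with the lowest information criterion. This can be sketched without pmdarima. The sketch below is a simplified stand-in, not what auto_arima does internally: it fits pure AR(p) models by ordinary least squares and scores them with the Gaussian AIC, n·ln(RSS/n) + 2k, with constant terms dropped. auto_arima instead fits full (S)ARIMA models by maximum likelihood through statsmodels. The helper names and the simulated AR(2) data are hypothetical.

```python
import numpy as np

def ar_aic(y, p, max_p):
    """Gaussian AIC (up to an additive constant) of an AR(p) model fitted by OLS.

    Every candidate uses the same sample (observations max_p..n-1), so the
    AIC values are comparable across p.
    """
    y = np.asarray(y, dtype=float)
    target = y[max_p:]
    n = len(target)
    # Design matrix: intercept column plus lags 1..p
    cols = [np.ones(n)] + [y[max_p - k:len(y) - k] for k in range(1, p + 1)]
    X = np.column_stack(cols)
    beta, *_ = np.linalg.lstsq(X, target, rcond=None)
    rss = float(np.sum((target - X @ beta) ** 2))
    k = p + 2  # AR coefficients + intercept + noise variance
    return n * np.log(rss / n) + 2 * k

def select_ar_order(y, max_p=5):
    """Try every p in 0..max_p and keep the one with the lowest AIC."""
    scores = {p: ar_aic(y, p, max_p) for p in range(max_p + 1)}
    best = min(scores, key=scores.get)
    return best, scores

# Simulated AR(2) process: y_t = 0.6 y_{t-1} - 0.3 y_{t-2} + e_t
rng = np.random.default_rng(0)
n = 500
e = rng.normal(size=n)
y = np.zeros(n)
for t in range(2, n):
    y[t] = 0.6 * y[t - 1] - 0.3 * y[t - 2] + e[t]

best_p, scores = select_ar_order(y, max_p=5)
print("selected p:", best_p)
```

The 2k term is what stops the search from always choosing the largest model. Extra lags lower the RSS a little, but each one costs two AIC units.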

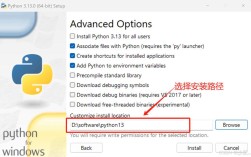

Installation

First, install the pmdarima library. The examples below also use statsmodels, matplotlib, pandas, and NumPy:

```bash
pip install pmdarima
pip install statsmodels matplotlib pandas numpy
```

A Complete Step-by-Step Example

Let's walk through a complete workflow using a sample dataset. We'll use the famous "Airline Passengers" dataset, which has a clear trend and seasonality.

Step 1: Import Libraries and Load Data

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from pmdarima import auto_arima
from statsmodels.tsa.seasonal import seasonal_decompose

# Load the data
# You can download it from: https://www.kaggle.com/datasets/rakannimer/air-passengers
# Or use this direct link to a simple CSV with 'Month' and 'Passengers' columns
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/airline-passengers.csv'
df = pd.read_csv(url, parse_dates=['Month'], index_col='Month')

# Check the data
print(df.head())

# Plot the raw series
df.plot(figsize=(12, 6), title='Airline Passengers Over Time')
plt.ylabel('Number of Passengers')
plt.show()
```
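Step 1 relies on parse_dates and index_col to turn the Month column into a DatetimeIndex, which Step 5 later extends month by month. The sketch below checks that parsing offline on a three-row in-memory CSV with hypothetical values in the same Month,Passengers layout. It also adds asfreq('MS'), an optional extra step that is an assumption here and not part of the workflow above. That call records the month-start frequency on the index.

```python
import io
import pandas as pd

# Hypothetical three-row CSV in the same layout as the airline file
csv_text = """Month,Passengers
1949-01,100
1949-02,110
1949-03,120
"""

df = pd.read_csv(io.StringIO(csv_text), parse_dates=["Month"], index_col="Month")

# Optional: attach an explicit month-start frequency to the DatetimeIndex
df = df.asfreq("MS")
print(df.index)
```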

Step 2: Decompose the Time Series (Optional but Recommended)

This helps us visualize the trend, seasonality, and residuals. It gives us a good idea of whether we should use a seasonal model like SARIMA.

```python
# Decompose the time series into trend, seasonal, and residual components
decomposition = seasonal_decompose(df['Passengers'], model='multiplicative', period=12)
fig = decomposition.plot()
fig.set_size_inches(14, 7)
plt.show()
```

You'll see a clear upward trend and a strong seasonal pattern that repeats every 12 months. This strongly suggests a SARIMA (Seasonal ARIMA) model. auto_arima can search the seasonal orders (P, D, Q) automatically, but you must supply the seasonal period m yourself (m=12 here).
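Seasonal differencing (the D in (P, D, Q, m)) subtracts the value from one season earlier: y_t − y_{t−m}. The minimal NumPy sketch below applies it to a hypothetical monthly series with an additive 12-month pattern. The helper name is hypothetical. For a multiplicative pattern like the airline data, you would typically take logs first.

```python
import numpy as np

def seasonal_difference(y, m=12, D=1):
    """Apply seasonal differencing D times: y_t - y_{t-m}."""
    y = np.asarray(y, dtype=float)
    for _ in range(D):
        y = y[m:] - y[:-m]
    return y

# Hypothetical monthly series: level + linear trend + a fixed 12-month pattern
months = np.arange(48)
pattern = np.array([0, 2, 5, 3, 1, -1, 4, 6, 2, 0, -2, -4], dtype=float)
y = 100.0 + 1.5 * months + np.tile(pattern, 4)

# One seasonal difference removes the repeating pattern. The linear trend
# leaves a constant 1.5 * 12 = 18 behind, which a further non-seasonal
# difference (d=1) would reduce to zero.
z = seasonal_difference(y, m=12, D=1)
print(z[:5])
```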

Step 3: Train the auto_arima Model

This is the core step. We'll let auto_arima find the best parameters for us.

```python
# error_action='ignore' skips any order that fails to fit and continues the search.
# trace=True prints the fitting progress of each model.
# seasonal=True lets auto_arima search the seasonal orders (P, D, Q).
# m=12 is the seasonal period (monthly data with a yearly pattern).
# stepwise=True speeds up the search by moving to neighboring orders
# instead of trying every combination.
stepwise_fit = auto_arima(
    df['Passengers'],
    error_action='ignore',    # don't stop if an order fails to fit
    suppress_warnings=True,   # hide convergence warnings
    seasonal=True,            # fit a seasonal ARIMA
    m=12,                     # seasonal period (12 for monthly data)
    stepwise=True,            # faster, stepwise search
    trace=True                # print the result of each fit
)

# Print the summary of the best model found
print(stepwise_fit.summary())
```

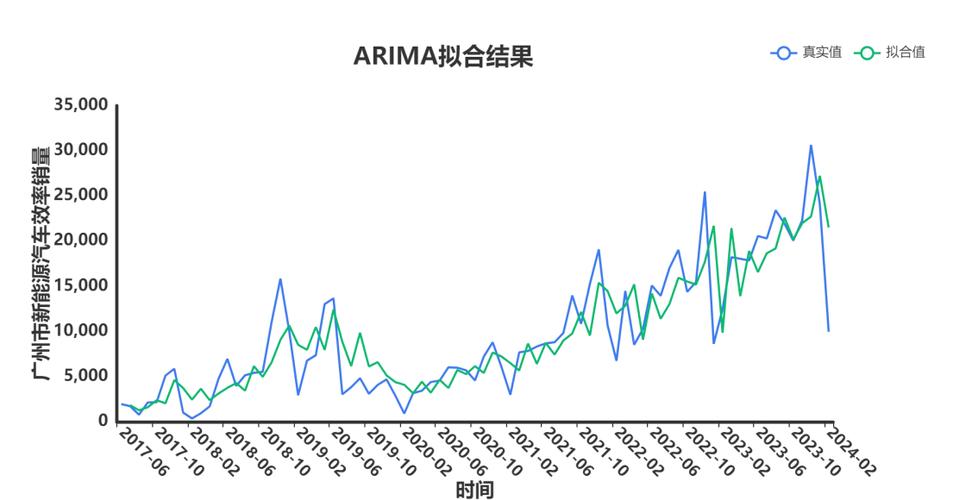
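With stepwise=True, auto_arima does not try every combination of orders. pmdarima's stepwise search follows the approach of Hyndman and Khandakar (2008), also used by R's auto.arima. It moves from the current best model to neighboring orders and stops when no neighbor improves the information criterion. The sketch below is a deliberately simplified illustration of that idea, not pmdarima's implementation. It only varies p and q by ±1, and it scores orders with a hypothetical toy_score function instead of fitting models.

```python
from itertools import product

def stepwise_search(score, start=(2, 2), max_order=(5, 5)):
    """Greedy neighborhood search over (p, q) that minimizes `score`."""
    current = start
    best = score(current)
    evaluated = {current: best}  # cache so no order is scored twice
    while True:
        p, q = current
        # Neighbors: change p and/or q by +/-1, staying within [0, max]
        neighbors = [
            (p + dp, q + dq)
            for dp, dq in product((-1, 0, 1), repeat=2)
            if (dp, dq) != (0, 0)
            and 0 <= p + dp <= max_order[0]
            and 0 <= q + dq <= max_order[1]
        ]
        for cand in neighbors:
            if cand not in evaluated:
                evaluated[cand] = score(cand)
        candidate = min(neighbors, key=lambda o: evaluated[o])
        if evaluated[candidate] >= best:
            # No neighbor improves on the current model: stop here
            return current, best, evaluated
        current, best = candidate, evaluated[candidate]

def toy_score(order):
    """Hypothetical stand-in for the AIC of an ARIMA(p, d, q) fit."""
    p, q = order
    return 1000 + 3 * (p - 1) ** 2 + 2 * q ** 2 + 2 * (p + q)

order, best, evaluated = stepwise_search(toy_score)
print(order, best, len(evaluated))  # (1, 0) 1002 14
```

Here only 14 of the 36 orders in the 6×6 grid are scored, which is the kind of saving stepwise=True buys. The trade-off is that a greedy search can stop at a local minimum when the score surface is less well behaved than this toy one.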

Step 4: Interpret the Results

After running the code above, you'll see a log of models being tried and their AIC scores. At the end, auto_arima will output a summary of the best model it found.

Example output (illustrative only; the models tried, their scores, and the final orders depend on your data and on your pmdarima and statsmodels versions):

```text
Fit ARIMA: order=(1, 1, 1) seasonal_order=(0, 1, 1, 12); AIC=1022.723, BIC=1035.820, Fit time=0.529 seconds
Fit ARIMA: order=(0, 1, 0) seasonal_order=(0, 1, 1, 12); AIC=1033.528, BIC=1040.418, Fit time=0.114 seconds
... (more models) ...
Fit ARIMA: order=(1, 1, 0) seasonal_order=(1, 1, 1, 12); AIC=1008.891, BIC=1022.503, Fit time=0.847 seconds
Fit ARIMA: order=(0, 1, 1) seasonal_order=(1, 1, 1, 12); AIC=1009.599, BIC=1023.211, Fit time=1.051 seconds
Total fit time: 20.343 seconds

                               SARIMAX Results
==============================================================================
Dep. Variable:                  Passengers   No. Observations:            144
Model:      SARIMAX(1, 1, 0)x(0, 1, 1, 12)   Log Likelihood          -501.446
Date:                                  ...   AIC                     1008.891
Time:                                  ...   BIC                     1022.503
Sample:                         01-01-1949   HQIC                    1014.416
                              - 12-01-1960
Covariance Type:                       opg
==============================================================================
                 coef    std err          z      P>|z|      [0.025      0.975]
------------------------------------------------------------------------------
ar.L1          0.4041      0.089      4.543      0.000       0.230       0.578
ma.S.L12      -0.6137      0.079     -7.788      0.000      -0.768      -0.459
sigma2        48.2370      3.436     14.037      0.000      41.497      54.977
===================================================================================
Ljung-Box (Q):                        8.33   Jarque-Bera (JB):                 6.19
Prob(Q):                              0.80   Prob(JB):                        0.045
Heteroskedasticity (H):               1.22   Skew:                            -0.43
Prob(H) (two-sided):                  0.31   Kurtosis:                         3.34
===================================================================================

Warnings:
[1] Covariance matrix calculated using the outer product of gradients (complex-step).
```

What to look for in the summary:

- Model: SARIMAX(1, 1, 0)x(0, 1, 1, 12) is the best model found.
  - Non-seasonal part (p, d, q): (1, 1, 0)
  - Seasonal part (P, D, Q, m): (0, 1, 1, 12)
- AIC (Akaike Information Criterion): This is the metric auto_arima uses by default to compare models. Lower is better. It balances goodness of fit against complexity by penalizing the number of estimated parameters.
- Coefficients (ar.L1, ma.S.L12): These are the fitted values for the non-seasonal AR term and the seasonal MA term. The P>|z| column shows each term's p-value. A value below 0.05 is conventionally read as the term being statistically significant.
- sigma2: This is the estimated variance of the residuals (the model's error term).
- Ljung-Box (Q): Tests for autocorrelation in the residuals. A high p-value (Prob(Q) > 0.05) is good. It means there is no evidence of leftover autocorrelation, so the residuals look like white noise (no pattern left to model).
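The diagnostics above can be reproduced by hand. The sketch below uses plain NumPy and the standard library, not statsmodels. It recomputes three things:

- the AIC, from the example summary's log-likelihood;
- the z statistic, two-sided p-value and 95% interval for ar.L1;
- a Ljung-Box Q statistic.

It makes two assumptions. First, k = 3 counts ar.L1, ma.S.L12 and sigma2 as estimated parameters; this reproduces the reported AIC up to rounding. Second, h = 10 lags is an arbitrary choice for the Ljung-Box test. The helper names are hypothetical. In practice, statsmodels provides acorr_ljungbox in statsmodels.stats.diagnostic. The p-value for Q comes from a chi-squared distribution with h degrees of freedom, reduced by the number of fitted ARMA terms when you test model residuals.

```python
import math
import numpy as np

def aic(log_likelihood, k):
    """Akaike Information Criterion: 2k - 2 ln(L)."""
    return 2 * k - 2 * log_likelihood

def wald_z(coef, std_err):
    """z statistic, two-sided normal p-value and 95% interval for one coefficient."""
    z = coef / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    ci = (coef - 1.96 * std_err, coef + 1.96 * std_err)
    return z, p_value, ci

def ljung_box_q(resid, h=10):
    """Ljung-Box statistic Q = n(n+2) * sum_{k=1..h} r_k^2 / (n-k)."""
    x = np.asarray(resid, dtype=float)
    x = x - x.mean()
    n = len(x)
    denom = np.dot(x, x)
    r = np.array([np.dot(x[k:], x[:-k]) / denom for k in range(1, h + 1)])
    return n * (n + 2) * np.sum(r**2 / (n - np.arange(1, h + 1)))

# Numbers taken from the example summary (3 parameters: ar.L1, ma.S.L12, sigma2)
print(round(aic(-501.446, k=3), 3))  # 1008.892 (summary shows 1008.891; rounding)
z, p_value, ci = wald_z(0.4041, 0.089)
print(z, p_value, ci)

# Ljung-Box Q on white noise vs. on strongly autocorrelated "residuals"
rng = np.random.default_rng(1)
white = rng.normal(size=500)
ar1 = np.zeros(500)
for t in range(1, 500):
    ar1[t] = 0.7 * ar1[t - 1] + white[t]
print(ljung_box_q(white), ljung_box_q(ar1))
```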

Step 5: Make Forecasts

Now that we have our trained model (stepwise_fit), we can use it to make predictions.

```python
# Make predictions for the next 12 months
n_periods = 12
forecast, conf_int = stepwise_fit.predict(n_periods=n_periods, return_conf_int=True)

# Build a monthly date index for the forecast horizon
index_of_forecast = pd.date_range(df.index[-1] + pd.DateOffset(months=1), periods=n_periods, freq='MS')

# np.asarray makes this work whether predict() returns a NumPy array or a
# pandas Series, so the values always line up with our own date index
forecast_series = pd.Series(np.asarray(forecast), index=index_of_forecast)

# One row per forecast step: column 0 = lower bound, column 1 = upper bound
conf_int = np.asarray(conf_int)

# Plot the results
plt.figure(figsize=(12, 6))
plt.plot(df['Passengers'], label='Observed')
plt.plot(forecast_series, label='Forecast', color='red')
plt.fill_between(index_of_forecast,
                 conf_int[:, 0],
                 conf_int[:, 1], color='pink', alpha=0.3, label='95% Confidence Interval')
plt.title('Airline Passengers Forecast')
plt.xlabel('Date')
plt.ylabel('Passengers')
plt.legend()
plt.show()
```

This plot will show the historical data, the forecasted values for the next year, and the confidence interval around the forecast.
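The band in the plot widens with the forecast horizon. pmdarima takes its intervals from the fitted statsmodels SARIMAX model. The sketch below only covers the simplest case, a random walk (ARIMA(0, 1, 0)) with Gaussian shocks of known standard deviation, which is an assumption made for illustration. It shows why uncertainty grows: the h-step error is the sum of h independent shocks. The function name and numbers are hypothetical.

```python
import math

def random_walk_intervals(last_value, sigma, horizon, z=1.96):
    """Point forecasts and 95% intervals for a random walk, ARIMA(0, 1, 0).

    The h-step forecast error is the sum of h independent shocks, so its
    variance is h * sigma^2 and the interval half-width grows like sqrt(h).
    """
    rows = []
    for h in range(1, horizon + 1):
        half_width = z * sigma * math.sqrt(h)
        rows.append((h, last_value, last_value - half_width, last_value + half_width))
    return rows

# Hypothetical numbers: last observation 100, shock standard deviation 10
for h, point, lower, upper in random_walk_intervals(100.0, 10.0, horizon=4):
    print(f"h={h}: forecast={point:.1f}, 95% interval=[{lower:.1f}, {upper:.1f}]")
```

The interval four steps ahead is twice as wide as the one-step interval (√4 = 2). Seasonal and autoregressive terms change the exact shape, but the widening pattern is the same.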

Explanation of Key auto_arima Parameters

| Parameter | Description | Default (recent pmdarima releases) |
|---|---|---|
| y | The time series data (a pandas Series or NumPy array). | Required |
| start_p, start_q | Starting values for p and q to begin the search. | 2 |
| max_p, max_q | Maximum values for p and q to search. | 5 |
| d, D | The order of differencing and seasonal differencing. If None, auto_arima estimates them with statistical tests: by default a KPSS test for d (test='kpss') and an OCSB test for D (seasonal_test='ocsb'). | None |
| m | The number of periods in a season. Crucial for seasonal data (e.g., 12 for monthly, 4 for quarterly). | 1 (no seasonality) |
| seasonal | Whether to fit a seasonal ARIMA. It has no effect when m=1, so set m as well if you suspect seasonality. | True |
| stepwise | If True, performs a stepwise search, which is much faster. If False, it tries all combinations within the order limits. | True |
| information_criterion | The metric used for model selection: 'aic', 'bic', 'hqic', or 'oob'. | 'aic' |
| trace | If True, prints the status of the search. | False |
| error_action | What to do if a model fails to fit: 'warn', 'raise', 'ignore', or 'trace'. 'ignore' is common to prevent the search from stopping. | 'trace' |
| suppress_warnings | If True, suppresses warnings during model fitting. | True |

Important Considerations and Best Practices

- Stationarity is key: auto_arima chooses the differencing orders (d and D) for you, but differencing does not stabilize a variance that grows with the level of the series. When the seasonal swings grow as the series grows (as in the airline data), it is often good practice to apply a log or Box-Cox transformation before fitting. pmdarima provides transformers for this (for example, BoxCoxEndogTransformer in pmdarima.preprocessing) that can be chained with an ARIMA model in a pmdarima Pipeline.

- Data splitting: For a more rigorous evaluation, split your time series into a training set and a test set in time order (no shuffling). Fit auto_arima only on the training set, then forecast the test period and compare the forecast with the actual values using an error metric such as MAE or RMSE.

```python
from sklearn.metrics import mean_absolute_error

# Example of a chronological train/test split (80% / 20%)
y = df['Passengers']
train_size = int(len(y) * 0.8)
train, test = y.iloc[:train_size], y.iloc[train_size:]

# Fit on training data only
model = auto_arima(train, seasonal=True, m=12, trace=True, error_action='ignore')

# Forecast over the test period
forecast, conf_int = model.predict(n_periods=len(test), return_conf_int=True)

# Evaluate (e.g., using Mean Absolute Error)
mae = mean_absolute_error(test, forecast)
print(f"MAE: {mae}")
```

- Understand the model: Don't use the output blindly. Look at the summary, and check the p-values of the coefficients and the Ljung-Box test. A model with insignificant coefficients or autocorrelated residuals may not be the best choice, even if it has a low AIC.

- stepwise=True is a good default: The stepwise algorithm is much faster than an exhaustive search (stepwise=False). For most problems it finds a model whose AIC is close to the best in the full grid, although it is not guaranteed to find the global optimum.
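The log and Box-Cox transformations mentioned under "Stationarity is key" are simple to write down: (y^λ − 1)/λ for λ ≠ 0, and ln(y) for λ = 0. The sketch below is a manual NumPy version for illustration only. The helper names are hypothetical, and λ is fixed by hand rather than estimated. In practice you might use scipy.stats.boxcox, which can also estimate λ by maximum likelihood, or pmdarima's BoxCoxEndogTransformer. The workflow is to transform the series, fit auto_arima, forecast, and then back-transform the forecasts. Note that back-transformed point forecasts correspond roughly to the median rather than the mean on the original scale.

```python
import numpy as np

def boxcox(y, lam):
    """Box-Cox transform: (y**lam - 1) / lam, or log(y) when lam == 0."""
    y = np.asarray(y, dtype=float)
    if np.any(y <= 0):
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        return np.log(y)
    return (y**lam - 1.0) / lam

def inv_boxcox(z, lam):
    """Inverse transform, used to map forecasts back to the original scale."""
    z = np.asarray(z, dtype=float)
    if lam == 0:
        return np.exp(z)
    return (lam * z + 1.0) ** (1.0 / lam)

y = np.array([112.0, 118.0, 132.0, 129.0, 121.0])  # sample positive values
for lam in (0.0, 0.5):
    z = boxcox(y, lam)
    print(lam, np.round(z, 3))
    # Round trip: transforming and back-transforming recovers the data
    assert np.allclose(inv_boxcox(z, lam), y)
```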