Of course! The term "bottleneck" in Python refers to a specific part of a program that is responsible for the majority of its execution time or resource consumption. Identifying and optimizing these bottlenecks is a critical skill for writing high-performance Python applications.

Here’s a comprehensive guide covering what bottlenecks are, how to find them, and how to fix them.

What is a Bottleneck?

A bottleneck is the limiting factor that prevents a system from achieving higher performance. In software, this is almost always a piece of code that is disproportionately slow compared to the rest of the application.

Analogy: Imagine a highway with four lanes. For most of the journey, cars flow smoothly. However, there's a single-lane section that causes a massive traffic jam. That single-lane section is the bottleneck. No matter how fast the cars are or how good the rest of the highway is, the overall speed is limited by that bottleneck.

In Python, bottlenecks can be caused by:

- Slow Algorithms: Using an O(n²) algorithm when an O(n log n) one is available.

- I/O Operations: Reading/writing files, making network requests, or querying a database.

- Inefficient Loops: Repeatedly calling slow Python functions inside a loop.

- Unnecessary Computations: Recalculating the same values over and over.

- Memory Issues: Frequent memory allocation/deallocation or using too much memory, causing swapping.
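As a small illustration of the "Unnecessary Computations" item, the sketch below caches the results of a recursive function with functools.lru_cache so each value is computed only once. The function name fib is a hypothetical example, not something from a real application.

```python
from functools import lru_cache


@lru_cache(maxsize=None)  # remember every result; fine for a small demo
def fib(n):
    """Naive recursive Fibonacci; without the cache it recomputes subproblems."""
    return n if n < 2 else fib(n - 1) + fib(n - 2)


result = fib(30)
info = fib.cache_info()
print(f"fib(30) = {result}")
print(f"cache hits: {info.hits}, cache misses: {info.misses}")
```

Without the decorator, the same call makes more than a million recursive calls; with it, each value of n is computed once and later requests are served from the cache.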

How to Find Bottlenecks (Profiling)

You can't optimize what you can't measure. The process of finding bottlenecks is called profiling. Python has several excellent built-in and third-party tools for this.

A. The Built-in timeit Module

Best for measuring the execution time of a small, specific snippet of code.

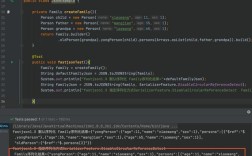

    import timeit

    # A slow function built on nested Python loops
    def slow_function():
        total = 0
        for i in range(1000):
            for j in range(1000):
                total += i * j
        return total

    # An equivalent function using a generator expression with sum()
    # (for demonstration; measure to see how much faster it really is)
    def fast_function():
        return sum(i * j for i in range(1000) for j in range(1000))

    # Time the slow function (total for 10 calls)
    time_slow = timeit.timeit(slow_function, number=10)
    print(f"Slow function took: {time_slow:.4f} seconds")

    # Time the fast function (total for 10 calls)
    time_fast = timeit.timeit(fast_function, number=10)
    print(f"Fast function took: {time_fast:.4f} seconds")
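A single timing run can be noisy. One common approach, sketched here under the assumption that a scaled-down workload is representative, is to run several trials with timeit.repeat and keep the fastest one. The function sum_of_products and its parameter n are illustrative names.

```python
import timeit


def sum_of_products(n=200):
    """Nested-loop workload, scaled down so the example finishes quickly."""
    total = 0
    for i in range(n):
        for j in range(n):
            total += i * j
    return total


CALLS_PER_TRIAL = 5

# repeat() returns one total time per trial; each trial calls the function
# CALLS_PER_TRIAL times.
trials = timeit.repeat(sum_of_products, number=CALLS_PER_TRIAL, repeat=3)

# The minimum is usually the most stable figure, because slower trials
# mostly reflect interference from other processes.
best_per_call = min(trials) / CALLS_PER_TRIAL
print(f"Best of {len(trials)} trials: {best_per_call * 1e3:.3f} ms per call")
```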

B. The Built-in cProfile Module

This is the most common and powerful tool for profiling an entire script. It gives you a detailed breakdown of how many times each function was called and how much time it spent.

How to use it:

- Save your code in a file (e.g., my_app.py).

- Run it from the command line.

    # Run the profiler and save the results to a file
    # (the file holds binary pstats data, despite the .txt name)
    python -m cProfile -o profile_output.txt my_app.py

    # Or print the results directly in the terminal
    # (add -s cumulative to sort by cumulative time)
    python -m cProfile my_app.py

How to read the output: The output has several key columns:

- ncalls: Number of calls.

- percall: tottime/ncalls or cumtime/ncalls.

- tottime: Total time spent in this function (excluding sub-functions).

- cumtime: Cumulative time spent in this function and all sub-functions. This is the most important column for finding bottlenecks.

You can sort the output by cumtime to see which functions are taking the most time overall.

    # Use a script to sort the profile output by cumulative time
    import pstats

    p = pstats.Stats('profile_output.txt')
    p.sort_stats('cumulative').print_stats(10)  # Print the top 10 offenders
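The profiler can also be driven from inside a program, which helps when only one part of the code is of interest. The following is a minimal sketch using cProfile.Profile and pstats from the standard library; busy_work and main are placeholder functions invented for the example.

```python
import cProfile
import io
import pstats


def busy_work(n=50_000):
    """Placeholder CPU-bound function."""
    return sum(i * i for i in range(n))


def main():
    results = []
    for _ in range(5):
        results.append(busy_work())
    return results


# Profile only the call to main()
profiler = cProfile.Profile()
profiler.enable()
main()
profiler.disable()

# Write the sorted report into a string buffer instead of stdout
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer)
stats.strip_dirs().sort_stats("cumulative").print_stats(5)

report = buffer.getvalue()
print(report)
```

Capturing the report in a string makes it easy to log it or save it alongside other diagnostics.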

C. The line_profiler Third-Party Module

cProfile tells you which function is slow, but line_profiler tells you which line within that function is slow. This is incredibly useful for pinpointing the exact source of a bottleneck.

Installation:

pip install line_profiler

How to use it:

- Add the @profile decorator to any function you want to analyze (you don't need to import it).

- Run the profiler from the command line.

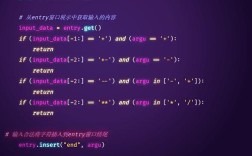

    # my_app.py

    @profile  # <-- Add this decorator (kernprof provides it at run time)
    def process_data(data):
        result = []
        for item in data:
            # This line is likely a bottleneck
            processed_item = [x * 2 for x in item]
            result.append(processed_item)
        return result

    if __name__ == "__main__":
        large_data = [[i for i in range(1000)] for _ in range(1000)]
        process_data(large_data)

    # Run the script under kernprof: -l enables line-by-line profiling,
    # -v prints the results when the run finishes
    kernprof -l -v my_app.py

    # Output will show line-by-line timing information
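Because kernprof supplies the profile name only while it is running the script, a decorated script raises a NameError under plain python. A common workaround, sketched below on the assumption that kernprof -l places profile in the builtins module, is to fall back to a do-nothing decorator.

```python
import builtins

# Under `kernprof -l`, `profile` is assumed to be available via builtins.
# When the script runs with plain `python`, use a no-op decorator instead.
profile = getattr(builtins, "profile", lambda func: func)


@profile
def process_data(data):
    """Double every number in a list of lists."""
    return [[x * 2 for x in item] for item in data]


sample_result = process_data([[1, 2], [3]])
print(sample_result)
```

With this guard in place, the same file runs unchanged both with and without the profiler.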

Common Bottlenecks in Python and How to Fix Them

Once you've identified the bottleneck, here are common scenarios and their solutions.

Bottleneck 1: Slow Loops and Inefficient Iteration

Loops written in pure Python are much slower than equivalent loops in compiled languages like C or Rust, because every iteration goes through the interpreter. If you're doing heavy math or data manipulation in a pure Python loop, it's a prime candidate for optimization.

Problem:

    import math

    def compute_sines_slow(data):
        results = []
        for x in data:
            # math.sin is a C function, but the loop overhead is high
            results.append(math.sin(x))
        return results

Solutions:

- Use List/Generator Comprehensions: More "Pythonic" and often faster than a manual for loop.

    def compute_sines_faster(data):
        return [math.sin(x) for x in data]

- Use NumPy (for Numerical Data): NumPy is the standard choice for numerical and scientific computing in Python. Its operations run in highly optimized C code under the hood, so the per-element Python loop overhead disappears.

    import numpy as np

    # Assuming data is a list, convert it to a NumPy array
    def compute_sines_numpy(data):
        np_data = np.array(data)
        return np.sin(np_data)  # This single line replaces the entire loop

Performance Gain: For large arrays, NumPy is often 10x to 100x faster for these kinds of operations. The exact gain depends on the array size and the operation, so measure it on your own data.

Bottleneck 2: I/O-Bound Operations

If your program spends most of its time waiting for the disk, a network, or a database, it's I/O-bound. The CPU is idle while waiting for the I/O to complete.

Problem: Reading a large file line by line and processing it.

    def process_large_file_slow(filepath):
        results = []
        with open(filepath, 'r') as f:
            for line in f:
                # Some processing that is fast, but the bottleneck is reading the file
                processed_line = line.strip().upper()
                results.append(processed_line)
        return results

Solutions:

- Use Buffered I/O (Default): Python's file objects are already buffered, so iterating over a file opened with open(...) is generally efficient. The real bottleneck might be the slowness of the disk itself.

- Use Multi-threading/Async I/O: Since the program is waiting, you can overlap that waiting by performing several I/O operations concurrently. This pays off when there are many independent operations (for example, several files or network requests); it does little for a single sequential read.

  - threading module: Good for concurrent I/O tasks. Note: Due to the Global Interpreter Lock (GIL), only one thread can execute Python bytecode at a time. However, for I/O-bound tasks, the thread releases the GIL while waiting, so other threads can run.

  - asyncio module: A widely used option for high-concurrency network I/O in Python. It uses a single thread and an event loop to manage many tasks, making it very lightweight.
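To make the threading point concrete, here is a minimal sketch that overlaps several blocking waits with concurrent.futures.ThreadPoolExecutor. The function fake_download is a hypothetical stand-in that uses time.sleep to simulate a slow network or disk call; a real program would put its actual I/O call there.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_download(task_id, delay=0.1):
    """Stand-in for a blocking I/O call; time.sleep releases the GIL."""
    time.sleep(delay)
    return task_id


task_ids = list(range(5))

# One operation at a time: total time is roughly 5 * delay
start = time.perf_counter()
sequential = [fake_download(t) for t in task_ids]
sequential_time = time.perf_counter() - start

# Five worker threads: the waits overlap, so total time is roughly 1 * delay
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=5) as pool:
    threaded = list(pool.map(fake_download, task_ids))
threaded_time = time.perf_counter() - start

print(f"Sequential: {sequential_time:.2f} s")
print(f"Threaded:   {threaded_time:.2f} s")
```

pool.map returns results in the order of the inputs, so the threaded version is a drop-in replacement for the sequential list comprehension here.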

Bottleneck 3: Inefficient Algorithms

Choosing the wrong algorithm is a fundamental performance issue. No amount of micro-optimization can fix an algorithm with a poor time complexity.

Problem: Finding an item in a list using a linear search. This is O(n). If the list has 1 million items, you might have to check all 1 million in the worst case.

    my_list = list(range(1000000))

    def find_item_slow(lst, item):
        for i, val in enumerate(lst):
            if val == item:
                return i
        return -1

Solution: Use a data structure with a faster lookup time. A set or dictionary provides O(1) (average case) lookup time.

    my_list = list(range(1000000))
    my_set = set(my_list)  # Create a set from the list once

    def find_item_fast(my_set, item):
        # Checking for membership in a set is extremely fast
        return item in my_set  # Returns True or False

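Performance Gain: For a list of 1 million items, the set lookup can be thousands of times faster than the list search, especially when the item is near the end of the list or missing. Note that the set only tells you whether the item is present; unlike the list search, it does not return the item's index.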
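If the position of the item is needed as well, a dictionary that maps each value to its index keeps the O(1) average lookup. The sketch below also times list and set membership for comparison; the names items, item_set, and index_of are illustrative, and the list is smaller than in the example above to keep the run short.

```python
import timeit

items = list(range(100_000))
item_set = set(items)                                   # membership only
index_of = {value: i for i, value in enumerate(items)}  # value -> index

target = items[-1]  # worst case for the linear search

list_time = timeit.timeit(lambda: target in items, number=20)
set_time = timeit.timeit(lambda: target in item_set, number=20)
print(f"list membership: {list_time:.6f} s for 20 lookups")
print(f"set membership:  {set_time:.6f} s for 20 lookups")

# Same contract as the linear search: the index, or -1 if absent
position = index_of.get(target, -1)
missing = index_of.get(-5, -1)
print(position, missing)
```

Building the set or dictionary costs O(n) once, so this approach makes sense when the same collection is searched many times.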

Summary: A Practical Workflow

- Profile Before Optimizing: Never guess where the bottleneck is. Use cProfile to find the slow function.

- Drill Down with Line Profiling: Use line_profiler to find the exact slow line within that function.

- Analyze the Cause: Is it a slow algorithm? A Python loop? I/O?

- Apply the Right Tool:

  - Numerical Loops? -> Use NumPy.

  - Algorithmic Problem? -> Choose a better data structure (set, dict) or algorithm.

  - I/O Problem? -> Use asyncio or threading.

  - General Python Slowness? -> For extreme cases, consider using libraries like Numba (for JIT compilation of numerical code) or even rewriting the bottleneck in Cython or C.