Of course! "Feature Engineering" is one of the most crucial skills in a data scientist's toolkit. It's the art and science of using your domain knowledge to create new input features (predictor variables) from your raw data to make machine learning models perform better.

Let's break down Python Feature Engineering into a comprehensive guide, covering the concepts, techniques, and essential libraries.

What is Feature Engineering?

In simple terms, feature engineering is the process of transforming raw data into features that better represent the underlying problem to the predictive models, resulting in improved model accuracy.

Think of it as cooking: your raw data is the raw ingredients (vegetables, meat, spices). Feature engineering is the process of washing, chopping, marinating, and combining them to create a delicious dish (a high-performing model).

Why is it so important?

- Improves Model Performance: Better features often lead to more accurate and robust models.

- Helps Models Learn: Well-engineered features make it easier for models to find patterns in the data.

- Reduces Complexity: Sometimes, a few well-chosen features can replace hundreds of less useful ones, simplifying the model.

- Adds Domain Knowledge: It's where you inject your understanding of the problem into the model.

The Core Concepts of Feature Engineering

Feature engineering can be broadly divided into several key areas:

- Handling Missing Values: Not all data is perfect. We need a strategy for missing data.

- Feature Transformation: Changing the scale or distribution of features.

- Feature Encoding: Converting categorical data into numerical data.

- Feature Creation (Deriving New Features): Creating new features from existing ones.

- Feature Selection: Selecting the most important features and discarding the rest.

Essential Python Libraries for Feature Engineering

You'll primarily use these libraries:

pandas: For data manipulation and analysis. It's the foundation for almost all feature engineering tasks.numpy: For numerical operations, often used in conjunction with pandas.scikit-learn: The go-to library for machine learning in Python. It provides powerful tools for scaling, encoding, and feature selection.seaborn&matplotlib: For data visualization, which is critical for understanding your data before and after engineering.

Handling Missing Values

Missing values can be a major problem for many ML algorithms. Here are common strategies:

- Deletion: Remove rows or columns with missing values.

- Imputation: Fill in the missing values with a statistical measure (mean, median, mode) or a constant.

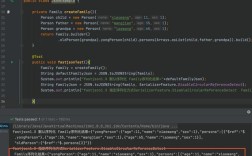

Example with pandas:

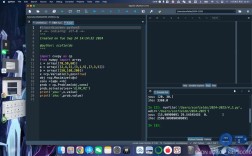

import pandas as pd

import numpy as np

# Create a sample DataFrame with missing values

data = {'Age': [25, 30, 35, np.nan, 40],

'Salary': [50000, 60000, np.nan, 80000, 90000],

'Department': ['HR', 'IT', 'HR', np.nan, 'IT']}

df = pd.DataFrame(data)

print("Original DataFrame:")

print(df)

# --- Strategy 1: Deletion ---

# Drop rows with any missing values

df_dropped_rows = df.dropna()

print("\nDataFrame after dropping rows with missing values:")

print(df_dropped_rows)

# Drop columns with any missing values

df_dropped_cols = df.dropna(axis=1)

print("\nDataFrame after dropping columns with missing values:")

print(df_dropped_cols)

# --- Strategy 2: Imputation ---

# Fill missing numerical values with the median

df['Age'].fillna(df['Age'].median(), inplace=True)

df['Salary'].fillna(df['Salary'].median(), inplace=True)

# Fill missing categorical values with the mode (most frequent value)

df['Department'].fillna(df['Department'].mode()[0], inplace=True)

print("\nDataFrame after imputation:")

print(df)

Feature Transformation

Many algorithms (like Linear Regression, SVM, KNN) perform better when numerical features are on a similar scale.

- Standardization (Z-score normalization): Rescales features to have a mean of 0 and a standard deviation of 1. Use when your data follows a Gaussian distribution.

z = (x - mean) / std_dev - Normalization (Min-Max scaling): Rescales features to a range between 0 and 1. Use when you don't have outliers or when you need a bounded range.

x_norm = (x - min) / (max - min)

Example with scikit-learn:

from sklearn.preprocessing import StandardScaler, MinMaxScaler

# Sample data

data = {'Feature1': [10, 20, 30, 40, 50],

'Feature2': [100, 200, 300, 400, 500]}

df = pd.DataFrame(data)

# --- Standardization ---

scaler_std = StandardScaler()

df_std = pd.DataFrame(scaler_std.fit_transform(df), columns=df.columns)

print("\nStandardized Data:")

print(df_std)

# --- Normalization ---

scaler_norm = MinMaxScaler()

df_norm = pd.DataFrame(scaler_norm.fit_transform(df), columns=df.columns)

print("\nNormalized Data:")

print(df_norm)

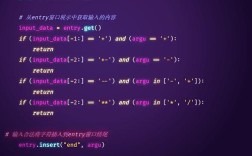

Feature Encoding

Machine learning models work with numbers, not text. We need to convert categorical features into numerical ones.

- One-Hot Encoding: Creates a new binary (0/1) column for each category. Ideal for nominal data (where order doesn't matter, e.g., 'Country', 'Department').

- Label Encoding: Assigns a unique integer to each category. Ideal for ordinal data (where order matters, e.g., 'Low', 'Medium', 'High').

Example with pandas and scikit-learn:

# Sample data

data = {'Color': ['Red', 'Green', 'Blue', 'Red'],

'Size': ['S', 'M', 'L', 'M']}

df = pd.DataFrame(data)

# --- One-Hot Encoding (using pandas) ---

df_one_hot = pd.get_dummies(df, columns=['Color', 'Size'], drop_first=True) # drop_first to avoid multicollinearity

print("\nOne-Hot Encoded Data:")

print(df_one_hot)

# --- Label Encoding (using scikit-learn) ---

from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

df['Size_Label'] = le.fit_transform(df['Size'])

print("\nLabel Encoded Data:")

print(df)

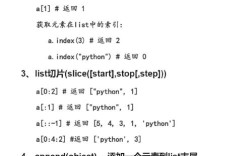

Feature Creation (Deriving New Features)

This is where creativity shines. You create new features based on domain knowledge or simple logic.

- Date/Time Features: Extracting day, month, year, day of the week from a timestamp.

- Binning/Discretization: Converting a continuous variable into categorical bins (e.g., age groups: 0-18, 19-35, 36+).

- Interaction Features: Combining two or more features (e.g.,

total_price = price * quantity).

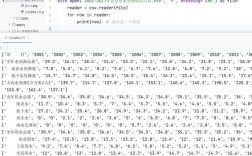

Example with pandas:

# Sample data with a date

data = {'Date': ['2025-01-15', '2025-02-20', '2025-03-10', '2025-04-05'],

'Sales': [100, 150, 120, 180]}

df = pd.DataFrame(data)

# Convert to datetime

df['Date'] = pd.to_datetime(df['Date'])

# --- Extract Date Features ---

df['Year'] = df['Date'].dt.year

df['Month'] = df['Date'].dt.month

df['DayOfWeek'] = df['Date'].dt.dayofweek # Monday=0, Sunday=6

print("\nDataFrame with new date features:")

print(df)

# --- Binning ---

df['Sales_Category'] = pd.cut(df['Sales'], bins=[0, 120, 160, np.inf], labels=['Low', 'Medium', 'High'])

print("\nDataFrame with binned sales:")

print(df)

Feature Selection

Not all features are useful. Too many features can lead to overfitting and increased computational cost. Feature selection helps you keep the most informative ones.

- Filter Methods: Use statistical tests (like correlation) to select features before modeling.

- Wrapper Methods: Use a specific machine learning model to evaluate the usefulness of feature subsets (e.g., Recursive Feature Elimination).

- Embedded Methods: Feature selection is built into the model training process (e.g., Lasso Regression, Random Forest feature importances).

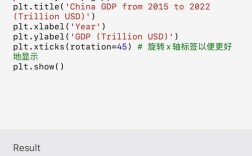

Example with scikit-learn (Filter Method - Correlation):

import seaborn as sns

import matplotlib.pyplot as plt

# Create a more complex DataFrame

data = {'A': np.random.rand(100),

'B': np.random.rand(100) * 2,

'C': np.random.rand(100) * 3,

'D': np.random.rand(100) * 4,

'Target': np.random.rand(100) * 5}

df_corr = pd.DataFrame(data)

# Add a highly correlated feature

df_corr['E'] = df_corr['Target'] * 0.9 + np.random.rand(100) * 0.1

# Calculate correlation matrix

corr_matrix = df_corr.corr()

# Plot heatmap

plt.figure(figsize=(10, 8))

sns.heatmap(corr_matrix, annot=True, cmap='coolwarm')'Correlation Matrix')

plt.show()

# Select features highly correlated with the target

target_corr = corr_matrix['Target'].sort_values(ascending=False)

print("\nFeatures sorted by correlation with Target:")

print(target_corr)

A Practical Feature Engineering Workflow

Here's a step-by-step process you can follow:

- Understand the Data & Problem: What are you trying to predict? What do the columns mean? (Domain knowledge is key!)

- Explore and Visualize: Use

df.info(),df.describe(), and plots (seaborn,matplotlib) to understand distributions, missing values, and relationships. - Handle Missing Values: Decide on a strategy (imputation or deletion) and apply it.

- Encode Categorical Variables: Use one-hot or label encoding as appropriate.

- Create New Features: Use your creativity and domain knowledge to derive new, potentially powerful features.

- Transform Numerical Features: Scale or normalize your numerical features if necessary.

- Select Relevant Features: Use correlation, model-based importance, or other techniques to reduce dimensionality.

- Validate: After building your model, always check its performance on a hold-out test set to ensure your engineering efforts actually improved things.

Important Considerations

- Data Leakage: Be extremely careful not to use information from the test set (or future data) to transform your training data. For example, you should

fityour scaler on the training data only, and thentransformboth the training and test data using that same fitted scaler. - Automation: Libraries like

Feature-engineandsklearn-compose.ColumnTransformercan help automate and streamline these pipelines. - It's an Iterative Process: Feature engineering is not a one-time task. You'll try something, build a model, see the results, and then go back to refine your features.