Of course! This is an excellent question because it touches on a common point of confusion for people starting with computer vision.

Let's break it down. The core idea is:

OpenCV is a general-purpose computer vision library, while a CNN (Convolutional Neural Network) is a type of deep learning model. OpenCV can run trained CNNs through its dnn module, but it is not a framework for defining and training them the way TensorFlow or PyTorch are.

Here’s a detailed guide covering everything from the relationship between them to practical code examples.

The Relationship: OpenCV vs. CNNs

Think of it like this:

- OpenCV (Open Source Computer Vision Library): It's your ultimate toolbox for image and video processing. It can read/write images, perform filtering, edge detection, geometric transformations, feature detection (like SIFT or ORB), and much more. It's been the go-to library for traditional computer vision for over a decade.

- CNN (Convolutional Neural Network): This is a powerful machine learning model architecture, specifically designed for processing grid-like data such as images. It's the "brain" behind most modern image recognition, object detection, and segmentation tasks. It learns features automatically from data instead of you manually defining them (like you would with an edge detector in OpenCV).
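To make "manually defining" a feature concrete, here is a minimal sketch that uses only NumPy. The helper name `filter2d_valid` and the toy image are illustrative assumptions, not OpenCV API. It slides a hand-written Sobel kernel over an image, which is the same sliding-window operation a convolutional layer performs; the difference is that a CNN learns the kernel values from data.

```python
import numpy as np


def filter2d_valid(image, kernel):
    """Slide `kernel` over a 2-D `image` (no padding) and return the responses."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float64)
    for y in range(out_h):
        for x in range(out_w):
            # Element-wise product of the window and the kernel, then sum.
            out[y, x] = np.sum(image[y:y + kh, x:x + kw] * kernel)
    return out


# Hand-written Sobel kernel that responds to vertical edges.
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float64)

# Toy 5x6 image: dark left half (0), bright right half (10).
image = np.zeros((5, 6), dtype=np.float64)
image[:, 3:] = 10.0

edges = filter2d_valid(image, sobel_x)
print(edges)  # each row is [0, 40, 40, 0]: strong response only at the edge
```

In OpenCV the same filter is available through functions such as cv2.Sobel or cv2.filter2D; the loop above is only meant to show what those calls compute.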

How do they work together?

-

Pre-processing with OpenCV: Before feeding an image into a CNN, you often need to prepare it. OpenCV is perfect for this. You might:

- Read an image from a file.

- Resize it to the input size the CNN expects.

- Convert its color format (e.g., from BGR to RGB).

- Normalize the pixel values.

-

Inference with OpenCV: OpenCV has a highly optimized

dnnmodule (cv2.dnn). This module can load pre-trained models (like those from TensorFlow, Caffe, or PyTorch) and perform "inference"—that is, use the trained CNN to make predictions on new data. This is incredibly fast and efficient. -

Post-processing with OpenCV: After the CNN makes a prediction, you might want to visualize the results. OpenCV is excellent for this. You can:

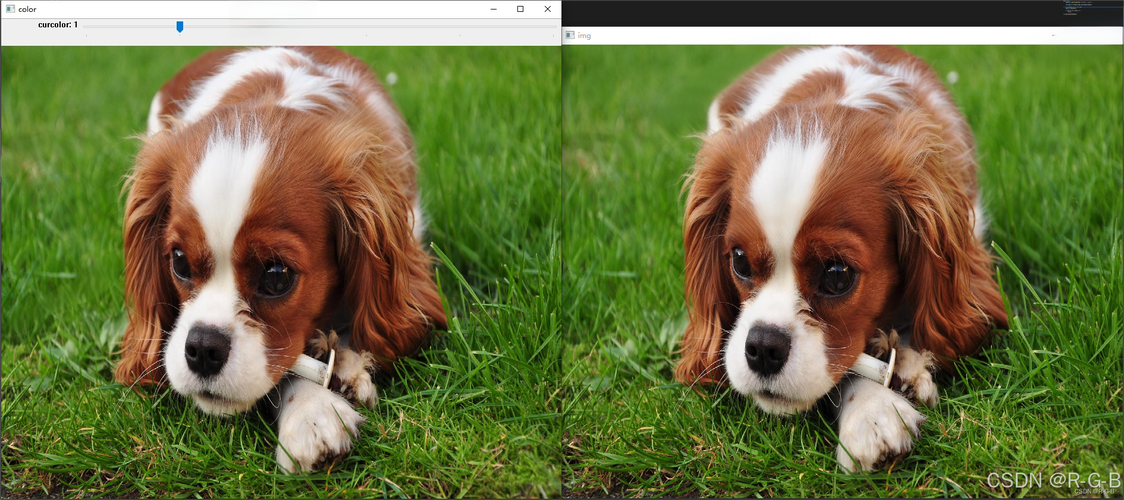

(图片来源网络,侵删)

(图片来源网络,侵删)- Draw bounding boxes around detected objects.

- Put text labels on the image.

- Overlay segmentation masks.
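The pre-processing steps listed above can be sketched with plain NumPy. This is a minimal illustration under stated assumptions: the function name `preprocess` and its parameters are hypothetical, resizing is left out, and a synthetic array stands in for the output of cv2.imread. In real code, cv2.resize and cv2.cvtColor (or cv2.dnn.blobFromImage) would do this work.

```python
import numpy as np


def preprocess(image_bgr, scale=1.0 / 255.0, mean=0.0, swap_rb=True):
    """Turn an HxWx3 uint8 BGR image into a 1x3xHxW float32 batch."""
    x = image_bgr.astype(np.float32)
    if swap_rb:
        x = x[:, :, ::-1]               # BGR -> RGB: reverse the channel axis
    x = (x - mean) * scale              # subtract the mean, then scale
    x = np.transpose(x, (2, 0, 1))      # HWC -> CHW (channels first)
    return np.ascontiguousarray(x[np.newaxis, ...])  # add the batch dimension


# Stand-in for an image loaded with cv2.imread: 2x2 pixels, pure blue in BGR.
image = np.zeros((2, 2, 3), dtype=np.uint8)
image[:, :, 0] = 255

batch = preprocess(image)
print(batch.shape)  # (1, 3, 2, 2)
```

After the channel swap, the blue values end up in the last channel plane of the batch. Whether a given model wants RGB or BGR, and which mean and scale it expects, depends on how it was trained, so those parameters should come from the model's documentation.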

Practical Workflow: Using OpenCV with a Pre-trained CNN

This is the most common and practical use case. We'll use OpenCV to load a pre-trained model (like MobileNet) for object detection.

Step 1: Install Necessary Libraries

You'll need OpenCV, and for downloading the model, you might want requests.

pip install opencv-python numpy requests

Step 2: Download a Pre-trained Model

For this example, we'll use the Caffe version of the MobileNet SSD model. It's fast and great for real-time applications. You need two files:

- The model architecture file (.prototxt).

- The pre-trained model weights (.caffemodel).

Here's a Python script to download them if you don't have them:

    import os

    import requests


    def download_file(url, filename):
        """Stream the file at `url` to `filename` on disk."""
        print(f"Downloading {filename}...")
        # The timeout keeps the script from hanging on a stalled connection.
        response = requests.get(url, stream=True, timeout=60)
        response.raise_for_status()
        with open(filename, "wb") as f:
            for chunk in response.iter_content(chunk_size=8192):
                f.write(chunk)
        print(f"Downloaded {filename} successfully.")


    # Model files
    prototxt_url = "https://raw.githubusercontent.com/chuanqi305/MobileNet-SSD/master/deploy.prototxt"
    caffemodel_url = "https://github.com/chuanqi305/MobileNet-SSD/raw/master/mobilenet_iter_73000.caffemodel"

    if not os.path.exists("deploy.prototxt"):
        download_file(prototxt_url, "deploy.prototxt")

    if not os.path.exists("mobilenet_iter_73000.caffemodel"):
        download_file(caffemodel_url, "mobilenet_iter_73000.caffemodel")

Step 3: Load the Model and Perform Inference

Now, let's write the main script to load an image, run it through the CNN, and display the results.

    import cv2
    import numpy as np

    # --- 1. Load the pre-trained model ---
    # The .prototxt file describes the architecture of the network.
    # The .caffemodel file contains the trained weights.
    net = cv2.dnn.readNetFromCaffe("deploy.prototxt", "mobilenet_iter_73000.caffemodel")

    # --- 2. Load and prepare the image ---
    image = cv2.imread("example.jpg")  # Replace with your image path
    if image is None:
        raise SystemExit("Error: Could not load image. Check the path.")

    # Get the image dimensions
    (h, w) = image.shape[:2]

    # --- 3. Pre-process the image for the CNN ---
    # Create a "blob" from the image: a 4D NumPy array of shape (1, 3, 300, 300).
    # blobFromImage resizes the image, subtracts the mean, then multiplies by scalefactor.
    # - size=(300, 300): the input size this MobileNet SSD expects.
    # - mean=(127.5, 127.5, 127.5): subtracted from every channel.
    # - scalefactor=0.007843 (about 1/127.5): maps pixel values to roughly [-1, 1].
    # - swapRB is left at its default (False), so the blob keeps OpenCV's BGR channel order.
    blob = cv2.dnn.blobFromImage(image, scalefactor=0.007843, size=(300, 300),
                                 mean=(127.5, 127.5, 127.5))

    # --- 4. Set the blob as the input and perform forward pass (inference) ---
    print("Performing object detection...")
    net.setInput(blob)
    detections = net.forward()

    # --- 5. Post-process the detections and draw on the image ---
    # detections has shape (1, 1, N, 7). Each row is
    # [image_id, class_id, confidence, x1, y1, x2, y2], with coordinates in [0, 1].
    # Confidence threshold: only consider detections with a confidence higher than this.
    confidence_threshold = 0.2

    # Loop over the detections
    for i in range(detections.shape[2]):
        confidence = detections[0, 0, i, 2]

        # Filter out weak detections
        if confidence > confidence_threshold:
            class_id = int(detections[0, 0, i, 1])

            # Scale the normalized box back to the original image size
            box = detections[0, 0, i, 3:7] * np.array([w, h, w, h])
            (startX, startY, endX, endY) = box.astype(int).tolist()

            # Draw the bounding box on the image
            cv2.rectangle(image, (startX, startY), (endX, endY), (0, 255, 0), 2)

            # Draw the label (class index and confidence score)
            label = f"Class {class_id}: {confidence:.2f}"
            y = startY - 15 if startY - 15 > 15 else startY + 15
            cv2.putText(image, label, (startX, y), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)

    # --- 6. Display the output image ---
    cv2.imshow("Object Detection", image)
    cv2.waitKey(0)
    cv2.destroyAllWindows()

    # Optional: Save the result
    cv2.imwrite("output.jpg", image)
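The post-processing loop can also be written as a small function and tried without a model. The sketch below is an illustration under stated assumptions: `decode_detections` is a hypothetical helper, the detections array is made up, and the `CLASSES` list assumes the model was trained on PASCAL VOC with the label order commonly used for MobileNet SSD. Check the label order against the model you actually download.

```python
import numpy as np

# Assumed label order for a PASCAL VOC-trained MobileNet SSD (index 0 is background).
CLASSES = ["background", "aeroplane", "bicycle", "bird", "boat", "bottle",
           "bus", "car", "cat", "chair", "cow", "diningtable", "dog",
           "horse", "motorbike", "person", "pottedplant", "sheep", "sofa",
           "train", "tvmonitor"]


def decode_detections(detections, width, height, threshold=0.2):
    """Convert a (1, 1, N, 7) SSD output into (label, confidence, box) tuples.

    Each row is [image_id, class_id, confidence, x1, y1, x2, y2],
    with box coordinates normalized to [0, 1].
    """
    results = []
    for row in detections[0, 0]:
        class_id = int(row[1])
        confidence = float(row[2])
        if confidence <= threshold:
            continue  # skip weak detections
        # Scale to pixel coordinates, round, and keep the box inside the image.
        box = np.rint(row[3:7] * np.array([width, height, width, height]))
        box = np.clip(box, 0, [width - 1, height - 1, width - 1, height - 1])
        results.append((CLASSES[class_id], confidence,
                        tuple(int(v) for v in box)))
    return results


# Made-up output with two candidates: one confident "person", one weak "car".
fake = np.array([[[[0, 15, 0.90, 0.10, 0.20, 0.50, 0.80],
                   [0, 7, 0.05, 0.00, 0.00, 1.00, 1.00]]]], dtype=np.float32)

results = decode_detections(fake, width=200, height=100)
print(results)
```

With the real script, `detections` from net.forward() and the image's `w` and `h` would take the place of the made-up array, and the returned label could replace the class index in the drawn text.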

Training a CNN from Scratch (with TensorFlow/Keras)

While OpenCV's dnn module is great for inference, it is not designed for training neural networks. For that, you need a dedicated deep learning framework like TensorFlow/Keras or PyTorch.

Here’s a simple example of how you would define and train a CNN using TensorFlow/Keras. This shows the "creation" part of the process.
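Before reading the script, it can help to work out by hand what the network in it looks like. The sketch below is plain arithmetic, not Keras code. The helper names are hypothetical, and it assumes the Keras defaults used in this example: 3x3 convolutions with stride 1 and no padding, and 2x2 max pooling.

```python
def conv_out(size, kernel=3, stride=1, padding=0):
    """Output width/height of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, window=2):
    """Output width/height of non-overlapping max pooling (floor division)."""
    return size // window


def conv_params(kernel, in_channels, out_channels):
    """Weights plus one bias per output channel."""
    return kernel * kernel * in_channels * out_channels + out_channels


def dense_params(inputs, units):
    """Weights plus one bias per unit."""
    return inputs * units + units


size = 32                 # CIFAR-10 images are 32x32x3
size = conv_out(size)     # Conv2D(32): 30x30x32
size = pool_out(size)     # MaxPooling2D: 15x15x32
size = conv_out(size)     # Conv2D(64): 13x13x64
size = pool_out(size)     # MaxPooling2D: 6x6x64
size = conv_out(size)     # Conv2D(64): 4x4x64
flat = size * size * 64   # Flatten: 1024 values

total = (conv_params(3, 3, 32) + conv_params(3, 32, 64) + conv_params(3, 64, 64)
         + dense_params(flat, 64) + dense_params(64, 10))
print(flat, total)  # 1024 122570
```

These numbers should match the output shapes and the total parameter count that model.summary() prints for the model defined next.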

    import tensorflow as tf
    from tensorflow.keras import layers, models

    # --- 1. Define the CNN Model Architecture ---
    # A simple CNN for classifying images into 10 categories (e.g., CIFAR-10)
    model = models.Sequential([
        layers.Input(shape=(32, 32, 3)),
        # Convolutional Base
        layers.Conv2D(32, (3, 3), activation='relu'),
        layers.MaxPooling2D((2, 2)),
        layers.Conv2D(64, (3, 3), activation='relu'),
        layers.MaxPooling2D((2, 2)),
        layers.Conv2D(64, (3, 3), activation='relu'),
        # Classifier Head
        layers.Flatten(),
        layers.Dense(64, activation='relu'),
        layers.Dense(10)  # 10 output units (raw logits) for 10 classes
    ])

    # --- 2. Compile the Model ---
    # Configure the model for training. from_logits=True matches the final
    # Dense layer, which has no softmax activation.
    model.compile(optimizer='adam',
                  loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
                  metrics=['accuracy'])
    model.summary()

    # --- 3. Load and Prepare the Data ---
    # Using the built-in CIFAR-10 dataset
    (train_images, train_labels), (test_images, test_labels) = tf.keras.datasets.cifar10.load_data()

    # Normalize pixel values to be between 0 and 1
    train_images, test_images = train_images / 255.0, test_images / 255.0

    # --- 4. Train the Model ---
    print("\nTraining the model...")
    history = model.fit(train_images, train_labels, epochs=10,
                        validation_data=(test_images, test_labels))

    # --- 5. Evaluate the Model ---
    print("\nEvaluating the model...")
    test_loss, test_acc = model.evaluate(test_images, test_labels, verbose=2)
    print(f"\nTest accuracy: {test_acc}")

    # --- 6. Save the Trained Model ---
    # Note: OpenCV's dnn module cannot read a .keras file directly. To run this
    # model with cv2.dnn, first convert it to a format it supports, such as ONNX.
    model.save('my_cnn_model.keras')
    print("\nModel saved as 'my_cnn_model.keras'")
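Because the final Dense layer has no activation, the trained model outputs raw logits rather than probabilities. The sketch below shows one way to turn such an output into a class prediction with NumPy. The logits are made up for illustration, and `CIFAR10_CLASSES` follows the standard CIFAR-10 label order; in practice the logits would come from model.predict(...).

```python
import numpy as np

# Standard CIFAR-10 label order (indices 0 to 9).
CIFAR10_CLASSES = ["airplane", "automobile", "bird", "cat", "deer",
                   "dog", "frog", "horse", "ship", "truck"]


def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


# Made-up logits for a single image (shape: batch of 1, 10 classes).
logits = np.array([[0.5, -1.2, 0.1, 3.0, 0.0, 2.0, -0.3, 0.2, -2.0, 0.4]])

probs = softmax(logits)
best = int(np.argmax(probs[0]))
print(CIFAR10_CLASSES[best], round(float(probs[0, best]), 3))
```

The argmax of the logits and of the probabilities is always the same, so softmax is only needed when you want a confidence value alongside the predicted class.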

Summary: When to Use What

| Task | Best Tool | Why? |
|---|---|---|
| Reading/Writing Images | OpenCV (cv2.imread, cv2.imwrite) | Fast and supports a wide variety of image formats. |
| Image Pre-processing | OpenCV (cv2.resize, cv2.cvtColor, etc.) | Provides the functions needed to prepare images for a CNN. |
| CNN Model Training | TensorFlow/Keras, PyTorch | These frameworks are built from the ground up to define, train, and optimize neural networks. |
| CNN Model Inference | OpenCV (cv2.dnn) or TensorFlow/Keras | OpenCV: lightweight, needs no training framework at runtime, well suited to deployment. TensorFlow/Keras: more flexible, easier to integrate into a TF pipeline. |
| Visualizing Results | OpenCV (cv2.rectangle, cv2.putText) | Simple, fast drawing functions for annotating images and video frames. |

Key Takeaway

- Use OpenCV for all the "around" tasks: handling images, preprocessing them, and visualizing the results.

- Use a dedicated deep learning framework like TensorFlow/Keras or PyTorch to define, train, and save your CNN models.

- Use OpenCV's dnn module to load your trained model, exported to a format it supports (such as ONNX), and run it efficiently for inference in your applications.