Of course! "Python timeseries" is a broad topic, but it's a fundamental skill in data science, finance, and many scientific fields. Here’s a comprehensive guide covering the essential concepts, libraries, and a practical workflow.

What is a Time Series?

A time series is a sequence of data points recorded or indexed in time order. The most common example is a stock's price over time, but it can be anything that's measured over intervals:

- Daily, hourly, or minute-by-minute weather temperature

- Monthly sales figures

- Yearly population counts

- Sensor readings from an IoT device

- Audio signals

The key characteristic is that the order of the data points matters.
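A minimal sketch of what this looks like in Pandas, using made-up daily temperature readings (the values and dates are illustrative assumptions). The timestamps become the index, and sorting by that index restores chronological order before any order-dependent operation such as shift, which looks up "the previous observation".

```python
import pandas as pd

# Illustrative daily temperatures, deliberately recorded out of order
readings = pd.Series(
    [21.5, 19.0, 20.2],
    index=pd.to_datetime(['2024-01-03', '2024-01-01', '2024-01-02']),
    name='temperature',
)

# Put the observations back in time order
readings = readings.sort_index()

# "Yesterday's value" only makes sense once the series is ordered
readings_prev = readings.shift(1)

print(readings)
print(readings_prev)
```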

Core Concepts in Time Series Analysis

Before diving into code, it's crucial to understand these concepts:

- Time-Based Indexing: The data should have a time-based index (e.g., a DatetimeIndex in Pandas). This enables time-specific operations such as date-based slicing and resampling.

- Trend: The long-term progression of the series (e.g., increasing sales over several years).

- Seasonality: Periodic and cyclical patterns that repeat at fixed intervals (e.g., ice cream sales peaking every summer).

- Stationarity: A time series is stationary if its statistical properties (mean, variance, autocorrelation) are constant over time. Classic models such as ARIMA assume the series is stationary, possibly after differencing. Most real-world series are not, so they are transformed first (e.g., by differencing or taking logarithms).

- Autocorrelation: The correlation of a time series with lagged copies of itself (its own past values). For example, today's stock price is likely correlated with yesterday's price.
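Several of these concepts map directly onto Pandas operations. The sketch below builds a synthetic monthly series (an assumption for illustration: linear trend, a 12-month sine-wave seasonality, and a little noise), then shows date-based slicing, resampling, a rolling window, and autocorrelation at lag 1 and lag 12.

```python
import numpy as np
import pandas as pd

# Synthetic monthly series: trend + yearly seasonality + noise
rng = np.random.default_rng(0)
idx = pd.date_range('2015-01-01', periods=72, freq='MS')
t = np.arange(len(idx))
values = 100 + 2 * t + 20 * np.sin(2 * np.pi * t / 12) + rng.normal(0, 3, len(idx))
series = pd.Series(values, index=idx)

# Time-based indexing: select one calendar year with a string
year_2017 = series.loc['2017']

# Resampling: monthly values -> yearly means ('YS' = year start)
yearly = series.resample('YS').mean()

# Rolling window: a 12-month moving average smooths out the seasonality
trend_estimate = series.rolling(window=12).mean()

# Autocorrelation at lag 1 (previous month) and lag 12 (same month last year)
print(series.autocorr(lag=1), series.autocorr(lag=12))
print(yearly)
```

Because each year averages over a full seasonal cycle, the yearly means rise steadily with the trend while the sine-wave seasonality cancels out.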

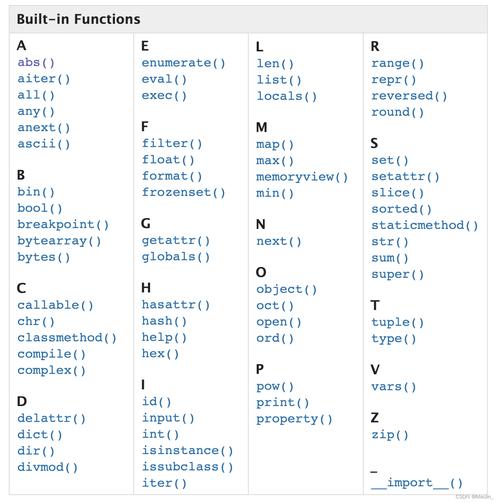

Key Python Libraries for Time Series

Here are the main tools you'll use, with a brief explanation of each.

| Library | Purpose | Key Features |
|---|---|---|
| Pandas | Core Data Manipulation | The foundation. Provides DatetimeIndex, powerful time-series slicing, resampling, rolling windows, and basic plotting. |
| NumPy | Numerical Computing | The engine under Pandas. Handles the n-dimensional arrays used to store time series data efficiently. |
| Matplotlib & Seaborn | Data Visualization | Matplotlib is the foundational plotting library. Seaborn provides high-level, aesthetically pleasing statistical plots. |
| Statsmodels | Statistical Modeling | The go-to library for classical time series analysis. Includes ARIMA, SARIMAX, seasonal decomposition, and statistical tests for stationarity. |
| Scikit-learn | Machine Learning | Used for applying machine learning models (like Random Forests or Gradient Boosting) to time series data, often after feature engineering. |
| Prophet | Forecasting (by Meta/FB) | A high-level library designed for business time series with strong seasonality and holiday effects. It needs little tuning and is designed to be robust to missing data and shifts in the trend. |
| Darts | Advanced Forecasting | A modern library that provides a unified API for many forecasting models, from classical methods to deep learning models such as N-BEATS, TFT, and Transformer. |

A Practical Time Series Workflow: From Data to Forecast

Let's walk through a complete example using Pandas, Matplotlib, statsmodels, and pmdarima. We'll analyze and forecast a classic dataset: the monthly totals of international airline passengers.

Step 1: Setup and Data Loading

First, make sure you have the necessary libraries installed:

```bash
pip install pandas numpy matplotlib statsmodels
```

Now, let's load the data. statsmodels can download this dataset from the Rdatasets collection (an internet connection is required).

```python
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
import statsmodels.api as sm

# Load the dataset (downloaded from the Rdatasets collection)
# The dataset is a classic example from Box & Jenkins (1976)
# It contains monthly totals of international airline passengers from 1949 to 1960.
df = sm.datasets.get_rdataset('AirPassengers').data

# Convert to a proper time series object.
# The 'time' column holds fractional years (e.g., 1949.0833 for February 1949),
# so build a month-start DatetimeIndex directly instead of parsing it.
df.index = pd.date_range(start='1949-01-01', periods=len(df), freq='MS')
df.index.name = 'date'
df = df.drop(columns='time')

# Rename the value column for clarity
df = df.rename(columns={'value': 'passengers'})

print(df.head())
print("\nData Info:")
df.info()
```

Output:

```
            passengers
date
1949-01-01         112
1949-02-01         118
1949-03-01         132
1949-04-01         129
1949-05-01         121

Data Info:
<class 'pandas.core.frame.DataFrame'>
DatetimeIndex: 144 entries, 1949-01-01 to 1960-12-01
Freq: MS
Data columns (total 1 columns):
 #   Column      Non-Null Count  Dtype
---  ------      --------------  -----
 0   passengers  144 non-null    int64
dtypes: int64(1)
memory usage: 2.2 KB
```

Notice the DatetimeIndex: it is what enables Pandas' time-based slicing, resampling, and rolling-window operations.
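If you need to derive the dates from the time column itself, note that in the Rdatasets CSV that statsmodels downloads, time holds fractional years (for example, 1949.0833 for February 1949) rather than "YYYY-MM" strings, which is why a format='%Y-%m' parse fails. A small sketch of the conversion, assuming the encoding year + (month - 1) / 12 used by monthly R time series; the helper name fractional_year_to_timestamp is hypothetical.

```python
import numpy as np
import pandas as pd

def fractional_year_to_timestamp(time_values):
    """Convert fractional years (e.g. 1949.0833) to month-start timestamps.

    Assumes the encoding year + (month - 1) / 12 (hypothetical helper).
    """
    time_values = np.asarray(time_values, dtype=float)
    # Small epsilon guards against values like 1949.9999999 from float error
    years = np.floor(time_values + 1e-9).astype(int)
    # Round to the nearest month to absorb floating-point error
    months = np.rint((time_values - years) * 12).astype(int) + 1
    return pd.to_datetime(pd.DataFrame({'year': years, 'month': months, 'day': 1}))

dates = fractional_year_to_timestamp([1949.0, 1949.0833333, 1960.9166667])
print(dates)
```

With the loaded data, df['time'] would be passed in place of the literal list.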

Step 2: Visualization and Exploration

Always plot your data first to get a visual understanding.

```python
# Plot the time series
plt.figure(figsize=(12, 6))
plt.plot(df.index, df['passengers'], label='Monthly Passengers')
plt.title('International Airline Passengers (1949-1960)')
plt.xlabel('Date')
plt.ylabel('Number of Passengers')
plt.legend()
plt.grid(True)
plt.show()
```

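You will immediately see an upward trend and a clear yearly seasonality (the peaks repeat every 12 months). The seasonal swings also grow as the series rises, which suggests a multiplicative rather than additive structure.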
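A quick numeric companion to the plot is a 12-month rolling mean and standard deviation: a rising mean reflects the trend, and a rising standard deviation reflects the growing seasonal swings. The sketch uses a synthetic multiplicative series as a stand-in for the passenger data (an assumption, so it runs offline); with the real data you would use df['passengers'] instead.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in: exponential growth times a yearly seasonal factor
idx = pd.date_range('1949-01-01', periods=144, freq='MS')
t = np.arange(len(idx))
series = pd.Series(100 * np.exp(0.01 * t) * (1 + 0.2 * np.sin(2 * np.pi * t / 12)), index=idx)

# 12-month rolling statistics (the first 11 values are NaN)
rolling_mean = series.rolling(window=12).mean()
rolling_std = series.rolling(window=12).std()

# Compare the first and last complete windows
print(rolling_mean.dropna().iloc[[0, -1]])
print(rolling_std.dropna().iloc[[0, -1]])
```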

Step 3: Decomposing the Time Series

We can use statsmodels to break the series into its constituent parts: trend, seasonality, and residuals (noise).

```python
# Decompose the time series.
# period=12 for yearly seasonality; 'multiplicative' because the seasonal
# swings grow with the level of the series.
decomposition = sm.tsa.seasonal_decompose(df['passengers'], model='multiplicative', period=12)

# Plot the decomposition
fig = decomposition.plot()
fig.set_size_inches(12, 8)
plt.show()
```

The plot will show four subplots:

- Observed: The original data.

- Trend: The long-term progression, showing a clear upward trend.

- Seasonal: The repeating yearly pattern.

- Residual: The "noise" left over after removing the trend and seasonality.

Step 4: Checking for Stationarity

Many models require stationary data. We can use the Augmented Dickey-Fuller (ADF) test from statsmodels.

- Null Hypothesis (H0): The time series is non-stationary.

- Alternative Hypothesis (H1): The time series is stationary.

If the p-value is less than a significance level (e.g., 0.05), we reject the null hypothesis and conclude the series is stationary.

```python
from statsmodels.tsa.stattools import adfuller

def check_stationarity(timeseries):
    result = adfuller(timeseries, autolag='AIC')
    print('ADF Statistic: %f' % result[0])
    print('p-value: %f' % result[1])
    print('Critical Values:')
    for key, value in result[4].items():
        print('\t%s: %.3f' % (key, value))

print("Stationarity Check on Original Data:")
check_stationarity(df['passengers'])
```

Output:

```
ADF Statistic: 0.815369
p-value: 0.991880
Critical Values:
	1%: -3.482
	5%: -2.884
	10%: -2.579
```

The p-value is very high (0.99), so we fail to reject the null hypothesis: there is no evidence against a unit root, and we treat the series as non-stationary.

Step 5: Making the Data Stationary (Differencing)

A common technique to make a series stationary is differencing—subtracting the previous observation from the current one.
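In Pandas, diff(k) is simply the series minus a copy of itself shifted by k steps. A small sketch with made-up values, including the seasonal version (lag 12 for monthly data), which compares each month with the same month of the previous year:

```python
import numpy as np
import pandas as pd

idx = pd.date_range('2020-01-01', periods=24, freq='MS')
series = pd.Series(np.arange(24, dtype=float) ** 2, index=idx)  # illustrative values

# First-order difference: x_t - x_{t-1} (first value is NaN)
first_diff = series.diff()

# Seasonal difference: x_t - x_{t-12} (first 12 values are NaN)
seasonal_diff = series.diff(12)

# Both match an explicit subtraction of a shifted copy
print(first_diff.equals(series - series.shift(1)))
print(seasonal_diff.equals(series - series.shift(12)))
```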

```python
# First-order differencing: x_t - x_{t-1} (the first value becomes NaN)
df['passengers_diff'] = df['passengers'].diff()

# Plot the differenced data
plt.figure(figsize=(12, 6))
plt.plot(df.index, df['passengers_diff'], label='Differenced Passengers')
plt.title('Differenced Monthly Passengers')
plt.xlabel('Date')
plt.ylabel('Difference')
plt.legend()
plt.grid(True)
plt.show()

# Check stationarity again (drop the leading NaN first)
print("\nStationarity Check on Differenced Data:")
check_stationarity(df['passengers_diff'].dropna())
```

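The differenced series now fluctuates around zero, so the trend is gone, but the yearly pattern is still visible and its swings grow over time. Check the ADF p-value rather than assuming stationarity: for this series, first differencing alone may leave the p-value close to the 0.05 threshold. Common next steps are a log transform (to stabilize the growing variance) and seasonal differencing at lag 12, which is what D=1 does in the model below.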

Step 6: Forecasting with a Model (ARIMA Example)

ARIMA (AutoRegressive Integrated Moving Average) is a classic model. The "I" stands for "Integrated," which refers to the differencing step we just performed.

We'll use auto_arima from the pmdarima library, which searches over candidate orders (p, d, q), plus seasonal orders when seasonal=True, and keeps the model with the lowest information criterion (AIC by default).

```bash
pip install pmdarima
```

```python
from pmdarima import auto_arima

# Use auto_arima to find the best seasonal ARIMA model.
# We pass the original (undifferenced) series: d=1 and D=1 tell the model
# to apply one regular and one seasonal difference internally, and
# auto_arima searches over the remaining orders. m=12 sets the season length.
model = auto_arima(df['passengers'],
                   seasonal=True,
                   m=12,
                   d=1,  # one non-seasonal difference, applied inside the model
                   D=1,  # one seasonal difference at lag m=12
                   trace=True,
                   error_action='ignore',
                   suppress_warnings=True)

print(model.summary())
```

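auto_arima prints each candidate it tries (because trace=True) and returns the best model it found. The selected orders can vary with the search settings and library version; for reference, the classic "airline model" that Box and Jenkins fit to the logarithm of this series is a seasonal ARIMA(0,1,1)(0,1,1)12.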

Step 7: Evaluating the Forecast

Now, let's hold out the last 12 months, re-fit the model on the remaining data, and compare its predictions with the actual values.

```python
# Split data into training and testing sets (no shuffling: order matters)
# Use the last 12 months for testing
train = df['passengers'].iloc[:-12]
test = df['passengers'].iloc[-12:]

# Re-fit the model on the training data, with the same differencing as before
final_model = auto_arima(train, seasonal=True, m=12, d=1, D=1,
                         trace=False, error_action='ignore', suppress_warnings=True)

# Make predictions for the 12 held-out months
forecast = final_model.predict(n_periods=12)

# Align the forecast with the test dates (works whether predict returns
# a NumPy array or a pandas Series)
forecast_df = pd.DataFrame({'Forecast': np.asarray(forecast)}, index=test.index)

# Plot the results
plt.figure(figsize=(12, 6))
plt.plot(train.index, train, label='Training Data')
plt.plot(test.index, test, label='Actual Test Data')
plt.plot(forecast_df.index, forecast_df['Forecast'], label='Forecast', color='red')
plt.title('Airline Passengers Forecast vs Actual')
plt.xlabel('Date')
plt.ylabel('Number of Passengers')
plt.legend()
plt.grid(True)
plt.show()
```

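You should see the red forecast line closely follow the actual test data (the orange line with Matplotlib's default colors), which suggests the model captures the trend and seasonality well. A plot is only a visual check, though; error metrics quantify how close the forecast is.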
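A minimal sketch of common forecast error metrics in plain NumPy: mean absolute error (MAE), root mean squared error (RMSE), and mean absolute percentage error (MAPE). The helper name forecast_errors and the numbers below are illustrative assumptions.

```python
import numpy as np

def forecast_errors(actual, predicted):
    """Hypothetical helper: return MAE, RMSE and MAPE (in percent)."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    errors = actual - predicted
    mae = np.mean(np.abs(errors))
    rmse = np.sqrt(np.mean(errors ** 2))
    mape = np.mean(np.abs(errors / actual)) * 100  # assumes no zeros in actual
    return mae, rmse, mape

# Illustrative numbers only
mae, rmse, mape = forecast_errors([100, 200, 300], [110, 190, 330])
print(round(mae, 3), round(rmse, 3), round(mape, 3))
```

With the variables from Step 7 you could call forecast_errors(test, forecast_df['Forecast']) and compare the result with a seasonal-naive baseline such as forecast_errors(test, train.iloc[-12:].values), which simply repeats the last 12 training months. A model worth keeping should beat that baseline.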

Advanced Topics

- Deep Learning for Time Series: For complex patterns, models like LSTMs (Long Short-Term Memory networks) and Transformers can be very effective, though they usually need more data and tuning. Libraries like Darts, TensorFlow, and PyTorch are used for this.

- Feature Engineering: Create new features from the time index, such as:

```python
df['month'] = df.index.month
df['year'] = df.index.year
df['day_of_week'] = df.index.dayofweek  # only informative for daily or finer data
```

These can be fed into machine learning models like scikit-learn's RandomForestRegressor.

- Prophet for Business Forecasts: If you have business data with holidays and known events, Prophet is simple to set up and handles these effects directly.

```python
# Requires: pip install prophet
from prophet import Prophet

# Prophet expects two columns: 'ds' (dates) and 'y' (values)
df_prophet = (df.reset_index()
                .rename(columns={'date': 'ds', 'passengers': 'y'})[['ds', 'y']])

model = Prophet(seasonality_mode='multiplicative')
model.fit(df_prophet)

# Extend 12 months past the data; 'MS' matches the month-start dates
future = model.make_future_dataframe(periods=12, freq='MS')
forecast = model.predict(future)

model.plot(forecast)
plt.show()
```
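The feature-engineering idea can be sketched end to end with scikit-learn. This example uses a synthetic monthly stand-in series (an assumption), builds calendar features plus lagged values of the target, and trains a RandomForestRegressor on a time-ordered split so the model never sees the future. The lag choices and hyperparameters are illustrative, not tuned.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

# Synthetic monthly stand-in series
idx = pd.date_range('1949-01-01', periods=144, freq='MS')
t = np.arange(len(idx))
y = pd.Series(100 * np.exp(0.01 * t) * (1 + 0.2 * np.sin(2 * np.pi * t / 12)), index=idx)

# Calendar features plus lagged values of the target (first 12 rows lack lag_12)
features = pd.DataFrame({
    'month': y.index.month,
    'year': y.index.year,
    'lag_1': y.shift(1),
    'lag_12': y.shift(12),
}, index=y.index).dropna()
target = y.loc[features.index]

# Time-ordered split: train on the past, test on the final 12 months
X_train, X_test = features.iloc[:-12], features.iloc[-12:]
y_train, y_test = target.iloc[:-12], target.iloc[-12:]

model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(X_train, y_train)
predictions = pd.Series(model.predict(X_test), index=X_test.index)
print(predictions.head())
```

One design caveat: tree ensembles cannot predict values outside the range seen in training, so on a steadily rising series they tend to under-forecast; modelling differences or ratios instead of raw levels is a common workaround.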