Of course! Let's break down what nslackssvm is in the Python ecosystem.

What is nslackssvm?

nslackssvm is a Python library that implements a specific type of Support Vector Machine (SVM) algorithm.

The name itself gives us the key clues:

- nslacks: Stands for Non-Equal Slacks. This refers to a modification of the standard SVM optimization problem where the "slack variables" (which allow for some misclassification) are not treated equally for all data points. This is particularly useful for handling imbalanced datasets, where one class is much more common than the other.

- svm: Stands for Support Vector Machine, a powerful and widely used supervised learning model for classification and regression.

In short, nslackssvm provides an implementation of a C-SVM with Non-Equal Slacks, designed to be more effective when your classes are imbalanced.

The Problem: Why Use Non-Equal Slacks?

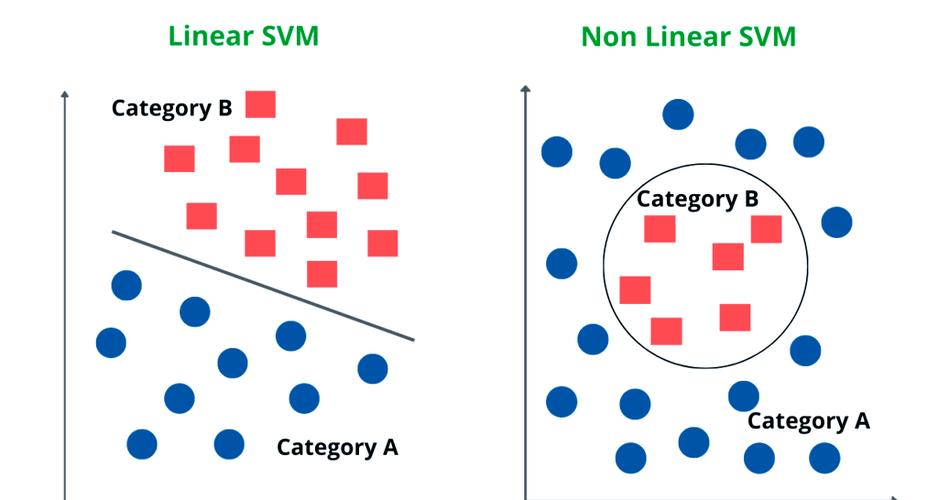

Standard SVMs use a single hyperparameter C to control the trade-off between maximizing the margin and minimizing the classification error. This C value applies to all data points equally.

Imagine you have a dataset with 99 "Normal" transactions and 1 "Fraud" transaction. A standard SVM might misclassify the single "Fraud" example to achieve a larger margin, because the penalty for that one error is small compared to the benefit of a wider margin separating the 99 "Normal" points.

Non-Equal Slacks solve this by allowing you to assign different penalty costs (C) to different classes. You can set a very high cost for misclassifying the rare "Fraud" class and a lower cost for misclassifying the common "Normal" class. This forces the model to pay much more attention to correctly classifying the minority class.
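Written out, the difference between the two objectives is small. The block below shows the textbook soft-margin formulation and its class-weighted variant as a sketch. The symbols $C_{+}$ and $C_{-}$ are notation chosen here for the per-class costs, and it is an assumption that nslackssvm solves exactly this problem.

```latex
% Standard soft-margin SVM: one cost C for every slack variable
\min_{w,\,b,\,\xi}\; \frac{1}{2}\lVert w \rVert^2 + C \sum_{i=1}^{n} \xi_i
\quad \text{s.t.} \quad y_i\,(w^\top x_i + b) \ge 1 - \xi_i,\;\; \xi_i \ge 0

% Class-weighted variant: separate costs for the two classes
\min_{w,\,b,\,\xi}\; \frac{1}{2}\lVert w \rVert^2
  + C_{+} \sum_{i:\,y_i=+1} \xi_i
  + C_{-} \sum_{i:\,y_i=-1} \xi_i
\quad \text{s.t.} \quad y_i\,(w^\top x_i + b) \ge 1 - \xi_i,\;\; \xi_i \ge 0
```

With $C_{+} \gg C_{-}$, a margin violation on a minority point costs far more than one on a majority point, so the optimizer prefers boundaries that keep the rare class on the correct side.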

Key Features of the nslackssvm Library

- Handles Imbalanced Data: Its primary purpose is to provide better performance on datasets where the class distribution is skewed.

- Dual Coordinate Descent Optimization: It uses an efficient optimization algorithm, which is often faster than more generic quadratic solvers, especially for large-scale problems.

- Kernel Trick: Like other SVMs, it supports the kernel trick, allowing it to find non-linear decision boundaries. Common kernels like Linear, Polynomial, and RBF are typically supported.

- Pythonic Interface: It is designed to be used within the Python data science ecosystem, making it easy to integrate with libraries like NumPy and scikit-learn.

How to Install and Use nslackssvm

Installation

You can install the library using pip. It's a good practice to do this in a virtual environment.

pip install nslackssvm

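Note: pip installs Python dependencies such as NumPy automatically. If the library ships compiled extensions without a prebuilt wheel for your platform, you may also need a C++ compiler installed separately, since pip does not provide one.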
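Before running the example, it can help to confirm that the packages are importable in the active environment. The helper below is a minimal sketch using only the standard library; `is_installed` is a hypothetical name, and `nslackssvm` as the import name is an assumption taken from the pip command above.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if the import system can locate a top-level module."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    # "nslackssvm" is the import name assumed from the pip command above.
    for name in ("numpy", "sklearn", "nslackssvm"):
        status = "found" if is_installed(name) else "MISSING"
        print(f"{name}: {status}")
```

If a package shows as missing, check that the virtual environment you installed into is the one currently activated.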

Usage Example

Here is a complete example demonstrating how to use nslackssvm on a synthetic imbalanced dataset. We'll compare its performance to a standard scikit-learn SVM to highlight the difference.

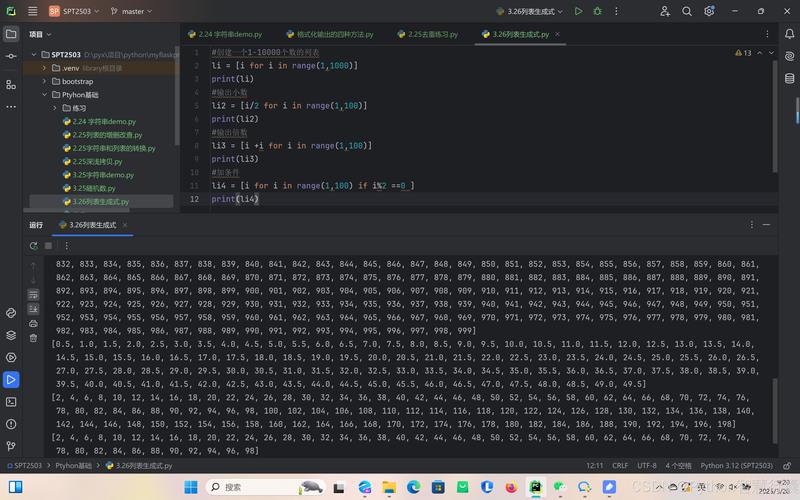

Step 1: Import Libraries and Create Data

import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import classification_report, confusion_matrix
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC  # Standard SVM for comparison

# Import the nslackssvm library
from nslackssvm import NSlackSSVM

# 1. Create an imbalanced dataset
# 1000 samples, 1 informative feature, 99% of samples in class 0
X, y = make_classification(
    n_samples=1000,
    n_features=1,
    n_informative=1,
    n_redundant=0,
    n_clusters_per_class=1,
    weights=[0.99],  # 99% class 0, 1% class 1
    flip_y=0,
    random_state=42
)

# Split the data
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# It's crucial to scale data for SVMs
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.transform(X_test)

print("Class distribution in training set:")
print(np.bincount(y_train))

Step 2: Train and Evaluate a Standard SVM

# 2. Train a standard SVM (from scikit-learn)
# C is left at 1.0 here, and the same value applies to both classes.
print("\n--- Standard SVM (scikit-learn) ---")

standard_svm = SVC(kernel='linear', C=1.0, class_weight=None, random_state=42)
standard_svm.fit(X_train_scaled, y_train)
y_pred_standard = standard_svm.predict(X_test_scaled)

print("Classification Report:")
print(classification_report(y_test, y_pred_standard, target_names=['Class 0', 'Class 1']))

print("Confusion Matrix:")
print(confusion_matrix(y_test, y_pred_standard))

Step 3: Train and Evaluate the nslackssvm Model

# 3. Train the Non-Equal Slacks SVM
print("\n--- Non-Equal Slacks SVM (nslackssvm) ---")

# The key is to set different C values for each class.
# C_pos: Cost for misclassifying a positive (minority) sample.
# C_neg: Cost for misclassifying a negative (majority) sample.
# We set C_pos much higher to force the model to focus on the minority class.
nslack_svm = NSlackSSVM(
    C_pos=100.0,  # High cost for misclassifying the rare class (1)
    C_neg=1.0,    # Lower cost for misclassifying the common class (0)
    kernel='linear'
)
nslack_svm.fit(X_train_scaled, y_train)
y_pred_nslack = nslack_svm.predict(X_test_scaled)

print("Classification Report:")
print(classification_report(y_test, y_pred_nslack, target_names=['Class 0', 'Class 1']))

print("Confusion Matrix:")
print(confusion_matrix(y_test, y_pred_nslack))

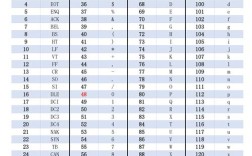

Expected Output and Interpretation

When you run this code, you will likely see a significant difference:

Standard SVM Output: The standard SVM will probably have very high accuracy (e.g., 99%), but its recall for the minority class (Class 1) may be very low, possibly 0.00. This means it rarely, if ever, identifies the rare positive cases. The confusion matrix would then show most Class 1 samples misclassified as Class 0.

nslackssvm Output: The nslackssvm model will likely have a slightly lower overall accuracy because it makes more "mistakes" on the majority class in order to identify the minority class. However, its recall for the minority class (Class 1) should be much higher. This is exactly what we want! The confusion matrix should show far fewer false negatives for Class 1.

Keep in mind that the test split here contains only a handful of Class 1 samples (roughly 3 of the 10 generated), so recall moves in coarse steps and a single prediction can change it a lot.

This demonstrates the core strength of nslackssvm: it trades off some performance on the majority class to achieve significantly better performance on the minority class, which is often the more important one in applications like fraud detection or disease diagnosis.
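To make the accuracy-versus-recall trade-off concrete, here is a small worked example in plain Python. The confusion-matrix counts are hypothetical and chosen only for illustration; they are not results from the code above.

```python
def accuracy_and_recall(tn: int, fp: int, fn: int, tp: int) -> tuple[float, float]:
    """Compute overall accuracy and positive-class recall from confusion-matrix counts."""
    total = tn + fp + fn + tp
    accuracy = (tn + tp) / total
    # Recall is undefined when there are no positives; report 0.0 in that case.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, recall


# Hypothetical test set: 297 negatives, 3 positives.
# Model A predicts "negative" for everything.
acc_a, rec_a = accuracy_and_recall(tn=297, fp=0, fn=3, tp=0)
# Model B catches all 3 positives but raises 12 false alarms.
acc_b, rec_b = accuracy_and_recall(tn=285, fp=12, fn=0, tp=3)

print(f"Model A: accuracy={acc_a:.2f}, recall={rec_a:.2f}")  # accuracy=0.99, recall=0.00
print(f"Model B: accuracy={acc_b:.2f}, recall={rec_b:.2f}")  # accuracy=0.96, recall=1.00
```

Model A looks better on accuracy alone, yet it never finds a positive case, which is why minority-class recall is the number to watch on imbalanced data.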

Comparison with Alternatives

While nslackssvm is a specialized tool, other methods in Python also handle imbalanced data:

| Method | How it Works | Pros | Cons |
|---|---|---|---|
| nslackssvm | Assigns different penalty costs (C) to different classes during SVM training. | Directly addresses the cost of misclassification, highly effective for SVMs. | Less common than other methods, smaller community. |
| class_weight in scikit-learn | Scales C per class. With class_weight='balanced', the weights are set inversely proportional to class frequency; a dict sets them manually. | Very easy to implement (SVC(class_weight='balanced')), part of a popular library. | The 'balanced' heuristic may not be the best weighting for a given problem; tuning the weights by hand takes extra search. |
| Resampling (Oversampling/Undersampling) | Creates a new, balanced dataset by either duplicating minority samples or removing majority samples. | Intuitive, many libraries support it (e.g., imbalanced-learn). | Can lead to overfitting (oversampling) or loss of information (undersampling). |
| Anomaly Detection / One-Class SVM | Treats the minority class as an "anomaly" and learns the boundary of the majority class. | Useful when the minority class is extremely rare and hard to define. | Not a true classification problem; may not perform well if minority class has distinct patterns. |
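The resampling row can be illustrated with a few lines of NumPy. This is a minimal sketch of random oversampling, not the imbalanced-learn implementation; `random_oversample` is a hypothetical helper and the toy arrays are made up for the example.

```python
import numpy as np


def random_oversample(X, y, random_state=0):
    """Duplicate minority-class rows at random until every class matches the largest."""
    rng = np.random.default_rng(random_state)
    classes, counts = np.unique(y, return_counts=True)
    target = counts.max()
    keep = []
    for cls, count in zip(classes, counts):
        idx = np.flatnonzero(y == cls)
        # Sample extra indices with replacement to reach the target count.
        extra = rng.choice(idx, size=target - count, replace=True)
        keep.append(np.concatenate([idx, extra]))
    # Shuffle so the duplicated rows are not grouped at the end.
    order = rng.permutation(np.concatenate(keep))
    return X[order], y[order]


X = np.arange(20, dtype=float).reshape(10, 2)
y = np.array([0] * 8 + [1] * 2)
X_bal, y_bal = random_oversample(X, y)
print(np.bincount(y_bal))  # [8 8]
```

Resampling should be applied to the training split only; resampling before the split leaks duplicated rows into the test set.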

For many users, starting with scikit-learn's class_weight='balanced' is the easiest first step, and often an effective one. If that setting is not sufficient, you can pass explicit per-class weights to class_weight as a dict, or turn to nslackssvm, which is built around explicit control over the class-specific costs.
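As a point of comparison, the sketch below uses only scikit-learn to try the three weighting options side by side: no weighting, class_weight='balanced', and a manual dict. The dataset settings and the 1:20 ratio are arbitrary choices for illustration, and the printed recalls will depend on the data.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic imbalanced data (about 95% class 0); these settings are illustrative.
X, y = make_classification(
    n_samples=2000, n_features=5, n_informative=3, n_redundant=0,
    weights=[0.95], flip_y=0.02, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Fit the scaler on the training split only, then apply it to both splits.
scaler = StandardScaler().fit(X_train)
X_train, X_test = scaler.transform(X_train), scaler.transform(X_test)

settings = {
    "uniform C": None,                  # same cost for both classes
    "balanced": "balanced",             # weights inversely proportional to class frequency
    "manual 1:20": {0: 1.0, 1: 20.0},   # explicit per-class multipliers on C
}

recalls = {}
for label, class_weight in settings.items():
    model = SVC(kernel="linear", C=1.0, class_weight=class_weight)
    model.fit(X_train, y_train)
    recalls[label] = recall_score(y_test, model.predict(X_test))
    print(f"{label}: minority recall = {recalls[label]:.2f}")
```

In SVC, each class weight multiplies C for that class, so the dict form plays the same role as separate per-class costs.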