Of course! statsmodels is a powerful and essential Python library for statistical modeling, hypothesis testing, and data exploration. It's the go-to library when you need to understand the statistical underpinnings of your data, not just build a predictive model.

Here’s a comprehensive guide covering what it is, why it's important, and how to use it with clear examples.

What is statsmodels?

statsmodels is a Python package that provides classes and functions for the estimation of many different statistical models, as well as for conducting statistical tests, and statistical data exploration.

Key Philosophy: Unlike scikit-learn, which is primarily focused on prediction, statsmodels is focused on inference. It provides rich statistical output like p-values, confidence intervals, R-squared, AIC/BIC, and detailed ANOVA tables to help you understand the relationships in your data.

Installation and Basic Setup

First, you need to install it. It's highly recommended to also install pandas for data handling and matplotlib & seaborn for plotting.

pip install statsmodels pandas matplotlib seaborn

You'll typically import it like this:

import numpy as np

import pandas as pd

import statsmodels.api as sm

import statsmodels.formula.api as smf

import matplotlib.pyplot as plt

import seaborn as sns

# Set a nice style for plots

sns.set_style("whitegrid")

Core Concepts: Endog vs. Exog

statsmodels uses specific terminology for variables:

- Endogenous (endog): The dependent variable, i.e. the variable you are trying to predict or explain (e.g., y).

- Exogenous (exog): The independent or explanatory variables, i.e. the variables you use to predict the endogenous variable (e.g., X).

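When you build a model with the array interface (sm.OLS, sm.GLM, and so on), you'll almost always need to add a constant to your exogenous variables using sm.add_constant(). This adds a column of ones to your data, which represents the intercept term (β₀) in the linear model. The formula interface (smf) includes an intercept automatically, so no extra step is needed there.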

Key Features and Examples

Let's dive into the most common use cases.

Example Dataset: The mtcars Dataset

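We'll use the classic mtcars dataset. It is not bundled with statsmodels itself, but sm.datasets.get_rdataset() downloads it from the Rdatasets collection, so an internet connection is required. It contains data on car mileage, horsepower, weight, etc. for 32 cars.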

# Load the dataset

mtcars = sm.datasets.get_rdataset("mtcars", "datasets").data  # mtcars lives in R's "datasets" package

# Display the first few rows

print(mtcars.head())

|   | mpg | cyl | disp | hp | drat | wt | qsec | vs | am | gear | carb |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 21.0 | 6 | 160.0 | 110 | 3.90 | 2.620 | 16.46 | 0 | 1 | 4 | 4 |
| 1 | 21.0 | 6 | 160.0 | 110 | 3.90 | 2.875 | 17.02 | 0 | 1 | 4 | 4 |
| 2 | 22.8 | 4 | 108.0 | 93 | 3.85 | 2.320 | 18.61 | 1 | 1 | 4 | 1 |
| 3 | 21.4 | 6 | 258.0 | 110 | 3.08 | 3.215 | 19.44 | 1 | 0 | 3 | 1 |
| 4 | 18.7 | 8 | 360.0 | 175 | 3.15 | 3.440 | 17.02 | 0 | 0 | 3 | 2 |

A. Linear Regression (OLS - Ordinary Least Squares)

This is the most fundamental statistical model. We want to see how car weight (wt) and horsepower (hp) affect miles per gallon (mpg).

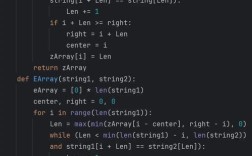

Method 1: Using Arrays (Numpy/Pandas)

# Define dependent (endog) and independent (exog) variables

y = mtcars['mpg']

X = mtcars[['wt', 'hp']]

# IMPORTANT: Add a constant (intercept) to the exogenous variables

X = sm.add_constant(X)

# Create and fit the OLS model

model = sm.OLS(y, X)

results = model.fit()

# Print the summary of the model

print(results.summary())

How to Interpret the Summary Output:

- R-squared: 0.827. About 83% of the variation in mpg is explained by wt and hp. A higher value means a closer fit, but R-squared never decreases when predictors are added, so use the adjusted R-squared (0.815 here) to compare models.

- coef (Coefficient):

  - const: 37.2273. This is the intercept: the predicted mpg if both wt and hp were zero (an extrapolation, since no car has zero weight).
  - wt: -3.8778. For every one-unit increase in weight (wt is measured in 1,000 lbs), mpg is predicted to decrease by about 3.88, holding hp constant.
  - hp: -0.0318. For every one-unit increase in horsepower (hp), mpg is predicted to decrease by about 0.032, holding wt constant.

- P>|t| (p-value): A low p-value (typically < 0.05) indicates that the coefficient is statistically significantly different from zero. Here, both wt (p < 0.001) and hp (p = 0.001) are significant: associations this strong would be unlikely if there were no linear relationship with mpg. Significance alone does not establish a causal effect.

Method 2: Using Formulas (R-like syntax)

This is often more intuitive and readable. The formula mpg ~ wt + hp means "model mpg as a function of wt and hp".

# Using the formula API

model_formula = smf.ols('mpg ~ wt + hp', data=mtcars)

results_formula = model_formula.fit()

# The results are identical

print(results_formula.summary())

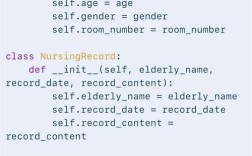

B. Generalized Linear Models (GLM)

What if your dependent variable isn't continuous? For example, if it's binary (0/1) or a count. GLMs extend linear regression to these cases.

Example: Logistic Regression

Let's model whether a car has a manual transmission (am=1) rather than an automatic one (am=0) based on weight (wt) and horsepower (hp).

# The dependent variable must be binary for logistic regression

y = mtcars['am']

X = mtcars[['wt', 'hp']]

X = sm.add_constant(X)

# Use a GLM with the Binomial family; its default link function is the logit

logit_model = sm.GLM(y, X, family=sm.families.Binomial())

logit_results = logit_model.fit()

print(logit_results.summary())

Interpretation:

The coefficients are in log-odds. To interpret them as odds ratios, exponentiate them: np.exp(logit_results.params).

- A negative coefficient for wt means that as weight increases, the log-odds of am=1 decrease. Since am=1 denotes a manual transmission in mtcars, heavier cars are less likely to be manual (and more likely to be automatic).

C. Analysis of Variance (ANOVA)

ANOVA tests if there are statistically significant differences between the means of three or more independent groups.

Example: Does the number of cylinders (cyl) affect mpg?

First, we need to fit a model, then we can perform an ANOVA on it.

# Fit an OLS model with 'cyl' as a predictor

# C(cyl) tells statsmodels to treat 'cyl' as a categorical variable

model_anova = smf.ols('mpg ~ C(cyl)', data=mtcars).fit()

# Perform the ANOVA

anova_table = sm.stats.anova_lm(model_anova, typ=2)

print(anova_table)

Interpretation:

- PR(>F) (p-value): The p-value is extremely low (on the order of 1e-9). We can therefore reject the null hypothesis that the mean mpg is the same for cars with 4, 6, and 8 cylinders: there is a statistically significant difference in mpg across the cylinder groups.

D. Time Series Analysis

statsmodels has broad time series support in its tsa module, including autoregressive, ARIMA, and related models.

Example: Autoregressive (AR) Model

Let's model the US census population series (uspop), downloaded from the Rdatasets collection.

# Get the US population dataset

pop = sm.datasets.get_rdataset("uspop", "datasets").data

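# Convert to a time series index

# (assumes Rdatasets exports this R time series with 'time' and 'value' columns)

pop.index = pd.to_datetime(pop['time'].astype(int).astype(str), format='%Y')

pop = pop['value']

# Plot the data

pop.plot(figsize=(12, 6), title="US Population Over Time")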

plt.ylabel("Population")

plt.show()

# Fit an AR(1) model (an autoregressive model of order 1)

# With d=0, ARIMA includes a constant term by default (equivalent to trend='c')

ar_model = sm.tsa.ARIMA(pop, order=(1, 0, 0)) # (p, d, q) -> (1, 0, 0) is AR(1)

ar_results = ar_model.fit()

print(ar_results.summary())

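Interpretation: The summary for an ARIMA model will show the coefficient for the AR(1) term (ar.L1). A value close to 1 indicates strong persistence in the series (each value is highly dependent on the previous one). For a steadily trending series such as population, an estimate very near 1 is also a sign of non-stationarity, in which case differencing (d=1) is usually more appropriate.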

statsmodels vs. scikit-learn

This is a crucial distinction for any Python data scientist.

| Feature | statsmodels | scikit-learn |
|---|---|---|
| Primary Goal | Inference (understanding relationships, statistical tests) | Prediction (building models to predict new data) |
| Output | Detailed statistical summaries (p-values, R-squared, confidence intervals) | Model object with a .predict() method. Focus on performance metrics (accuracy, MSE, etc.). |
| API | Formula-based (smf.ols('y ~ x', data=df)) and array-based. | Primarily object-oriented, based on fit() and predict(). |
| Typical Use Case | "Is variable X significant in predicting Y?" | "What is the most accurate model to predict Y from X?" |
| Example Models | OLS, GLM, ANOVA, ARIMA, t-tests | Linear Regression, SVM, Random Forest, Gradient Boosting, K-Means Clustering |

When to use which?

- Use statsmodels when you are in the exploratory phase, trying to understand the data, test hypotheses, and build a statistical model.

- Use scikit-learn when your goal is to build a predictive pipeline, perform cross-validation, tune hyperparameters, and deploy a model for making predictions on new, unseen data.

You often use them together! For example, you might use statsmodels to identify the most significant predictors and then use scikit-learn to build a final, highly predictive model.

Official Resources

- API Documentation: https://www.statsmodels.org/stable/index.html

- User Guide: https://www.statsmodels.org/stable/user-guide.html (Excellent for tutorials and deeper dives into specific models).