Of course! Python is an excellent language for probability, statistics, and data analysis. It has a rich ecosystem of libraries that make complex calculations and simulations accessible.

Here's a comprehensive guide to Python for probability, broken down into key concepts, essential libraries, and practical examples.

Core Concepts & The Math Behind It

Before diving into code, it's helpful to recall some fundamental probability concepts:

- Probability of an Event: A number between 0 and 1, representing the likelihood of an event occurring.

- Random Variable: A variable whose possible values are outcomes of a random phenomenon (e.g., the result of a die roll).

- Probability Distribution: A function that describes the likelihood of obtaining the possible values of a random variable.

- Discrete: For countable outcomes (e.g., dice rolls, coin flips).

- Continuous: For uncountable outcomes (e.g., height, weight).

- Expected Value: The long-run average value of repetitions of the experiment it represents.

- Variance & Standard Deviation: Measures of how spread out the values are from the expected value.

Essential Python Libraries for Probability

You'll primarily use three libraries: random, numpy, and scipy.

A. random: The Foundation

The built-in random module is great for simple simulations and generating random numbers.

random.random(): Generates a random float between 0.0 and 1.0.random.randint(a, b): Generates a random integer betweenaandb(inclusive).random.choice(sequence): Picks a random element from a sequence (list, tuple, etc.).random.shuffle(sequence): Shuffles a sequence in place.

B. numpy: Numerical Powerhouse

NumPy is the cornerstone of numerical computing in Python. Its random module is much more powerful and faster than the built-in random for array operations.

np.random.rand(d0, d1, ..., dn): Creates an array of given shape and fills it with random samples from a uniform distribution over [0, 1).np.random.randn(d0, d1, ..., dn): Creates an array of given shape and fills it with random samples from the standard normal distribution (mean=0, std=1).np.random.randint(low, high, size): Generates random integers from a low (inclusive) to high (exclusive) distribution.np.random.choice(a, size): Generates a random sample from a given 1-D array.

C. scipy.stats: The Statistics Library

SciPy builds on NumPy and provides a vast collection of probability distributions and statistical functions. This is where you'll find the "official" distributions.

scipy.stats.norm: The normal (Gaussian) distribution.scipy.stats.binom: The binomial distribution.scipy.stats.poisson: The Poisson distribution.scipy.stats.uniform: The uniform distribution.- And many more...

Each distribution object has useful methods:

.rvs(size=N): Generate N random variates (samples)..pdf(x): Probability Density Function (for continuous distributions)..pmf(k): Probability Mass Function (for discrete distributions)..cdf(x): Cumulative Distribution Function (P(X <= x))..mean(),.var(),.std(): Get the mean, variance, and standard deviation.

Practical Examples

Let's put these libraries to work.

Example 1: Simulating a Coin Flip

This is a simple example of a Bernoulli trial with a 50% chance of success (heads).

import random

def flip_coin():

"""Simulates a single coin flip."""

return random.choice(['Heads', 'Tails'])

# Simulate 10 coin flips

print("10 Coin Flips:")

for _ in range(10):

print(flip_coin())

Example 2: Simulating a 6-Sided Die Roll

Here, we'll use NumPy to simulate many rolls and then calculate the average. The expected value for a fair die is (1+2+3+4+5+6)/6 = 3.5.

import numpy as np

# Simulate 10,000 die rolls

num_rolls = 10000

rolls = np.random.randint(1, 7, size=num_rolls)

# Calculate the average (mean)

average_roll = np.mean(rolls)

print(f"Simulated {num_rolls} die rolls.")

print(f"Average roll: {average_roll:.2f}") # Should be close to 3.5

print(f"Expected average: 3.5")

Example 3: Analyzing a Binomial Distribution

A binomial distribution models the number of successes in a fixed number of independent trials (e.g., number of heads in 10 coin flips).

Let's model 10 coin flips and find the probability of getting exactly 7 heads. The probability of heads is p=0.5.

from scipy.stats import binom

# Parameters

n_flips = 10 # Number of trials

p_heads = 0.5 # Probability of success on each trial

k_success = 7 # Number of desired successes

# Calculate the probability of getting exactly 7 heads

prob_7_heads = binom.pmf(k_success, n_flips, p_heads)

print(f"Probability of getting exactly {k_success} heads in {n_flips} flips: {prob_7_heads:.4f}")

# Let's simulate this to see if it matches

num_simulations = 100000

count_7_heads = 0

for _ in range(num_simulations):

# Simulate 10 flips (1 for heads, 0 for tails)

flips = np.random.choice([0, 1], size=n_flips, p=[1-p_heads, p_heads])

if np.sum(flips) == k_success:

count_7_heads += 1

simulated_prob = count_7_heads / num_simulations

print(f"Simulated probability: {simulated_prob:.4f}")

Example 4: Working with a Normal Distribution

The normal distribution is everywhere (heights, test scores, measurement errors). Let's say IQ scores are normally distributed with a mean of 100 and a standard deviation of 15.

Question: What is the probability that a randomly selected person has an IQ between 115 and 130?

from scipy.stats import norm

import matplotlib.pyplot as plt

# Parameters of the normal distribution

mu = 100 # Mean

sigma = 15 # Standard deviation

# Calculate the probability of IQ being between 115 and 130

# P(115 < X < 130) = P(X < 130) - P(X < 115)

prob = norm.cdf(130, mu, sigma) - norm.cdf(115, mu, sigma)

print(f"Probability of IQ between 115 and 130: {prob:.4f}")

# --- Visualization ---

# Create a range of x values

x = np.linspace(mu - 4*sigma, mu + 4*sigma, 1000)

# Calculate the PDF for these x values

y = norm.pdf(x, mu, sigma)

# Plot the distribution

plt.figure(figsize=(10, 6))

plt.plot(x, y, label='Normal Distribution (IQ Scores)')

# Shade the area of interest

plt.fill_between(x, y, where=(x > 115) & (x < 130), color='blue', alpha=0.3, label='P(115 < IQ < 130)')

'Normal Distribution of IQ Scores')

plt.xlabel('IQ Score')

plt.ylabel('Probability Density')

plt.legend()

plt.grid(True)

plt.show()

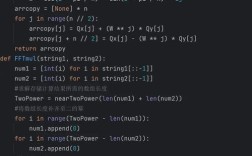

Example 5: Monte Carlo Simulation - Estimating Pi

This is a classic and powerful demonstration of using random sampling to solve a problem.

Concept: We can use the ratio of areas of a circle inscribed in a square to estimate Pi.

- Imagine a square with side length 2, and a circle with radius 1 inside it.

- The area of the square is

2 * 2 = 4. - The area of the circle is

pi * r^2 = pi * 1^2 = pi. - The ratio of the circle's area to the square's area is

pi / 4. - If we randomly throw "darts" at the square, the probability that a dart lands inside the circle is

pi / 4. - We can simulate this and count the ratio of darts inside the circle to total darts to estimate

pi.

import numpy as np

def estimate_pi(num_points):

"""Estimates Pi using a Monte Carlo simulation."""

# Generate random points in the square [-1, 1] x [-1, 1]

x = np.random.uniform(-1, 1, num_points)

y = np.random.uniform(-1, 1, num_points)

# Calculate the distance from the origin (0,0) for each point

distances = np.sqrt(x**2 + y**2)

# Count points that are inside the circle (distance <= 1)

points_inside_circle = np.sum(distances <= 1)

# The ratio of points inside the circle to total points is an estimate of pi/4

estimated_pi = 4 * points_inside_circle / num_points

return estimated_pi

# Run the simulation with a large number of points

num_points = 1000000

pi_estimate = estimate_pi(num_points)

print(f"Estimated Pi with {num_points:,} points: {pi_estimate:.6f}")

print(f"Actual value of Pi: {np.pi:.6f}")

Next Steps & Advanced Topics

Once you're comfortable with the basics, you can explore:

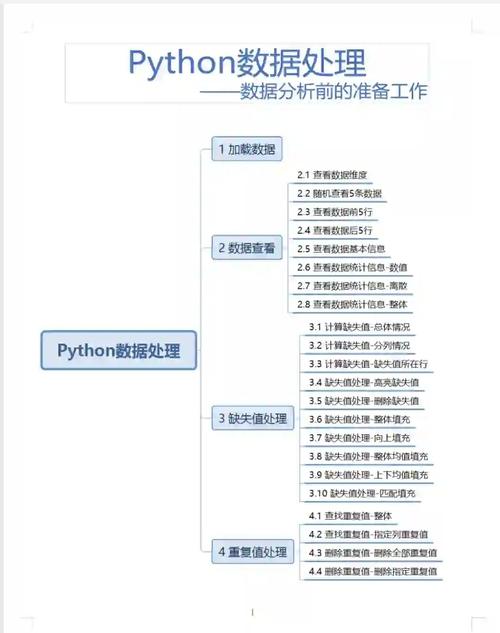

- Pandas: For analyzing real-world datasets and calculating descriptive statistics (mean, median, standard deviation, etc.).

- Matplotlib & Seaborn: For creating more sophisticated statistical plots like histograms, box plots, and violin plots.

- Hypothesis Testing: Using

scipy.statsto perform tests like t-tests, chi-squared tests, and ANOVA to determine if observed results are statistically significant. - Bayesian Statistics: Libraries like

PyMCorStanallow for building complex probabilistic models to update beliefs as new data comes in. This is a huge and powerful field.