This guide covers user agents in Python: why they matter, the most popular libraries, and practical examples.

What is a User Agent?

A User Agent (UA) is a string that a web browser or application sends to a web server to identify itself. It typically includes information like:

- The browser's name and version (e.g., Chrome/119.0)

- The operating system (e.g., Windows NT 10.0)

- The rendering engine (e.g., AppleWebKit/537.36)

- Device type (sometimes implied)

Example User Agent String:

Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36
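To see how those parts are laid out, the string can be split into its "Name/version" tokens and the parenthesized comments that follow them. The following is a minimal sketch using only the standard library; the helper name split_user_agent and its regular expression are illustrative assumptions, not a robust parser, since real user agent strings vary widely.

```python
import re

# Illustrative pattern: a "Name/version" product token, optionally followed by
# a parenthesized comment. Real user agent strings can be messier than this.
TOKEN_RE = re.compile(
    r"(?P<name>[^\s/()]+)/(?P<version>[^\s()]+)(?:\s+\((?P<comment>[^)]*)\))?"
)


def split_user_agent(ua_string):
    """Return a list of (name, version, comment) tuples found in ua_string."""
    return [
        (m.group("name"), m.group("version"), m.group("comment"))
        for m in TOKEN_RE.finditer(ua_string)
    ]


example = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36"
)

for name, version, comment in split_user_agent(example):
    print(f"{name:12} {version:12} {comment}")
```

In this example the operating system appears in the comment after the first token, while the browser version is carried by the Chrome token near the end.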

Why Should You Use User Agents in Python?

- Web Scraping: Some websites block bots or scrapers. By rotating user agents, you can mimic different browsers and avoid being blocked.

- Accessing Content: Some websites serve different content to mobile users vs. desktop users. You can use a mobile user agent to get the mobile version of a site.

- API Testing: You might want to test how your API behaves when requests come from different types of clients (e.g., a mobile app, a web browser, a script).

- Bypassing Caching: When a cache stores separate variants per user agent (for example, because the server sends Vary: User-Agent), a different user agent can sometimes force a fresh response instead of a cached one.
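Each of these use cases comes down to setting one request header. As a small sketch that stays within the standard library (the examples later in this guide use requests instead), the snippet below builds two request objects for the same URL with different user agents, without sending anything over the network. The helper name build_request is hypothetical; the two strings are example desktop and iPhone user agents.

```python
from urllib.request import Request

DESKTOP_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36"
)
MOBILE_UA = (
    "Mozilla/5.0 (iPhone; CPU iPhone OS 12_0 like Mac OS X) "
    "AppleWebKit/605.1.15 (KHTML, like Gecko) Version/12.0 "
    "Mobile/15E148 Safari/604.1"
)


def build_request(url, user_agent):
    """Create (but do not send) a GET request carrying the given User-Agent."""
    return Request(url, headers={"User-Agent": user_agent})


desktop_request = build_request("http://httpbin.org/user-agent", DESKTOP_UA)
mobile_request = build_request("http://httpbin.org/user-agent", MOBILE_UA)

# urllib stores header names via str.capitalize(), so the key is "User-agent".
print(desktop_request.get_header("User-agent"))
print(mobile_request.get_header("User-agent"))
```

Passing either object to urllib.request.urlopen would send it; a server that distinguishes clients by user agent would then see one desktop and one mobile visitor.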

Method 1: The fake_useragent Library

fake_useragent is a third-party package (it is not part of Python's standard library) and is the most popular and straightforward library for generating random user agents. It's a great starting point for any scraping project.

Installation

pip install fake_useragent

Basic Usage

The library is very simple to use. Create a UserAgent object and read one of its properties; each access returns a user agent string.

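from fake_useragent import UserAgent

# Create a UserAgent object
ua = UserAgent()

# --- Get a random user agent ---
random_ua = ua.random
print(f"Random User Agent: {random_ua}")

# --- Get a specific browser user agent ---
chrome_ua = ua.chrome
print(f"Chrome User Agent: {chrome_ua}")
firefox_ua = ua.firefox
print(f"Firefox User Agent: {firefox_ua}")
safari_ua = ua.safari
print(f"Safari User Agent: {safari_ua}")

# --- Get a specific OS user agent ---
# Lookups such as ua.windows or ua['android'] are treated as browser names,
# not operating systems. Recent releases accept an `os` argument on the
# constructor instead. The values below assume fake_useragent 2.x; accepted
# values differ between versions, so check the docs for your installed release.
windows_ua = UserAgent(os="Windows").random
print(f"Windows User Agent: {windows_ua}")
android_ua = UserAgent(os="Android").random
print(f"Android User Agent: {android_ua}")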

Practical Example: Using fake_useragent with requests

This is the most common use case. We'll create a function that fetches a URL using a random user agent on each request.

import requests

from fake_useragent import UserAgent

import time

def fetch_with_random_ua(url):

"""

Fetches a URL using a random user agent.

Includes error handling and a delay to be polite to the server.

"""

ua = UserAgent()

headers = {

'User-Agent': ua.random,

'Accept-Language': 'en-US, en;q=0.9'

}

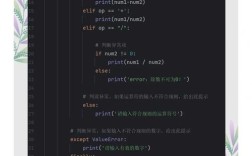

try:

print(f"Fetching {url} with UA: {headers['User-Agent']}")

response = requests.get(url, headers=headers, timeout=10)

# Check if the request was successful

response.raise_for_status() # Raises an HTTPError for bad responses (4xx or 5xx)

print(f"Successfully fetched. Status Code: {response.status_code}")

return response.text

except requests.exceptions.RequestException as e:

print(f"An error occurred: {e}")

return None

# --- Example Usage ---

if __name__ == "__main__":

target_url = 'http://httpbin.org/user-agent' # This URL returns the user agent it sees

html_content = fetch_with_random_ua(target_url)

if html_content:

print("\n--- Server Response ---")

# The response will be a JSON, so we can print it nicely

import json

print(json.dumps(json.loads(html_content), indent=2))

time.sleep(2) # Be a good internet citizen

# Fetch again to see a different user agent

html_content = fetch_with_random_ua(target_url)

if html_content:

print("\n--- Second Server Response ---")

print(json.dumps(json.loads(html_content), indent=2))
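Because a random pick is independent each time, two consecutive requests can occasionally carry the same string. If that matters, one option is to rotate through a pool and skip immediate repeats. The sketch below assumes a hand-maintained list (UA_POOL, with example values) and a hypothetical UserAgentRotator class; it uses only the standard library, and the pool could just as well be filled with strings produced by fake_useragent.

```python
import random

# Example values only; replace with a list you maintain yourself.
UA_POOL = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:120.0) "
    "Gecko/20100101 Firefox/120.0",
    "Mozilla/5.0 (iPhone; CPU iPhone OS 12_0 like Mac OS X) "
    "AppleWebKit/605.1.15 (KHTML, like Gecko) Version/12.0 "
    "Mobile/15E148 Safari/604.1",
]


class UserAgentRotator:
    """Hands out user agents at random, never the same one twice in a row."""

    def __init__(self, pool, rng=None):
        # Drop duplicates while keeping order, so "no repeats" is achievable.
        self._pool = list(dict.fromkeys(pool))
        if len(self._pool) < 2:
            raise ValueError("need at least two distinct user agents")
        self._rng = rng or random.Random()
        self._last = None

    def next(self):
        """Return a user agent different from the previous one."""
        candidates = [ua for ua in self._pool if ua != self._last]
        self._last = self._rng.choice(candidates)
        return self._last

    def headers(self):
        """Build a headers dict suitable for requests.get(..., headers=...)."""
        return {
            "User-Agent": self.next(),
            "Accept-Language": "en-US, en;q=0.9",
        }


rotator = UserAgentRotator(UA_POOL)
for _ in range(3):
    print(rotator.headers()["User-Agent"])
```

Passing a seeded random.Random instance as rng makes the sequence reproducible, which can help when debugging a scraper.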

Method 2: Using fake_useragent with scrapy (For Web Crawling)

If you are using the scrapy framework, a small downloader middleware can set a random user agent on every request.

Installation

pip install scrapy fake_useragent

Setup

- In your settings.py file, disable Scrapy's built-in user agent middleware and enable your own. fake_useragent itself does not ship a Scrapy middleware, so the path below points at the class defined in the next step ('myproject' is a placeholder for your project's package name):

    # settings.py
    DOWNLOADER_MIDDLEWARES = {
        # Disable the built-in middleware so it does not set its own user agent
        'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
        # Lower numbers run earlier for outgoing requests
        'myproject.middlewares.CustomRandomUserAgentMiddleware': 400,
    }

- In your middlewares.py file, define the middleware:

    # middlewares.py
    from fake_useragent import UserAgent


    class CustomRandomUserAgentMiddleware:
        def __init__(self, crawler):
            self.ua = UserAgent()

        @classmethod
        def from_crawler(cls, crawler):
            return cls(crawler)

        def process_request(self, request, spider):
            # Assign a random user agent unless the request already sets one
            request.headers.setdefault('User-Agent', self.ua.random)
            # Or restrict to specific browsers (needs `import random`):
            # request.headers.setdefault(
            #     'User-Agent',
            #     random.choice([self.ua.chrome, self.ua.firefox, self.ua.safari]))

If you prefer not to write your own, the separate scrapy-fake-useragent package provides a ready-made scrapy_fake_useragent.middleware.RandomUserAgentMiddleware.

Now, every request your spider makes will automatically have a new, random user agent.

Method 3: Advanced Control with user-agents Library

The user-agents library is the better fit if you need fine-grained control. It does not generate user agent strings; it parses existing ones into objects with properties you can inspect.

Installation

pip install user-agents

Basic Usage

from user_agents import parse

# --- Parse a user agent string ---
ua_string = 'Mozilla/5.0 (iPhone; CPU iPhone OS 12_0 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/12.0 Mobile/15E148 Safari/604.1'
user_agent = parse(ua_string)

# --- Check properties ---
# str(user_agent) is a short "device / OS / browser" summary, not the raw string
print(f"Summary: {user_agent}")
print(f"Is Mobile? {user_agent.is_mobile}")
print(f"Is Tablet? {user_agent.is_tablet}")
print(f"Is PC? {user_agent.is_pc}")
print(f"Is Bot? {user_agent.is_bot}")
print(f"Browser Family: {user_agent.browser.family}")
print(f"Browser Version: {user_agent.browser.version_string}")
print(f"OS Family: {user_agent.os.family}")
print(f"OS Version: {user_agent.os.version_string}")
print(f"Device Family: {user_agent.device.family}")

Practical Example: Conditional Logic Based on Device Type

You can use this library to change your scraping behavior based on the detected device.

from user_agents import parse
import requests


def get_page_for_device(url, user_agent_string):
    """
    Fetches a page and prints info based on the detected device type.
    """
    headers = {'User-Agent': user_agent_string}
    response = requests.get(url, headers=headers, timeout=5)
    user_agent = parse(user_agent_string)

    print(f"\n--- Fetching with UA: {user_agent_string} ---")
    if user_agent.is_mobile:
        print("Detected a mobile device. Requesting mobile-optimized content.")
    elif user_agent.is_tablet:
        print("Detected a tablet device. Requesting tablet-optimized content.")
    elif user_agent.is_pc:
        print("Detected a PC. Requesting desktop content.")
    elif user_agent.is_bot:
        print("Detected a bot. Acting like a bot.")
    else:
        print("Unknown device type.")
    return response.status_code


# --- Example Usage ---
if __name__ == "__main__":
    url = 'http://httpbin.org/user-agent'
    mobile_ua = 'Mozilla/5.0 (iPhone; CPU iPhone OS 12_0 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/12.0 Mobile/15E148 Safari/604.1'
    desktop_ua = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36'
    get_page_for_device(url, mobile_ua)
    get_page_for_device(url, desktop_ua)

Important Considerations & Best Practices

- Be Ethical: Always check a website's robots.txt file (e.g., http://example.com/robots.txt) and its Terms of Service before scraping. Respect their rules.

- Don't Overdo It: Rotating user agents is good, but making too many requests in a short period will get you blocked regardless. Always add delays between your requests (time.sleep(1)).

- Combine with Other Techniques: User agents are just one tool. For robust scraping, combine them with:

  - Proxies: To hide your IP address.

  - Sessions: To maintain cookies and login states.

  - Handling CAPTCHAs: Be prepared for challenges.

- Update Your List: User agent lists can become outdated. Older releases of the fake_useragent library downloaded browser data at run time, while recent releases bundle the data with the package, so upgrading the package is what refreshes the list. For very advanced needs, you might maintain your own list and update it periodically.

- Browser Fingerprinting: Modern anti-bot systems can do more than just check the User Agent. They can look at your screen resolution, installed fonts, browser plugins, and other "browser fingerprints." This is an advanced topic, but it's good to know that User Agent alone is not a silver bullet.
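The first two points in the list above (respecting robots.txt and spacing out requests) can be checked in code before any request is sent. The sketch below uses the standard library's urllib.robotparser. The rules are inlined so it runs without network access, and the names load_rules, polite_delay, and the "my-scraper" agent token are assumptions made for illustration.

```python
import random
from urllib.robotparser import RobotFileParser

# Example rules, inlined here; a real crawl would download the site's robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_delay(parser, user_agent, minimum=1.0, jitter=0.5):
    """Seconds to wait: the larger of `minimum` and Crawl-delay, plus jitter."""
    crawl_delay = parser.crawl_delay(user_agent) or 0
    return max(minimum, float(crawl_delay)) + random.uniform(0, jitter)


rules = load_rules(ROBOTS_TXT)
for url in ["http://example.com/", "http://example.com/private/data"]:
    if rules.can_fetch("my-scraper", url):
        wait = polite_delay(rules, "my-scraper")
        # A real crawl would call time.sleep(wait) here, then send the request.
        print(f"allowed: {url} (would wait {wait:.2f}s first)")
    else:
        print(f"disallowed by robots.txt: {url}")
```

The random jitter keeps requests from arriving at perfectly regular intervals; the minimum of one second mirrors the time.sleep(1) suggestion above and can be raised for slower sites.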