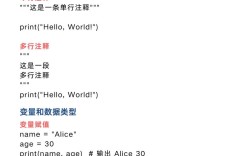

Of course! Let's dive deep into Python's multiprocessing.managers.QueueManager. It's a powerful but often misunderstood tool for inter-process communication (IPC).

What is QueueManager and Why Use It?

Imagine you have multiple processes, and you want them to communicate by sharing a queue. A simple multiprocessing.Queue works perfectly if all processes are running in the same script and are started by the same parent process.

However, what if:

- You want a producer process to put items into a queue.

- A consumer process, running in a completely separate script, needs to get items from that same queue.

- Or, you want to run the consumer on a different machine on a network.

This is where multiprocessing.managers.QueueManager shines. It allows you to create and share queues (and other managed objects) between unrelated processes, even across a network.

Think of it as a centralized server that holds the queue. Other processes (clients) connect to this server to access the queue.

Core Concepts

- Manager Server: A special process that hosts the shared objects (like the queue). It runs a listener that waits for connections from other processes.

- Manager Client: Any process that wants to use the shared object. It connects to the Manager Server and gets a "proxy" object. This proxy behaves like the real object (e.g., you can call

.put()on it), but all the work is actually done by the server process. - Proxy Object: When a client requests the queue, it doesn't get the queue itself. It gets a proxy. This proxy is a lightweight object that communicates with the Manager Server to perform operations (put, get, etc.) on the real queue.

BaseManager: The base class for creating custom manager servers. You subclass it to register the types of objects you want to share (likeQueue).

Step-by-Step Example: Producer and Consumer

Let's create a classic producer-consumer scenario where the producer and consumer are in separate files.

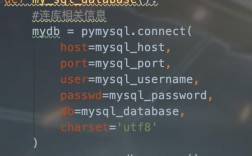

Step 1: Create the Manager Server (server.py)

This script will start the manager, register a queue, and run forever, waiting for clients.

# server.py

import multiprocessing

from multiprocessing.managers import BaseManager

# Define the queue type we want to share

queue = multiprocessing.Queue()

class QueueManager(BaseManager):

"""A custom manager for our queue."""

pass

# Register the queue with the manager

# The first argument is a name to access the queue by.

# The second is a function to create the queue.

QueueManager.register('get_task_queue', callable=lambda: queue)

if __name__ == '__main__':

# The address and port the manager will listen on

# '0.0.0.0' means it will accept connections from any network interface

manager = QueueManager(address=('0.0.0.0', 50000), authkey=b'abracadabra')

# Start the manager process

server = manager.get_server()

print('Server started on 0.0.0.0:50000. Waiting for connections...')

try:

server.serve_forever()

except KeyboardInterrupt:

print("\nServer shutting down.")

server.shutdown()

Key points in server.py:

- We define a standard

multiprocessing.Queue. - We create a

QueueManagerby subclassingBaseManager. QueueManager.register('get_task_queue', ...)is the crucial step. It tells the manager: "When a client asks for 'get_task_queue', create a queue using this function and give them a proxy to it."manager.get_server().serve_forever()starts the server loop.

Step 2: Create the Producer (producer.py)

This script will connect to the server and put items into the queue.

# producer.py

import time

from multiprocessing.managers import BaseManager

# Use the same authkey and address as the server

SERVER_ADDRESS = ('127.0.0.1', 50000)

AUTH_KEY = b'abracadabra'

class QueueManager(BaseManager):

pass

# Register the queue with the manager. The name MUST match the server's.

QueueManager.register('get_task_queue')

if __name__ == '__main__':

# Connect to the server

print('Producer: Connecting to server...')

manager = QueueManager(address=SERVER_ADDRESS, authkey=AUTH_KEY)

manager.connect()

# Get a proxy to the queue

task_queue = manager.get_task_queue()

print('Producer: Connected and got queue proxy.')

# Put some tasks into the queue

for i in range(10):

print(f'Producer: Putting task {i} into the queue.')

task_queue.put(f'Task {i}')

time.sleep(1)

print('Producer: All tasks sent. Shutting down.')

Key points in producer.py:

- It uses the exact same

QueueManagersubclass and registration ('get_task_queue'). - It doesn't create a queue; it connects to the manager using

manager.connect(). - It calls

manager.get_task_queue()to get a proxy to the queue. - It interacts with the proxy (

task_queue.put()) as if it were the real queue.

Step 3: Create the Consumer (consumer.py)

This script will also connect to the server and get items from the queue.

# consumer.py

from multiprocessing.managers import BaseManager

# Use the same authkey and address as the server

SERVER_ADDRESS = ('127.0.0.1', 50000)

AUTH_KEY = b'abracadabra'

class QueueManager(BaseManager):

pass

# Register the queue with the manager. The name MUST match the server's.

QueueManager.register('get_task_queue')

if __name__ == '__main__':

# Connect to the server

print('Consumer: Connecting to server...')

manager = QueueManager(address=SERVER_ADDRESS, authkey=AUTH_KEY)

manager.connect()

# Get a proxy to the queue

task_queue = manager.get_task_queue()

print('Consumer: Connected and got queue proxy.')

# Get tasks from the queue until it's empty

while True:

try:

# Use a timeout to prevent the consumer from blocking forever

# if the producer is done but the server is still running.

task = task_queue.get(timeout=5)

print(f'Consumer: Got {task}. Processing...')

time.sleep(2) # Simulate work

except Exception as e:

# If the queue is empty and the timeout is reached

if "Empty" in str(e):

print('Consumer: Queue is empty. Shutting down.')

break

else:

print(f"An error occurred: {e}")

break

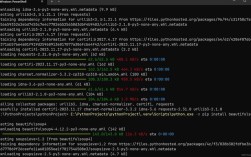

How to Run It

-

First, start the server:

python server.py

Output:

Server started on 0.0.0.0:50000. Waiting for connections... -

In a new terminal, start the producer:

python producer.py

Output:

Producer: Connecting to server... Producer: Connected and got queue proxy. Producer: Putting task 0 into the queue. Producer: Putting task 1 into the queue. ... Producer: Putting task 9 into the queue. Producer: All tasks sent. Shutting down. -

In a third terminal, start the consumer:

python consumer.py

Output:

Consumer: Connecting to server... Consumer: Connected and got queue proxy. Consumer: Got Task 0. Processing... Consumer: Got Task 1. Processing... ... Consumer: Got Task 9. Processing... Consumer: Queue is empty. Shutting down.

You have successfully shared a queue between three completely separate processes!

Important Considerations and Best Practices

-

Security (

authkey):- The

authkeyis like a password. Any process that knows it can connect to your manager and access your objects. - Never use a default or easily guessable key in a production environment.

- For network-wide use, generate a random, secure key and distribute it only to trusted clients.

- The

-

Error Handling:

- Network connections can fail. Always wrap your

manager.connect()andmanager.get_server()calls intry...exceptblocks. - If the server goes down, clients will get connection errors. They need a strategy to reconnect or fail gracefully.

- Network connections can fail. Always wrap your

-

Shutting Down Gracefully:

- The server runs forever with

serve_forever(). You need a way to stop it, typically with aKeyboardInterrupt(as shown) or a separate management process. - When the server shuts down, all client proxies become invalid. Any operation on them will raise an error.

- The server runs forever with

-

Performance (Proxy Overhead):

- Using a proxy adds a layer of network communication. Every call (

.put(),.get(),.qsize()) involves sending data over a socket. - For very high-performance, low-latency needs between processes on the same machine, a simple

multiprocessing.Queue(without a manager) will be faster because it avoids this overhead. QueueManageris ideal for decoupling processes or for network communication, not for maximum speed in a tightly-coupled application.

- Using a proxy adds a layer of network communication. Every call (

-

When to Use

QueueManager:- ✅ You need to share a queue between scripts.

- ✅ You want to distribute work across multiple machines.

- ✅ You want to decouple a producer from its consumers. The producer doesn't need to know or care about the consumers, and vice-versa. They just need to know the manager's address.

-

When to Use a Standard

multiprocessing.Queue:- ✅ All your processes are spawned from a single parent script.

- ✅ All processes are on the same machine.

- ✅ You need the absolute best possible performance for IPC.