Of course! When "objectness" comes up in Python code, it almost always refers to a concept from computer vision, specifically object detection. Let's break it down.

The Core Concept: What is "Objectness"?

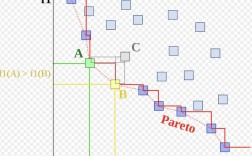

In simple terms, objectness is a score that quantifies how likely a given bounding box is to contain an object of any class, as opposed to background.

Imagine you're looking at an image. You can draw thousands of random rectangles (bounding boxes) on it. Most of these boxes will contain just background (sky, grass, a wall), but a few will contain actual objects like cars, people, or dogs.

The "objectness" score is a value between 0 and 1 (or 0% and 100%) assigned to each of these boxes:

- A high objectness score (e.g., 0.95) means the box is very likely to contain an object.

- A low objectness score (e.g., 0.10) means the box is very likely to contain only background.
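Under the hood, a detector usually produces an unbounded raw value (a logit) for each box and squashes it into this 0-to-1 range with the sigmoid function. A minimal plain-Python sketch; the example logits and the 0.5 cut-off between object and background are illustrative assumptions, not values from any particular model:

```python
import math
from typing import List, Tuple


def objectness_from_logit(logit: float) -> float:
    """Sigmoid: map a raw score (logit) to a probability in (0, 1)."""
    # Two branches keep math.exp from overflowing for large |logit|.
    if logit >= 0:
        return 1.0 / (1.0 + math.exp(-logit))
    z = math.exp(logit)
    return z / (1.0 + z)


def tag_boxes(logits: List[float], threshold: float = 0.5) -> List[Tuple[float, str]]:
    """Pair each box's objectness score with an 'object' / 'background' tag."""
    tagged = []
    for logit in logits:
        score = objectness_from_logit(logit)
        tagged.append((score, "object" if score >= threshold else "background"))
    return tagged


# Two hypothetical boxes: one confident object, one likely background.
for score, tag in tag_boxes([2.94, -2.2]):
    print(f"{score:.2f} -> {tag}")
# 0.95 -> object
# 0.10 -> background
```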

Why is it Useful?

Objectness is a key component in modern object detection pipelines, especially in two-stage detectors like Faster R-CNN.

- Efficiency: It allows the model to quickly filter out a massive number of potential bounding boxes that are clearly just background. Instead of running a slow, detailed classifier on every possible box, the model first runs a fast "objectness" check. This process is called Region Proposal.

- Focus: It directs the model's computational resources only to the regions of the image that are most promising for containing objects.
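Region proposal roughly amounts to ranking candidate boxes by objectness, keeping the best few, and discarding near-duplicates with non-maximum suppression (NMS). A simplified pure-Python sketch; the top_k=1000 and iou_threshold=0.7 defaults mirror common Faster R-CNN settings but are assumptions here, and real implementations run this on tensors (for example with torchvision.ops.nms):

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def propose_regions(boxes: List[Box], objectness: List[float],
                    top_k: int = 1000, iou_threshold: float = 0.7) -> List[int]:
    """Return the indices of the boxes kept as region proposals."""
    # 1. Rank candidates by objectness and keep only the top_k.
    order = sorted(range(len(boxes)), key=lambda i: objectness[i], reverse=True)[:top_k]
    # 2. Greedy NMS: drop a box if it overlaps an already-kept box too much.
    kept: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) < iou_threshold for j in kept):
            kept.append(i)
    return kept


boxes = [(0, 0, 10, 10), (1, 1, 10, 10), (20, 20, 30, 30), (0, 0, 5, 5)]
scores = [0.9, 0.8, 0.7, 0.1]
print(propose_regions(boxes, scores))  # [0, 2, 3]: box 1 overlaps box 0 with IoU 0.81
```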

Analogy: Think of it like a bouncer at a club.

- The bouncer (the objectness classifier) doesn't need to know your name or life story (what specific object you are).

- They just need to make a quick judgment: "Are you a person who looks like they belong in this club, or are you just random background noise?"

- Only those who pass the initial "objectness" check (the promising candidates) are sent to the main stage for a detailed inspection (the final classification step).
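The bouncer flow maps onto a simple two-stage gate. A hypothetical sketch: two_stage_detect, objectness_fn, and classifier_fn are illustrative names rather than part of any library, and the 0.5 threshold is an assumption. The point is that the expensive classifier is only called for candidates that pass the cheap check:

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def two_stage_detect(candidates: Sequence[T],
                     objectness_fn: Callable[[T], float],
                     classifier_fn: Callable[[T], str],
                     threshold: float = 0.5) -> List[Tuple[T, str]]:
    """Stage 1 (the bouncer): a cheap objectness check on every candidate.
    Stage 2 (the main stage): the expensive classifier runs only on survivors."""
    survivors = [c for c in candidates if objectness_fn(c) >= threshold]
    return [(c, classifier_fn(c)) for c in survivors]


# Toy usage with made-up scores: only "cat" and "dog" reach the classifier.
toy_scores = {"cat": 0.92, "sky": 0.05, "dog": 0.71}
print(two_stage_detect(list(toy_scores), toy_scores.get, lambda c: c.upper()))
# [('cat', 'CAT'), ('dog', 'DOG')]
```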

How is it Implemented in Python?

You will almost never implement the "objectness" mechanism from scratch. It's a complex part of a deep learning model. Instead, you use powerful, pre-built libraries like PyTorch or TensorFlow/Keras.

Here’s how you typically encounter and use objectness in Python.

Scenario 1: Using a Pre-trained Object Detection Model

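When you use a pre-trained detector (like Faster R-CNN from torchvision), the objectness scores are computed internally by the Region Proposal Network and are not part of the default output. What the model returns instead is a confidence score for each final detection (prediction['scores']): the softmax probability the model assigns to the predicted class for that box. It plays the same filtering role in practice, and it is the score you will usually threshold.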

Let's see an example using PyTorch and torchvision.

Step 1: Install PyTorch

```bash
pip install torch torchvision pillow requests
```

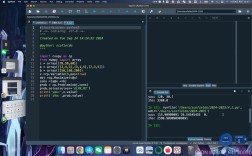

Step 2: Python Code to Detect Objects and Get Confidence Scores

This code loads a pre-trained Faster R-CNN model, runs it on an image, and prints the confidence score of each detected box.

```python
import torch
import requests
from PIL import Image
from torchvision import models, transforms

# 1. Load a pre-trained Faster R-CNN model.
# The weights are trained on COCO (80 object categories; the label indices
# follow the original 91-entry COCO id list, which includes "N/A" gaps).
weights = models.detection.FasterRCNN_ResNet50_FPN_Weights.DEFAULT
model = models.detection.fasterrcnn_resnet50_fpn(weights=weights)
model.eval()  # Set the model to evaluation mode

# Human-readable class names that ship with the weights
# (index 0 is "__background__", unused COCO ids are "N/A").
coco_classes = weights.meta["categories"]

# 2. Define a transformation for the input image.
# ToTensor() converts the PIL image to a float tensor in [0, 1].
# The detection model resizes and normalizes internally, so no
# mean/std normalization is needed here.
transform = transforms.Compose([
    transforms.ToTensor(),
])

# 3. Load an image from a URL (or open a local file instead)
url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw).convert("RGB")

# 4. Preprocess the image
image_tensor = transform(image)  # Shape: (3, H, W)

# 5. Run the model. Detection models take a list of 3D tensors, one per image.
with torch.no_grad():
    predictions = model([image_tensor])

# 6. Extract and print the results.
# 'predictions' is a list of dictionaries, one for each input image.
prediction = predictions[0]
boxes = prediction["boxes"]    # (N, 4) boxes as [x1, y1, x2, y2] in pixels
labels = prediction["labels"]  # (N,) COCO class indices
scores = prediction["scores"]  # (N,) confidence for the predicted class

# 'scores' holds the final per-detection class confidence from the second
# stage, not the RPN's internal objectness score.
# Keep only detections above a confidence threshold (e.g., 0.9).
confidence_threshold = 0.9
keep = scores >= confidence_threshold

print(f"Model returned {len(boxes)} detections; {int(keep.sum())} pass the threshold.")
print("-" * 30)

for box, label, score in zip(boxes[keep], labels[keep], scores[keep]):
    class_name = coco_classes[label.item()]
    print(f"Detected: {class_name}")
    print(f" - Bounding Box: {box.tolist()}")
    print(f" - Confidence Score: {score.item():.4f}")
    print("-" * 30)
```

Output of the code (format only: the exact number of detections, box coordinates, and scores depend on your torchvision version and weights. This COCO image shows two cats lying on a couch with two remote controls, so expect classes such as cat and remote):

```text
Model returned <N> detections; <K> pass the threshold.
------------------------------
Detected: <class name>
 - Bounding Box: [<x1>, <y1>, <x2>, <y2>]
 - Confidence Score: <score between 0.9 and 1.0>
------------------------------
...
```

As you can see, the model returns a list of candidate detections (at most 100 per image by default, already filtered by a low internal score threshold of 0.05 and by non-maximum suppression), and only a few of them clear the stricter 0.9 threshold. The objectness filtering itself happened earlier, inside the Region Proposal Network, which by default passes up to 1,000 proposals per image to the second stage at inference time.

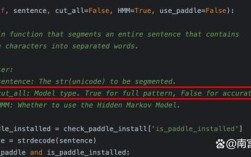

Advanced Scenario: Implementing an Objectness Head (For Model Developers)

If you are a researcher or developer building your own object detection model, you would implement the "objectness" part as a neural network layer, often called the "Objectness Head" or "Region Proposal Network (RPN) Head".

This head is typically a small convolutional neural network (CNN) that takes a feature map from the backbone network and produces two kinds of output for each anchor box at every feature-map location:

- An objectness score (the probability that an object is present).

- Bounding box regression offsets (four numbers that refine the anchor box to better fit the object).
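To make "anchor box" concrete: an anchor is a fixed reference box centred on a feature-map location, and every location gets the same set of shapes. A sketch of generating 9 anchors per location (3 scales x 3 aspect ratios) as in the original Faster R-CNN paper; the 128/256/512-pixel scales, the 0.5/1/2 ratios, and the stride of 16 are assumptions for illustration (torchvision's FPN model instead uses one scale per pyramid level).

```python
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in image pixels


def anchors_at(cx: float, cy: float,
               scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)) -> List[Box]:
    """All scale/ratio combinations centred on (cx, cy).

    Each anchor has area scale**2 and height / width == ratio.
    """
    boxes = []
    for s in scales:
        for r in ratios:
            w, h = s / math.sqrt(r), s * math.sqrt(r)
            boxes.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return boxes


def grid_anchors(feat_h: int, feat_w: int, stride: int) -> List[Box]:
    """Repeat the anchor set at every feature-map cell.

    Cell (y, x) maps to the image point (x * stride, y * stride).
    """
    return [box
            for y in range(feat_h)
            for x in range(feat_w)
            for box in anchors_at(x * stride, y * stride)]


anchors = grid_anchors(50, 50, stride=16)
print(len(anchors))  # 50 * 50 * 9 = 22500
```

The head's num_anchors output channels line up with this list: channel k at cell (y, x) scores the k-th anchor placed at that cell.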

Here's a simplified conceptual example of what this part of the code might look like using PyTorch. It covers only the objectness branch; the box-regression branch is left out.

```python
import torch
import torch.nn as nn


class SimpleObjectnessHead(nn.Module):
    """
    A simplified version of an Objectness Head (like in an RPN).
    It takes a feature map and predicts objectness scores for each anchor.
    """

    def __init__(self, in_channels, num_anchors):
        super().__init__()
        # A 1x1 convolution that maps the feature channels to one score per anchor
        self.conv = nn.Conv2d(in_channels, num_anchors, kernel_size=1, stride=1)
        # Sigmoid activation to get scores between 0 and 1
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        # x is the feature map from the backbone network
        # Shape: (batch_size, channels, height, width)

        # Pass through the convolutional layer to get raw scores (logits)
        scores = self.conv(x)

        # Apply sigmoid to get objectness probabilities
        objectness_scores = self.sigmoid(scores)

        # The output shape is (batch_size, num_anchors, height, width).
        # Each value is the objectness score for a specific anchor
        # at a specific location on the feature map.
        return objectness_scores


# --- How it might be used in a larger model ---
# Assume we have a feature map from a backbone network
batch_size = 2
in_channels = 256  # Typical for a ResNet-50 FPN
feature_map_height = 50
feature_map_width = 50
num_anchors = 9  # A common number (e.g., 3 scales x 3 aspect ratios)

# Create a dummy feature map
dummy_feature_map = torch.randn(batch_size, in_channels, feature_map_height, feature_map_width)

# Instantiate the objectness head
objectness_head = SimpleObjectnessHead(in_channels, num_anchors)

# Forward pass to get the objectness scores
final_objectness_scores = objectness_head(dummy_feature_map)

print(f"Input feature map shape: {dummy_feature_map.shape}")
print(f"Output objectness scores shape: {final_objectness_scores.shape}")
# Input feature map shape: torch.Size([2, 256, 50, 50])
# Output objectness scores shape: torch.Size([2, 9, 50, 50])

# This output would then be used in the loss function to train the model
# to distinguish between foreground (object) and background anchors.
```
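Before that loss can be computed, each anchor needs a target label. A simplified pure-Python sketch of both steps: anchors whose best IoU with a ground-truth box is at least 0.7 count as objects, those below 0.3 as background, and the rest are ignored (thresholds from the Faster R-CNN paper). It deliberately leaves out details a real RPN adds, such as forcing the best-matching anchor of each ground-truth box to be positive and sampling a balanced mini-batch of anchors. In PyTorch you would normally compute the same loss with torch.nn.functional.binary_cross_entropy_with_logits on the raw logits.

```python
import math
from typing import List, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def label_anchors(anchors: Sequence[Box], gt_boxes: Sequence[Box],
                  pos_thresh: float = 0.7, neg_thresh: float = 0.3) -> List[Optional[int]]:
    """1 = object (foreground), 0 = background, None = ignored in the loss."""
    labels: List[Optional[int]] = []
    for a in anchors:
        best = max((iou(a, g) for g in gt_boxes), default=0.0)
        if best >= pos_thresh:
            labels.append(1)
        elif best < neg_thresh:
            labels.append(0)
        else:
            labels.append(None)
    return labels


def objectness_bce(probs: Sequence[float], labels: Sequence[Optional[int]],
                   eps: float = 1e-7) -> float:
    """Mean binary cross-entropy over the anchors that are not ignored."""
    terms = []
    for p, y in zip(probs, labels):
        if y is None:
            continue
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        terms.append(-(y * math.log(p) + (1 - y) * math.log(1 - p)))
    return sum(terms) / len(terms) if terms else 0.0
```

For example, with one ground-truth box (0, 0, 10, 10), the anchors (0, 0, 10, 10), (0, 0, 10, 5), and (20, 20, 30, 30) are labelled 1, ignored (IoU 0.5), and 0 respectively.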

Summary

| Context | What "Objectness" Means | How it's used in Python |
|---|---|---|
| General Concept | A score indicating how likely a bounding box is to contain an object of any class. | A theoretical concept in computer vision. |
| End-User / Practitioner | The RPN's objectness scores stay internal; what you work with is the final per-detection confidence, which plays the same filtering role. | Read prediction['scores'] from models in torchvision.models.detection and keep boxes above a threshold. |
| Model Developer / Researcher | The output of a specific neural network head (Objectness/RPN Head) that proposes object locations. | Implemented as a custom torch.nn.Module or as part of a larger model architecture. |

Most Python users fall into the second category: using pre-built models and interpreting the confidence scores they provide.