Of course! The term itcastitem is not a standard Python library or keyword. It almost certainly refers to a custom class or object that you would create yourself, typically as part of a web scraping or data parsing project.

The name itself gives us a big clue:

- itcast: This is the name of a well-known Chinese IT training provider (传智播客, Chuanzhi Boke). It has public web pages listing its courses.

- item: This is a very common term in programming, especially in web scraping (for example, with the Scrapy framework), where it represents a single piece of scraped data, like a product, a news article, or in this case, a course.

Therefore, ItcastItem is most likely a Python class designed to hold the information scraped for a single course from the itcast website.

Let's break down what this would look like, from a simple example to a more robust, Scrapy-style implementation.

The Concept: Why Use an ItcastItem?

When you scrape a webpage, you get a lot of messy HTML. You need to extract specific pieces of information (e.g., course title, teacher, duration, price) and store them in a structured way.

An ItcastItem class is a natural fit for this. It acts as a blueprint, or container, for your data.

Benefits of using an Item class:

- Structure: It forces you to define what data you expect to collect.

- Clarity: Your code becomes more readable. Named fields such as `title` document what each record contains, which is clearer than passing around loose dictionaries whose keys are defined nowhere.

- Data Validation: You can add checks to ensure the data is correct (for example, rejecting a course that has no title).

- Compatibility: It integrates with the item pipelines and export tools of frameworks like Scrapy.
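As a minimal sketch of the Data Validation point, here is a plain-Python variant that cleans and checks its inputs in `__init__`. The class name `ValidatedItcastItem` and the rule that only `title` is mandatory are illustrative assumptions, not part of any itcast or Scrapy API.

```python
class ValidatedItcastItem:
    """Hypothetical ItcastItem variant that validates its data on creation."""

    def __init__(self, title, teacher, duration):
        # Assumed rule: a course without a title is useless, so reject it.
        # `or ""` tolerates None, which extraction code returns for missing tags.
        title = (title or "").strip()
        if not title:
            raise ValueError("a course item needs a non-empty title")
        self.title = title
        # The other fields are optional here; just strip leftover whitespace.
        self.teacher = (teacher or "").strip()
        self.duration = (duration or "").strip()


course = ValidatedItcastItem("  Python Basics\n", "Alex", None)
print(repr(course.title), repr(course.duration))  # 'Python Basics' ''

try:
    ValidatedItcastItem("   ", "Alex", "6 months")
except ValueError as exc:
    print("Rejected:", exc)  # Rejected: a course item needs a non-empty title
```

Failing loudly at construction time means a bad record is caught where it was scraped, rather than surfacing later as a blank row in your output file.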

A Simple, Pure Python Implementation

You don't need a complex framework to create an item class. A simple Python class is often enough.

Let's imagine we want to scrape course information from a hypothetical page. We want to get:

- `title`: The name of the course.

- `teacher`: The name of the instructor.

- `duration`: How long the course is.

```python
# itcast_item.py


class ItcastItem:
    """
    A simple class to represent a single course item scraped from itcast.cn.
    """

    def __init__(self, title, teacher, duration):
        self.title = title
        self.teacher = teacher
        self.duration = duration

    def __repr__(self):
        """Provide a developer-friendly string representation of the object."""
        return f"ItcastItem(title='{self.title}', teacher='{self.teacher}', duration='{self.duration}')"


# --- Example Usage ---

# Imagine this data was extracted from a web page using a library like BeautifulSoup
course_data = {
    'title': 'Python入门到精通',  # "Python: From Beginner to Expert"
    'teacher': 'Alex',
    'duration': '6个月',  # "6 months"
}

# Create an instance of our ItcastItem
python_course = ItcastItem(
    title=course_data['title'],
    teacher=course_data['teacher'],
    duration=course_data['duration'],
)

# Now you have a clean, structured object
print(python_course.title)
# Output: Python入门到精通

print(python_course)
# Output: ItcastItem(title='Python入门到精通', teacher='Alex', duration='6个月')
```
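If you want the same container with less boilerplate, the standard-library `dataclasses` module can generate `__init__` and `__repr__` for you. This is a sketch of an alternative to the hand-written class, not something itcast or Scrapy requires; pairing `asdict()` with `json.dumps()` is one straightforward way to save such items.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ItcastItem:
    """Same three fields as the hand-written class; dataclass writes __init__ and __repr__."""

    title: str
    teacher: str
    duration: str


python_course = ItcastItem(title="Python入门到精通", teacher="Alex", duration="6个月")
print(python_course)
# ItcastItem(title='Python入门到精通', teacher='Alex', duration='6个月')

# asdict() turns the item into a plain dict, ready for JSON export.
# ensure_ascii=False keeps the Chinese text readable instead of \u escapes.
print(json.dumps(asdict(python_course), ensure_ascii=False))
# {"title": "Python入门到精通", "teacher": "Alex", "duration": "6个月"}
```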

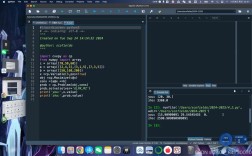

The "Scrapy Way" (More Common & Powerful)

In the world of web scraping, Scrapy is one of the most widely used Python frameworks. It provides a built-in `scrapy.Item` base class designed to work with its item pipelines and data exports.

This is the most likely meaning of itcastitem in a real-world project.

Step 1: Define the Item in Scrapy

First, you'd create a Scrapy project (for example, with `scrapy startproject itcast_crawler`) and define your item in the project's items.py file.

```python
# itcast_crawler/items.py

import scrapy


class ItcastItem(scrapy.Item):
    """
    This is the Scrapy Item for itcast courses.

    Scrapy Items offer a dictionary-like API, but only accept the fields declared below.
    """

    # Define the fields for your item
    title = scrapy.Field()
    teacher = scrapy.Field()
    duration = scrapy.Field()

    # You can add more fields as needed
    # students_count = scrapy.Field()
```

- `scrapy.Item`: We inherit from Scrapy's base `Item` class.

- `scrapy.Field()`: Each field is declared with `scrapy.Field()`. This doesn't enforce a specific data type, but it tells Scrapy the structure of your item, and assigning a value to an undeclared field raises a `KeyError`.

Step 2: Use the Item in a Spider

Your spider would parse the HTML and populate this ItcastItem.

```python
# itcast_crawler/spiders/itcast_spider.py

import scrapy

from itcast_crawler.items import ItcastItem  # Import the item you defined


class ItcastSpider(scrapy.Spider):
    name = 'itcast'
    allowed_domains = ['itcast.cn']
    # Note: The actual URL might be different, this is an example
    start_urls = ['http://www.itcast.cn/channel/teacher.shtml']

    def parse(self, response):
        """
        Parse the teacher page and extract course information.
        """
        # Select all the course boxes on the page.
        # The selectors are hypothetical; check them against the real markup.
        courses = response.css('div.con_list li')

        for course in courses:
            # Create an instance of our ItcastItem
            item = ItcastItem()

            # Extract data with CSS selectors. get(default='') avoids calling
            # .strip() on None when an element is missing.
            item['title'] = course.css('h3::text').get(default='').strip()
            item['teacher'] = course.css('h4::text').get(default='').strip()
            item['duration'] = course.css('span::text').get(default='').strip()

            # Yield the item. This passes it to the item pipelines for processing.
            yield item
```

Step 3: Process the Item in a Pipeline

Finally, you can define a pipeline to save this data to a file, a database, etc.

```python
# itcast_crawler/pipelines.py

import json


class ItcastPipeline:
    def open_spider(self, spider):
        """Called when the spider is opened."""
        self.file = open('itcast_courses.json', 'w', encoding='utf-8')
        # Write the opening bracket for the JSON array
        self.file.write('[\n')
        self.first_item = True

    def close_spider(self, spider):
        """Called when the spider is closed."""
        # Write the closing bracket for the JSON array
        self.file.write('\n]\n')
        self.file.close()
        # Pipelines have no logger of their own; use the spider's.
        spider.logger.info("Spider closed and file saved.")

    def process_item(self, item, spider):
        """Called for each item scraped by the spider."""
        # Separate items with commas, but never leave a trailing comma,
        # so the file stays valid JSON.
        if not self.first_item:
            self.file.write(',\n')
        self.first_item = False
        # json.dumps produces real JSON (str(dict(item)) would give a Python repr).
        # ensure_ascii=False keeps Chinese text readable in the file.
        self.file.write(json.dumps(dict(item), ensure_ascii=False))
        return item  # Must return the item so later pipelines receive it
```

Summary

| Aspect | Description |
|---|---|
| What is `ItcastItem`? | It is not built into Python. It is a custom class you create to store structured data, likely scraped from the itcast.cn website. |
| Why use it? | To move from raw, messy HTML data to clean, structured, and manageable Python objects. |
| Simple Version | A plain Python class with an `__init__` method to hold attributes like `title`, `teacher`, etc. |
| Scrapy Version | A class that inherits from `scrapy.Item`, using `scrapy.Field()` to declare data fields. This is the usual approach in Scrapy projects. |
| How it fits in | A Spider extracts data from a page and puts it into an `ItcastItem`. The `ItcastItem` is then passed to a Pipeline to be saved (e.g., to a JSON file or database). |