Of course! Let's dive into Python and TensorFlow. This is a powerful combination for building and training machine learning models, especially deep neural networks.

I'll structure this guide from the ground up, covering:

- What is TensorFlow? (The "Why")

- Core Concepts: The fundamental building blocks.

- Installation: How to get it on your machine.

- Your First TensorFlow Program: A simple "Hello, World!" equivalent.

- Building a Neural Network: A complete, practical example for image classification.

- Key Tools in the TensorFlow Ecosystem: Keras, TensorFlow Hub, etc.

- Next Steps & Best Practices.

What is TensorFlow?

TensorFlow is an open-source, end-to-end machine learning platform developed by Google.

Think of it as a massive toolkit for doing anything related to machine learning:

- Numerical Computation: At its heart, it's a powerful library for performing complex mathematical operations, especially on large arrays of data (called tensors).

- Automatic Differentiation: This is its superpower. When you build a model, TensorFlow can automatically calculate the gradients (the derivatives) of the model's error with respect to its internal parameters (weights and biases). This is the core of how models "learn" through a process called backpropagation.

- GPU/TPU Acceleration: It's designed to run efficiently on specialized hardware like GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units), which can perform the large, highly parallel matrix operations at the heart of deep learning far faster than a standard CPU. This is crucial for training large models.

- Deployment: It provides tools to deploy your trained models on servers, mobile devices (Android, iOS), and even in web browsers.

Analogy: If you think of building a machine learning model like building a car, TensorFlow is the entire factory: it provides the assembly line, the robotic arms (for computation), the quality control system (automatic differentiation), and the shipping department (deployment tools).
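Automatic differentiation is exposed in TensorFlow through `tf.GradientTape`. The sketch below uses a toy loss `(w - 3)^2`, chosen purely for illustration: the tape records the computation, `tape.gradient` returns d(loss)/dw, and a plain gradient-descent update moves `w` toward the minimum at 3. Real models do the same for every weight and bias, usually through an optimizer such as Adam rather than a hand-written update.

```python
import tensorflow as tf

# A trainable variable standing in for a single model weight.
w = tf.Variable(0.0)

# Record the computation so TensorFlow can differentiate it.
with tf.GradientTape() as tape:
    loss = (w - 3.0) ** 2  # toy loss, minimized at w = 3 (illustrative only)

# d(loss)/dw = 2 * (w - 3), which is -6.0 at w = 0.
first_grad = tape.gradient(loss, w).numpy()
print("gradient at w=0:", first_grad)  # gradient at w=0: -6.0

# Repeat forward pass + gradient, applying plain gradient descent each time.
learning_rate = 0.1
for step in range(100):
    with tf.GradientTape() as tape:
        loss = (w - 3.0) ** 2
    grad = tape.gradient(loss, w)
    w.assign_sub(learning_rate * grad)  # move w against the gradient

print("learned w:", w.numpy())  # close to 3.0
```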

Core Concepts

Before writing code, you need to understand a few key terms:

- Tensor: The central data unit in TensorFlow. It's a multi-dimensional array, similar to a NumPy ndarray. A 0D tensor is a scalar, a 1D tensor is a vector, a 2D tensor is a matrix, and so on.

- TensorFlow Graph: TensorFlow can compile your operations into a computational graph. Instead of executing operations one at a time like standard Python, it traces the entire series of operations first and then runs the graph in an optimized manner. In TensorFlow 2, you opt into this with the tf.function decorator. This graph-based approach is key to its performance.

- Eager Execution (Default in TF2): This is the modern way of working. It allows you to run operations immediately and inspect results, just like regular Python. It makes TensorFlow much more intuitive and easier to debug, while still allowing you to build graphs for performance when needed.

- tf.data.Dataset: The high-performance API for building input pipelines. It efficiently loads and preprocesses data from various sources (files, databases, etc.) and feeds it to your model.

- Keras: Keras is TensorFlow's high-level API for building and training models. It provides a simple, intuitive, and highly productive way to define neural network layers and connect them. In modern TensorFlow, tf.keras is the official and recommended way to build models.
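Here is a small sketch of the tensor, eager, and graph concepts side by side: tensors of different ranks, an operation that runs eagerly, and a similar computation compiled into a graph with `tf.function`. The function name `scaled_sum` is illustrative only.

```python
import tensorflow as tf

# Tensors of increasing rank (number of dimensions).
scalar = tf.constant(3.0)                       # 0D: shape ()
vector = tf.constant([1.0, 2.0, 3.0])           # 1D: shape (3,)
matrix = tf.constant([[1.0, 2.0], [3.0, 4.0]])  # 2D: shape (2, 2)
ranks = [t.shape.rank for t in (scalar, vector, matrix)]
print("ranks:", ranks)  # ranks: [0, 1, 2]

# Eager execution: the op runs immediately and the result can be inspected.
eager_sum = tf.reduce_sum(vector)
print("eager result:", eager_sum.numpy())  # eager result: 6.0

# Graph execution: tf.function traces this Python function into a graph on
# the first call and reuses that graph for later calls with the same input
# signature.
@tf.function
def scaled_sum(x, y):
    return tf.reduce_sum(x * 2.0 + y)

graph_sum = scaled_sum(vector, vector)
print("graph result:", graph_sum.numpy())  # graph result: 18.0
```

The call site looks the same in both cases; the decorator only changes how TensorFlow executes the function, which is why eager code is easy to debug first and speed up later.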

Installation

The easiest way to install TensorFlow is using pip. It's highly recommended to do this inside a Python virtual environment to manage dependencies.

# Create a virtual environment (optional but good practice)
python -m venv tf-env
source tf-env/bin/activate  # On Windows: tf-env\Scripts\activate

# Install TensorFlow
pip install tensorflow

On Linux, the same tensorflow package can also use an NVIDIA GPU if compatible NVIDIA drivers and CUDA libraries are available. The exact requirements differ by platform and TensorFlow version, so check the installation instructions on the official TensorFlow website.

To verify your installation, run this in a Python interpreter:

import tensorflow as tf

print("TensorFlow version:", tf.__version__)
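If you set up GPU support, you can also check whether TensorFlow actually sees the GPU. A minimal sketch using `tf.config.list_physical_devices`; an empty list simply means TensorFlow will fall back to the CPU.

```python
import tensorflow as tf

print("TensorFlow version:", tf.__version__)

# List the GPUs TensorFlow can see; an empty list means it will run on the CPU.
gpus = tf.config.list_physical_devices("GPU")
if gpus:
    for gpu in gpus:
        print("Found GPU:", gpu.name)
else:
    print("No GPU found; TensorFlow will use the CPU.")
```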

Your First TensorFlow Program: Constant Tensors

This is the "Hello, World!" of TensorFlow. It demonstrates the basic idea of creating tensors and performing operations.

import tensorflow as tf

# Create two constant tensors

a = tf.constant(5)

b = tf.constant(10)

# Perform an addition operation

c = tf.add(a, b)

# In eager execution (the default in TF2), tensors can be printed directly,
# and .numpy() converts a tensor to a NumPy value. (TF1 required running a session.)

print("Tensor 'a':", a)

print("Tensor 'b':", b)

print("Result of a + b:", c)

print("Value of result as a NumPy array:", c.numpy())

Output:

Tensor 'a': tf.Tensor(5, shape=(), dtype=int32)

Tensor 'b': tf.Tensor(10, shape=(), dtype=int32)

Result of a + b: tf.Tensor(15, shape=(), dtype=int32)

Value of result as a NumPy array: 15

Building a Neural Network: A Practical Example

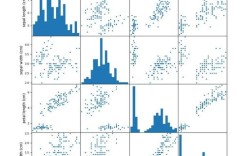

Let's build a simple neural network to classify images of clothing from the Fashion MNIST dataset. This is a classic "getting started" problem.

Step 1: Import Libraries and Load Data

import tensorflow as tf

from tensorflow import keras

import numpy as np

import matplotlib.pyplot as plt

# Load the Fashion MNIST dataset

fashion_mnist = keras.datasets.fashion_mnist

(train_images, train_labels), (test_images, test_labels) = fashion_mnist.load_data()

# Class names for the labels

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',

'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

# Let's look at the data shape

print("Training data shape:", train_images.shape) # (60000, 28, 28)

print("Number of training labels:", len(train_labels))

print("Test data shape:", test_images.shape) # (10000, 28, 28)

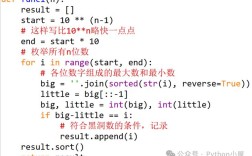

Step 2: Preprocess the Data

The pixel values of the images are integers from 0 to 255. We need to scale them to a range of 0 to 1 for the neural network to train effectively.

# Scale the pixel values to a range of 0 to 1
train_images = train_images / 255.0
test_images = test_images / 255.0

# Let's visualize one of the images to see what it looks like
plt.figure()
plt.imshow(train_images[0], cmap=plt.cm.binary)
plt.colorbar()
plt.grid(False)
plt.xlabel(class_names[train_labels[0]])
plt.show()
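A quick check of what the scaling does, on a hypothetical 1x3 array of pixel values: dividing a uint8 array by 255.0 maps 0..255 onto 0..1 but also produces float64 in NumPy. Keras models compute in float32 by default, so casting to float32 first is an optional tweak that halves the memory the arrays use.

```python
import numpy as np

# Hypothetical pixel values; Fashion MNIST images are uint8 arrays like this.
pixels = np.array([[0, 51, 255]], dtype=np.uint8)

# Plain division: values now lie in [0, 1], stored as float64.
scaled = pixels / 255.0
print(scaled.dtype)  # float64

# Casting first keeps the result in float32.
scaled32 = pixels.astype(np.float32) / 255.0
print(scaled32.dtype)  # float32
```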

Step 3: Build the Model

We'll use a tf.keras.Sequential model, which is a simple stack of layers.

- Flatten: This layer transforms the 2D image (28x28 pixels) into a 1D array (784 pixels). It doesn't have any learnable parameters.

- Dense: A fully connected neural network layer.

  - The first Dense layer has 128 neurons (or nodes) and uses the relu activation function.

  - The second (and last) Dense layer has 10 neurons, one for each class. It uses the softmax activation function to return an array of 10 probability scores that sum to 1.

model = keras.Sequential([

keras.layers.Flatten(input_shape=(28, 28)), # Input layer

keras.layers.Dense(128, activation='relu'), # Hidden layer

keras.layers.Dense(10, activation='softmax') # Output layer

])
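The layer sizes above determine how many trainable parameters the model has. A Dense layer has one weight per input-unit pair plus one bias per unit, and Flatten has none, so the count can be worked out by hand. `dense_params` is a hypothetical helper; calling `model.summary()` on the model above should report the same total.

```python
def dense_params(num_inputs: int, units: int) -> int:
    """Parameters in a Dense layer: a weight matrix plus one bias per unit."""
    return num_inputs * units + units

flatten_params = 0                          # Flatten only reshapes; nothing to learn
hidden_params = dense_params(28 * 28, 128)  # 784 * 128 + 128
output_params = dense_params(128, 10)       # 128 * 10 + 10
total_params = flatten_params + hidden_params + output_params

print(hidden_params)  # 100480
print(output_params)  # 1290
print(total_params)   # 101770
```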

Step 4: Compile the Model

Before the model is ready for training, it needs a few more settings. These are added during the model's compile step:

- Optimizer: This is how the model updates itself based on the data it sees and its loss function. adam is a popular and effective choice.

- Loss Function: This measures how far the model's predictions are from the true labels during training. We want to minimize this function. SparseCategoricalCrossentropy is used for multi-class classification when the labels are integers.

- Metrics: Used to monitor the training and testing steps. Here, we'll just use accuracy.

model.compile(optimizer='adam',

loss=tf.keras.losses.SparseCategoricalCrossentropy(),

metrics=['accuracy'])
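To make the loss less abstract, here is a NumPy sketch of what sparse categorical cross-entropy computes for hypothetical softmax outputs: take the probability the model assigned to the true class, take its negative log, and average over the batch. Because the model's last layer already applies softmax, the default `from_logits=False` is the right setting here. Keras also clips probabilities internally to avoid `log(0)`, so its values can differ very slightly from this sketch.

```python
import numpy as np

# Hypothetical softmax outputs for 2 examples over 3 classes (rows sum to 1).
probs = np.array([[0.1, 0.7, 0.2],
                  [0.3, 0.3, 0.4]])
# Integer class labels ("sparse" labels, not one-hot), as in Fashion MNIST.
labels = np.array([1, 2])

# Pick the probability assigned to each example's true class.
true_class_probs = probs[np.arange(len(labels)), labels]
# Negative log-likelihood per example, then the mean over the batch.
per_example_loss = -np.log(true_class_probs)
loss = per_example_loss.mean()
print(round(loss, 4))  # 0.6365
```

The confident, correct first example (0.7 on the true class) contributes a smaller loss than the second one (0.4), which is exactly the pressure that pushes training toward confident correct predictions.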

Step 5: Train the Model

Training the neural network is done by calling the model.fit method. We "fit" the model to the training data.

# Train the model

print("\n--- Training the model ---")

model.fit(train_images, train_labels, epochs=10)

An epoch is one full pass through the entire training dataset. You should see the loss decrease and the accuracy increase over the epochs.
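Within each epoch, model.fit processes the data in mini-batches and updates the weights once per batch. The fit call above doesn't set a batch size, so Keras uses its default of 32; assuming that default, the arithmetic works out as below, which is why the training progress bar should show 1875 steps per epoch.

```python
import math

num_train_images = 60000  # from train_images.shape above
batch_size = 32           # Keras's default when model.fit() is given no batch_size
epochs = 10               # as in the fit() call above

# One weight update per mini-batch; the last batch may be partial.
steps_per_epoch = math.ceil(num_train_images / batch_size)
total_steps = steps_per_epoch * epochs
print(steps_per_epoch, total_steps)  # 1875 18750
```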

Step 6: Evaluate Accuracy

Now, we check how the model performs on the test dataset, which it has never seen before.

# Evaluate the model on the test data

print("\n--- Evaluating the model ---")

test_loss, test_acc = model.evaluate(test_images, test_labels, verbose=2)

print('\nTest accuracy:', test_acc)

You'll usually notice that the test accuracy is a bit lower than the training accuracy. A gap like this is a sign of overfitting: the model fits the training data more closely than it generalizes to new data.
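To watch for overfitting during training rather than only at the end, you can hold out part of the training set with `validation_split` and compare training and validation accuracy per epoch; adding a `Dropout` layer is one common way to narrow the gap. The sketch below uses random stand-in arrays (hypothetical, with no real signal) so it runs quickly; with the real data you would pass train_images and train_labels instead. The dropout rate of 0.2 and `epochs=3` are illustrative choices.

```python
import numpy as np
import tensorflow as tf
from tensorflow import keras

# Hypothetical stand-in data shaped like Fashion MNIST (random values).
rng = np.random.default_rng(0)
x = rng.random((512, 28, 28), dtype=np.float32)
y = rng.integers(0, 10, size=512)

model = keras.Sequential([
    keras.Input(shape=(28, 28)),
    keras.layers.Flatten(),
    keras.layers.Dense(128, activation="relu"),
    keras.layers.Dropout(0.2),  # zeroes 20% of activations, during training only
    keras.layers.Dense(10, activation="softmax"),
])
model.compile(optimizer="adam",
              loss=keras.losses.SparseCategoricalCrossentropy(),
              metrics=["accuracy"])

# Hold out the last 10% of the samples to measure generalization each epoch.
history = model.fit(x, y, epochs=3, validation_split=0.1, verbose=0)

for epoch, (acc, val_acc) in enumerate(
        zip(history.history["accuracy"], history.history["val_accuracy"]), start=1):
    print(f"epoch {epoch}: train acc {acc:.3f}, val acc {val_acc:.3f}")
```

If training accuracy keeps rising while validation accuracy stalls or drops, the model is starting to overfit.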

Step 7: Make Predictions

Finally, we can use our trained model to make predictions on some images.

# Make predictions

predictions = model.predict(test_images)

# A prediction is an array of 10 numbers. They represent the model's "confidence"

# that the image corresponds to each of the 10 articles of clothing.

print("\n--- Prediction for the first test image ---")

print("Prediction array:", predictions[0])

print("Predicted label:", np.argmax(predictions[0]))

print("True label:", test_labels[0])

# Let's visualize the prediction

# Show test image i with its predicted class, confidence, and true class
# (blue label if the prediction is correct, red if it's wrong).
def plot_image(i, predictions_array, true_label, img):
    true_label, img = true_label[i], img[i]
    plt.grid(False)
    plt.xticks([])
    plt.yticks([])
    plt.imshow(img, cmap=plt.cm.binary)
    predicted_label = np.argmax(predictions_array)
    if predicted_label == true_label:
        color = 'blue'
    else:
        color = 'red'
    plt.xlabel("{} {:2.0f}% ({})".format(class_names[predicted_label],
                                         100*np.max(predictions_array),
                                         class_names[true_label]),
               color=color)

# Bar chart of the 10 class probabilities for image i
# (predicted class in red, true class in blue).
def plot_value_array(i, predictions_array, true_label):
    true_label = true_label[i]
    plt.grid(False)
    plt.xticks(range(10))
    plt.yticks([])
    thisplot = plt.bar(range(10), predictions_array, color="#777777")
    plt.ylim([0, 1])
    predicted_label = np.argmax(predictions_array)
    thisplot[predicted_label].set_color('red')
    thisplot[true_label].set_color('blue')

# Plot the first X test images, their predicted labels, and the true labels.

# Color correct predictions in blue and incorrect predictions in red.

num_rows = 5

num_cols = 3

num_images = num_rows*num_cols

plt.figure(figsize=(2*2*num_cols, 2*num_rows))

for i in range(num_images):
    plt.subplot(num_rows, 2*num_cols, 2*i+1)
    plot_image(i, predictions[i], test_labels, test_images)
    plt.subplot(num_rows, 2*num_cols, 2*i+2)
    plot_value_array(i, predictions[i], test_labels)

plt.tight_layout()

plt.show()
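Each row of predictions comes from the softmax in the output layer, which turns the last layer's raw scores (logits) into probabilities that sum to 1; np.argmax then picks the most likely class. A NumPy sketch of that step, using hypothetical scores for three classes:

```python
import numpy as np

def softmax(logits: np.ndarray) -> np.ndarray:
    # Subtracting the max leaves the result unchanged but avoids overflow in exp().
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)

logits = np.array([2.0, 1.0, 0.1])  # hypothetical raw scores
probs = softmax(logits)

print(probs.round(3))
print("sum:", probs.sum())
print("predicted class:", np.argmax(probs))  # predicted class: 0
```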

Key Tools in the TensorFlow Ecosystem

- Keras (tf.keras): The high-level API for building models. It's user-friendly and flexible.

- TensorFlow Hub (tfhub.dev): A repository of pre-trained models. You can download a model trained on a massive dataset (like ImageNet) and use it for your own tasks, either as is or by fine-tuning it. This is a huge time-saver.

- TensorFlow Lite: Used for deploying TensorFlow models on mobile and embedded devices (Android, iOS, Raspberry Pi).

- TensorFlow.js: Used for running TensorFlow models in the browser or in Node.js environments.

- TensorFlow Extended (TFX): A production-grade MLOps platform for building and managing end-to-end ML pipelines at scale.
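These deployment tools generally start from a trained model saved to disk. A minimal sketch, assuming a recent TensorFlow/Keras version that supports Keras's native `.keras` file format; the file name is hypothetical, and converting the saved model for TensorFlow Lite or TensorFlow.js is a separate step described in those tools' own guides.

```python
import numpy as np
from tensorflow import keras

# Same architecture as the Fashion MNIST example (untrained here).
model = keras.Sequential([
    keras.Input(shape=(28, 28)),
    keras.layers.Flatten(),
    keras.layers.Dense(128, activation="relu"),
    keras.layers.Dense(10, activation="softmax"),
])
model.compile(optimizer="adam",
              loss=keras.losses.SparseCategoricalCrossentropy(),
              metrics=["accuracy"])

# Save architecture and weights in Keras's native format (hypothetical file name).
model.save("fashion_mnist.keras")

# Reload later, e.g. in a serving process, and confirm it predicts the same.
restored = keras.models.load_model("fashion_mnist.keras")
sample = np.zeros((1, 28, 28), dtype=np.float32)
same = np.allclose(model.predict(sample, verbose=0),
                   restored.predict(sample, verbose=0))
print("Predictions match after reload:", same)
```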

Next Steps & Best Practices

- Start with Tutorials: The official TensorFlow Tutorials are excellent and cover a wide range of topics, from beginner to advanced.

- Experiment with Datasets: Try other datasets like CIFAR-10, CIFAR-100, or any dataset from Kaggle.

- Learn to use tf.data: For larger datasets, learning to build efficient input pipelines with tf.data.Dataset is crucial.

- Understand Overfitting: Learn techniques to combat it, such as Dropout and Data Augmentation.

- Explore Architectures: Once you're comfortable with the basics, explore more complex models like Convolutional Neural Networks (CNNs) for image data and Recurrent Neural Networks (RNNs) for sequential data. Keras makes these easy to implement.
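As a starting point for tf.data, here is a minimal input pipeline sketch over in-memory arrays. The random arrays are hypothetical stand-ins shaped like the scaled Fashion MNIST images; the shuffle buffer and batch size are illustrative values.

```python
import numpy as np
import tensorflow as tf

# Hypothetical stand-in arrays shaped like the scaled Fashion MNIST data.
images = np.random.rand(1000, 28, 28).astype("float32")
labels = np.random.randint(0, 10, size=1000)

dataset = (
    tf.data.Dataset.from_tensor_slices((images, labels))  # one element per (image, label)
    .shuffle(buffer_size=1000)   # shuffle examples (reshuffled each epoch by default)
    .batch(32)                   # group examples into mini-batches
    .prefetch(tf.data.AUTOTUNE)  # prepare the next batch while the current one trains
)

for batch_images, batch_labels in dataset.take(1):
    print(batch_images.shape, batch_labels.shape)  # (32, 28, 28) (32,)
```

A Keras model accepts such a dataset directly, e.g. model.fit(dataset, epochs=10), with batching handled by the pipeline instead of by fit.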