Of course! The DataLoader is one of the most fundamental and important classes in the PyTorch ecosystem. It's the workhorse that feeds data to your neural network for training and evaluation.

Let's break it down, from the basics to best practices.

What is a DataLoader and Why Do We Need It?

Imagine you have a massive dataset of images and labels. You can't possibly load all of them into your computer's memory at once. Even if you could, feeding them all to your neural network at once would be incredibly inefficient and might not even fit on your GPU.

This is where the DataLoader comes in. It solves several key problems:

- Batching: It groups your data into small batches. Training on batches is more efficient and leads to better, more stable convergence than "stochastic" (one sample at a time) or "full-batch" (all samples at once) training.

- Shuffling: For training, it shuffles the data at the beginning of each epoch. This is crucial to prevent the model from learning the order of the training data, which can introduce bias.

- Parallel Data Loading: It can load data in parallel using multiple worker processes, which dramatically speeds up the data loading process, especially when your data preprocessing is complex (e.g., image decoding and augmentation).

- Memory Management: It only loads one batch at a time into memory, making it possible to work with datasets much larger than your available RAM.

The Core Components

To use a DataLoader, you first need two things:

- A Dataset: This is an object that accesses your data. It must implement at least two methods:

__len__(): Returns the total number of items in the dataset.__getitem__(idx): Returns the item at the given indexidx. This is where you load the actual data (e.g., read an image file, read a row from a CSV) and perform any necessary transformations (e.g., convert to a tensor, resize an image).

PyTorch provides several pre-built datasets (like torchvision.datasets.CIFAR10, torchtext.datasets.IMDB), but you'll often create your own by subclassing torch.utils.data.Dataset.

- A DataLoader: This is the object that wraps your dataset and provides the batching, shuffling, and parallel loading logic.

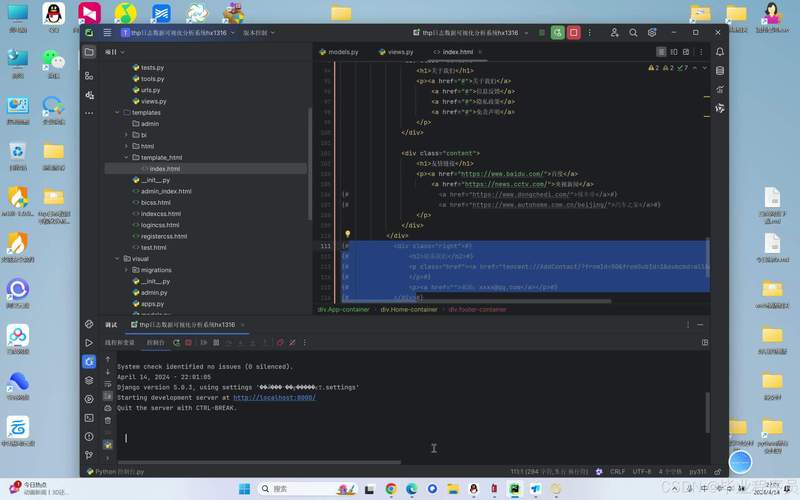

A Simple, Complete Example

Let's walk through creating a custom Dataset and then using a DataLoader with it.

Step 1: Create a Custom Dataset

We'll create a dummy dataset of sine waves. Each "sample" will be a segment of a sine wave, and the "label" will be the cosine of that same segment.

import torch

from torch.utils.data import Dataset, DataLoader

import numpy as np

# 1. Define a custom Dataset

class SineWaveDataset(Dataset):

"""

A custom PyTorch Dataset for generating sine and cosine waves.

"""

def __init__(self, num_samples=1000, length=100):

"""

Args:

num_samples (int): Total number of samples to generate.

length (int): The length of each sine wave sequence.

"""

self.num_samples = num_samples

self.length = length

# Generate all the data upfront for this simple example

self.x_data = torch.linspace(0, 4 * np.pi, num_samples * length).reshape(num_samples, length)

def __len__(self):

"""Returns the total number of samples in the dataset."""

return self.num_samples

def __getitem__(self, idx):

"""

Generates one sample of data.

The input is a sine wave, the target is the corresponding cosine wave.

"""

# Get the sine wave for the given index

sine_wave = torch.sin(self.x_data[idx])

# The target is the cosine of the same x values

cosine_wave = torch.cos(self.x_data[idx])

return sine_wave, cosine_wave

# 2. Instantiate the Dataset

# We'll create a dataset with 1000 samples, each 100 points long

train_dataset = SineWaveDataset(num_samples=1000, length=100)

print(f"Dataset size: {len(train_dataset)}")

# Get one sample

sample, target = train_dataset[0]

print(f"Shape of a single sample: {sample.shape}")

print(f"Shape of a single target: {target.shape}")

Step 2: Create and Use the DataLoader

Now, let's wrap our train_dataset in a DataLoader.

# 3. Instantiate the DataLoader

# We'll use a batch size of 32 and shuffle the data

train_dataloader = DataLoader(

dataset=train_dataset,

batch_size=32,

shuffle=True,

num_workers=4 # Use 4 subprocesses to load data in parallel

)

# 4. Iterate over the DataLoader

# This is how you would use it in a training loop

print("\n--- Iterating through the DataLoader ---")

for batch_idx, (data, targets) in enumerate(train_dataloader):

# 'data' and 'targets' are batches of samples and labels

print(f"Batch {batch_idx}:")

print(f" Data shape: {data.shape}") # Should be [batch_size, sequence_length]

print(f" Targets shape: {targets.shape}") # Should be [batch_size, sequence_length]

# In a real training loop, you would do:

# 1. Forward pass: outputs = model(data)

# 2. Calculate loss: loss = loss_function(outputs, targets)

# 3. Backward pass: loss.backward()

# 4. Optimizer step: optimizer.step()

# Let's just look at the first two batches

if batch_idx == 1:

break

Key DataLoader Arguments Explained:

dataset: TheDatasetobject to load data from. (Required)batch_size: The number of samples per batch. (Required)shuffle: IfTrue, data will be shuffled at the beginning of each epoch. Crucial for training, should beFalsefor validation/testing.num_workers: How many subprocesses to use for data loading.0means the data will be loaded in the main process. A value > 0 (e.g., 4, 8) enables parallel loading, which is a huge performance boost for I/O-bound tasks like reading images from disk. Start with 0 and increase if you see your GPU is idle waiting for data.pin_memory: IfTrue, the data loader will copy Tensors into CUDA pinned memory before returning them. This speeds up the transfer of data from CPU to GPU. Set this toTrueif you are training on a GPU.drop_last: IfTrueand the dataset size is not divisible by the batch size, the last incomplete batch will be dropped. This is useful to ensure all batches have the same size.

Best Practices and Common Patterns

Separate Datasets for Training and Validation

You should always have a separate validation (or test) set to evaluate your model. You typically do not shuffle the validation set.

# Create a validation dataset (usually smaller)

val_dataset = SineWaveDataset(num_samples=200, length=100)

# Create a DataLoader for validation (no shuffling!)

val_dataloader = DataLoader(

dataset=val_dataset,

batch_size=32,

shuffle=False, # No shuffling for validation!

num_workers=4

)

Using torchvision for Image Data

For image datasets, torchvision is your best friend. It provides standard datasets and, more importantly, transforms for augmenting your data on the fly.

import torchvision

from torchvision import transforms

# Define a series of transformations to apply to each image

# These are applied in the __getitem__ method of the dataset

transform = transforms.Compose([

transforms.Resize((256, 256)),

transforms.RandomHorizontalFlip(), # Data augmentation

transforms.ToTensor(), # Convert PIL Image to PyTorch Tensor

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]) # Normalize

])

# Load a standard dataset with the transform

train_cifar10 = torchvision.datasets.CIFAR10(

root='./data',

train=True,

download=True,

transform=transform

)

# Create the DataLoader

train_loader_cifar10 = DataLoader(

dataset=train_cifar10,

batch_size=64,

shuffle=True,

num_workers=2

)

Using Samplers for Advanced Shuffling

The shuffle argument is a convenient shortcut. Under the hood, it uses a RandomSampler. If you need more control, you can pass a Sampler object directly.

RandomSampler: Samples elements randomly (used whenshuffle=True).SequentialSampler: Samples elements sequentially (used whenshuffle=False).SubsetRandomSampler: Samples from a given list of indices randomly. Useful for creating validation splits from a single large dataset.

from torch.utils.data import SubsetRandomSampler # Let's say we have a big dataset full_dataset = SineWaveDataset(num_samples=10000, length=100) # Create indices for training and validation num_train = 8000 indices = list(range(len(full_dataset))) np.random.shuffle(indices) train_indices, val_indices = indices[:num_train], indices[num_train:] # Create Samplers train_sampler = SubsetRandomSampler(train_indices) val_sampler = SubsetRandomSampler(val_indices) # Create DataLoaders with the samplers train_loader = DataLoader(full_dataset, batch_size=32, sampler=train_sampler) val_loader = DataLoader(full_dataset, batch_size=32, sampler=val_sampler)

Summary

| Component | Role | Key Methods |

|---|---|---|

Dataset |

Stores and provides access to the raw data. | __len__(), __getitem__(idx) |

DataLoader |

Wraps a Dataset to provide batches, shuffling, and parallel loading. |

(Iterator) |

The typical workflow is:

- Prepare your data (files, folders, etc.).

- Create a

Datasetclass that knows how to load and transform one item of data. - Instantiate your

Dataset. - Wrap it in a

DataLoader, configuringbatch_size,shuffle, andnum_workers. - Iterate through the

DataLoaderin your training and evaluation loops.