Of course! Here is a comprehensive guide on using Conditional Random Fields (CRF) for Natural Language Processing (NLP) tasks in a Windows environment with Python.

This guide will cover:

- What is a CRF? (A simple, non-technical explanation)

- Why use Python for CRFs? (The ecosystem)

- Step-by-Step Installation on Windows (Using Anaconda, the recommended method)

- A Complete Code Example (Named Entity Recognition)

- How to Use the Model for Prediction

- Key CRF Features & Hyperparameters to Tune

- Troubleshooting Common Issues

What is a CRF? (The Simple Version)

Imagine you're trying to label words in a sentence. For example, in the sentence "Apple is looking at buying a U.K. startup", you want to identify "Apple" as an organization (ORG) and "U.K." as a location (LOC).

A simple model might look at the word "Apple" and say, "It starts with a capital letter, so it's likely a named entity." This is a good start, but it's not enough.

A Conditional Random Field (CRF) is a more advanced model that looks at the entire sentence and the relationships between words to make a better decision.

- It considers not just the word "Apple" but also the words around it ("is", "looking", "at").

- It learns patterns, like: "A word that comes after 'the' is likely not the start of a named entity," or "A word that comes after a company name is often a verb."

By considering this context, a CRF can make much more accurate and consistent predictions than a simpler model.
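To make the role of context concrete, here is a minimal, self-contained sketch of the decoding step a linear-chain CRF performs. The scores are made-up numbers chosen for illustration (they are not learned weights), and `viterbi` is a hypothetical helper name. A real library such as sklearn-crfsuite learns its scores from data and runs this kind of search internally.

```python
# Toy Viterbi decoding over hand-picked scores (illustrative, not learned).
LABELS = ["O", "B-ORG", "I-ORG"]
TOKENS = ["Apple", "Inc", "grew"]

# EMISSION[i][label]: how well a label fits token i when viewed in isolation.
EMISSION = [
    {"O": 0.2, "B-ORG": 1.0, "I-ORG": 0.1},
    {"O": 0.6, "B-ORG": 0.1, "I-ORG": 0.5},  # "Inc" alone looks slightly like "O"
    {"O": 1.0, "B-ORG": 0.0, "I-ORG": 0.0},
]

# TRANSITION[(prev, cur)]: how compatible two adjacent labels are.
TRANSITION = {(p, c): 0.0 for p in LABELS for c in LABELS}
TRANSITION[("B-ORG", "I-ORG")] = 1.0   # an organization name often continues
TRANSITION[("O", "I-ORG")] = -5.0      # I-ORG should not follow O in BIO tagging


def viterbi(emission, transition, labels):
    """Return the label sequence with the highest total score."""
    # best[i][label] = (score of the best path ending in label at i, previous label)
    best = [{lab: (emission[0][lab], None) for lab in labels}]
    for i in range(1, len(emission)):
        row = {}
        for cur in labels:
            prev = max(labels, key=lambda p: best[i - 1][p][0] + transition[(p, cur)])
            score = best[i - 1][prev][0] + transition[(prev, cur)] + emission[i][cur]
            row[cur] = (score, prev)
        best.append(row)
    # Walk back from the best final label.
    last = max(labels, key=lambda lab: best[-1][lab][0])
    path = [last]
    for i in range(len(emission) - 1, 0, -1):
        last = best[i][last][1]
        path.append(last)
    return path[::-1]


# Pick the best label for each token independently, ignoring its neighbors.
independent = [max(LABELS, key=lambda lab: scores[lab]) for scores in EMISSION]
# Pick the best label sequence as a whole.
joint = viterbi(EMISSION, TRANSITION, LABELS)

print("token-by-token  :", list(zip(TOKENS, independent)))
print("with transitions:", list(zip(TOKENS, joint)))
```

With these toy scores, labeling each token on its own tags "Inc" as O, while the joint search prefers B-ORG, I-ORG, O because the transition scores reward continuing the organization name.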

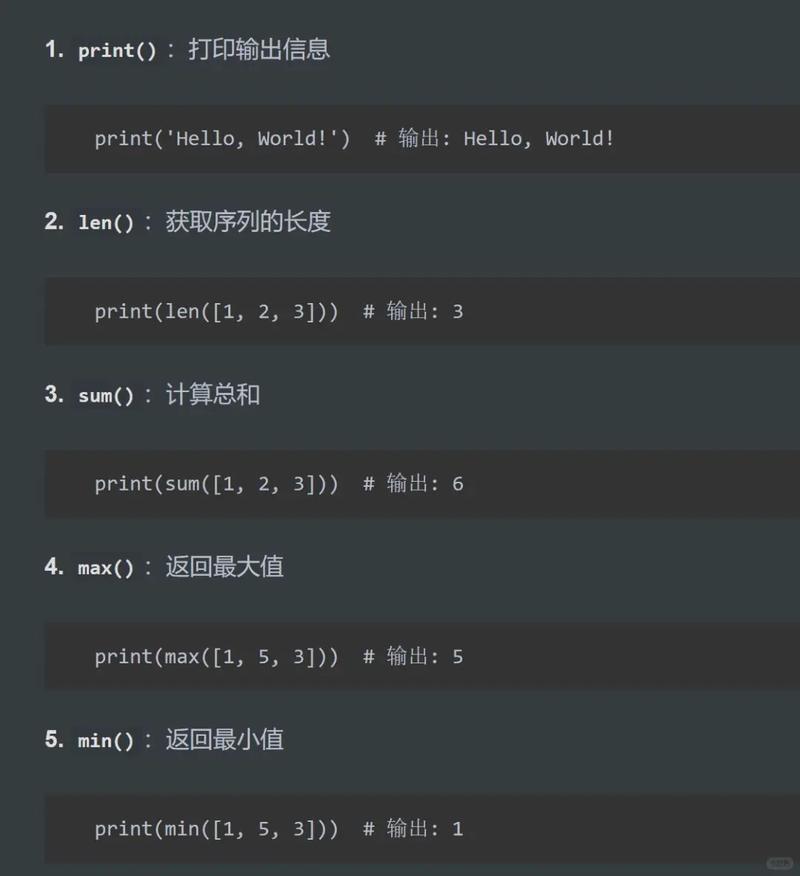

Why Use Python for CRFs?

Python is the dominant language for machine learning and NLP, and for good reason:

- Libraries: There are excellent, well-maintained libraries for CRFs.

- Ease of Use: The syntax is relatively easy to read and write.

- Ecosystem: It seamlessly integrates with other data science tools like Pandas, NumPy, and Scikit-learn.

A widely used library for CRFs in Python is sklearn-crfsuite, a thin wrapper around python-crfsuite (CRFsuite). It provides a familiar Scikit-learn-like interface, making it easy to integrate into existing projects.

Step-by-Step Installation on Windows

The easiest and most robust way to manage Python environments on Windows is with Anaconda.

Step 1: Install Anaconda

If you don't have it, download and install the latest Anaconda installer for Windows from the official website. It's a graphical installer, so just follow the prompts. You can leave the "Add Anaconda to my PATH environment variable" box unchecked: the installer itself marks that option as not recommended, and the Anaconda Prompt used in the steps below works without it.

Step 2: Create a Dedicated Environment

It's best practice to create a separate environment for each project to avoid dependency conflicts.

- Open the Anaconda Prompt. You can find this in your Start Menu.

- Create a new environment for your CRF project. Let's call it crf_project and use Python 3.9.

conda create -n crf_project python=3.9

- Activate the environment. You'll see the name of the environment in your prompt.

conda activate crf_project

Step 3: Install the CRF Library

Now, with your environment active, install the necessary libraries. sklearn-crfsuite is the main one. We'll also install scikit-learn for data splitting and evaluation, and numpy which is a dependency.

pip install sklearn-crfsuite

pip install scikit-learn

pip install numpy

That's it! Your Windows environment is now ready for CRF modeling.
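If you want to confirm that the packages landed in the active environment, a quick check like the following can help. This is a minimal sketch that relies only on the standard library's importlib.metadata; `installed_versions` is a hypothetical helper name, and the package list simply mirrors the pip commands above.

```python
# Report installed versions of the packages this guide relies on.
from importlib import metadata


def installed_versions(package_names):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in package_names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions(
    ["sklearn-crfsuite", "scikit-learn", "numpy"]
).items():
    print(f"{name:20} {version or 'NOT INSTALLED'}")
```

Run it with the crf_project environment active. Any line that shows NOT INSTALLED means the corresponding pip command needs to be repeated in that environment.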

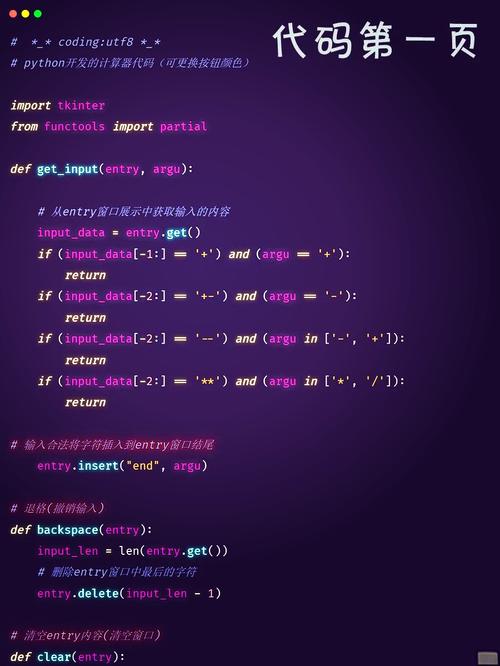

A Complete Code Example (Named Entity Recognition)

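Let's build a model to identify Named Entities (Persons, Organizations, Locations) in sentences. We'll use a tiny, hand-written dataset of four sentences for demonstration, which is enough to show the workflow but far too small to train a useful model.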

The Code

import sklearn_crfsuite

from sklearn_crfsuite import metrics

from sklearn.model_selection import train_test_split

import numpy as np

# --- 1. Sample Data ---

# Each sentence is a list of tuples (word, pos_tag, chunk_tag, ner_tag)

# For simplicity, we'll use a small dataset. In a real project, you'd use a larger one.

sentences = [
    [
        ("Apple", "NNP", "B-NP", "B-ORG"),
        ("is", "VBZ", "B-VP", "O"),
        ("looking", "VBG", "I-VP", "O"),
        ("at", "IN", "B-PP", "O"),
        ("buying", "VBG", "I-PP", "O"),
        ("a", "DT", "I-PP", "O"),
        ("U.K.", "NNP", "I-PP", "B-LOC"),
        ("startup", "NN", "I-PP", "O"),
    ],
    [
        # San Francisco is a city, so it is labeled LOC (not PER)
        ("San", "NNP", "B-NP", "B-LOC"),
        ("Francisco", "NNP", "I-NP", "I-LOC"),
        ("is", "VBZ", "B-VP", "O"),
        ("a", "DT", "B-NP", "O"),
        ("big", "JJ", "I-NP", "O"),
        ("city", "NN", "I-NP", "O"),
    ],
    [
        ("Google", "NNP", "B-NP", "B-ORG"),
        ("was", "VBD", "B-VP", "O"),
        ("founded", "VBN", "I-VP", "O"),
        ("in", "IN", "B-PP", "O"),
        ("Mountain", "NNP", "B-NP", "B-LOC"),
        ("View", "NNP", "I-NP", "I-LOC"),
    ],
    [
        ("Elon", "NNP", "B-NP", "B-PER"),
        ("Musk", "NNP", "I-NP", "I-PER"),
        ("works", "VBZ", "B-VP", "O"),
        ("at", "IN", "B-PP", "O"),
        ("Tesla", "NNP", "I-PP", "B-ORG"),
        (".", ".", "O", "O"),
    ],
]

# --- 2. Feature Extraction ---

# This is the most critical part. We define what features the CRF can use.

def word2features(sent, i):
    word = sent[i][0]
    postag = sent[i][1]

    features = {
        'bias': 1.0,
        'word.lower()': word.lower(),
        'word[-3:]': word[-3:],
        'word[-2:]': word[-2:],
        'word.isupper()': word.isupper(),
        'word.istitle()': word.istitle(),
        'word.isdigit()': word.isdigit(),
        'postag': postag,
        'postag[:2]': postag[:2],
    }

    # Features for the previous word
    if i > 0:
        prev_word = sent[i-1][0]
        prev_postag = sent[i-1][1]
        features.update({
            'prev_word.lower()': prev_word.lower(),
            'prev_word.istitle()': prev_word.istitle(),
            'prev_postag': prev_postag,
            'prev_postag[:2]': prev_postag[:2],
        })
    else:
        features['BOS'] = True  # Beginning of sentence

    # Features for the next word
    if i < len(sent) - 1:
        next_word = sent[i+1][0]
        next_postag = sent[i+1][1]
        features.update({
            'next_word.lower()': next_word.lower(),
            'next_word.istitle()': next_word.istitle(),
            'next_postag': next_postag,
            'next_postag[:2]': next_postag[:2],
        })
    else:
        features['EOS'] = True  # End of sentence

    return features


def sent2features(sent):
    return [word2features(sent, i) for i in range(len(sent))]


def sent2labels(sent):
    return [label for token, postag, chunk, label in sent]


def sent2tokens(sent):
    return [token for token, postag, chunk, label in sent]

# --- 3. Prepare Data ---

X = [sent2features(s) for s in sentences]

y = [sent2labels(s) for s in sentences]

# Split data into training and testing sets

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# --- 4. Train the CRF Model ---

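crf = sklearn_crfsuite.CRF(
    algorithm='lbfgs',
    c1=0.1,
    c2=0.1,
    max_iterations=100,
    all_possible_transitions=True
)

crf.fit(X_train, y_train)

# --- 5. Evaluate the Model ---

y_pred = crf.predict(X_test)

# Print the classification report
print("Classification Report:")
print(metrics.flat_classification_report(
    y_test, y_pred, labels=crf.classes_, digits=4
))

# You can also inspect the learned transitions.
# transition_features_ is a dict mapping (label_from, label_to) to a weight,
# so sort its items by weight instead of slicing the dict directly.
transitions = sorted(
    crf.transition_features_.items(), key=lambda item: item[1], reverse=True
)

print("\nTop likely transitions:")
for (label_from, label_to), weight in transitions[:10]:
    print(f"{label_from:6} -> {label_to:6} {weight:.4f}")

print("\nTop unlikely transitions:")
for (label_from, label_to), weight in transitions[-10:]:
    print(f"{label_from:6} -> {label_to:6} {weight:.4f}")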

How to Run the Code

- Make sure your crf_project conda environment is active in the Anaconda Prompt.

- Save the code above as a Python file (e.g., train_crf.py).

- Run it from the prompt:

python train_crf.py

You should see the classification report and the transition features printed to your console.

How to Use the Model for Prediction

Now that you have a trained crf object, you can use it to label new, unseen sentences.

# Let's create a new sentence to predict

new_sentence = [
    # The word and POS tag are used as features, so they must be real.
    # The chunk and NER tags are placeholders; feature extraction ignores them.
    ("Microsoft", "NNP", "B-NP", "O"),
    ("is", "VBZ", "B-VP", "O"),
    ("in", "IN", "B-PP", "O"),
    ("Seattle", "NNP", "I-PP", "O")
]

# You MUST apply the same feature extraction function

new_sentence_features = [sent2features(new_sentence)]

# Predict the labels

predicted_labels = crf.predict(new_sentence_features)

# The output is a list of lists, so we take the first one

predicted_labels = predicted_labels[0]

# Print the results

print("\n--- Prediction on New Sentence ---")

for token, label in zip(sent2tokens(new_sentence), predicted_labels):
    print(f"{token:15} -> {label}")

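Example Output (with only a handful of training sentences, your predictions may differ):

--- Prediction on New Sentence ---

Microsoft       -> B-ORG

is              -> O

in              -> O

Seattle         -> B-LOC

If you get this output, the model has labeled "Microsoft" as an organization and "Seattle" as a location even though neither word appears in the training data. It can do so only through features such as capitalization, POS tags, and neighboring words (here, the preceding "in"). This is the power of context!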
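Once you have predicted labels, you will often want whole entities rather than per-token tags. The following is a minimal sketch of one way to group BIO labels into entity spans. `bio_to_spans` is a hypothetical helper written for this guide, not part of sklearn-crfsuite, and it treats a stray I- tag as the start of a new entity, which is only one possible convention.

```python
def bio_to_spans(tokens, labels):
    """Group BIO labels into (entity_text, entity_type) pairs."""
    spans = []
    current_tokens, current_type = [], None

    def flush():
        # Close the entity in progress, if there is one.
        nonlocal current_tokens, current_type
        if current_tokens:
            spans.append((" ".join(current_tokens), current_type))
        current_tokens, current_type = [], None

    for token, label in zip(tokens, labels):
        prefix, _, entity_type = label.partition("-")
        if prefix == "I" and entity_type == current_type:
            current_tokens.append(token)   # continue the open entity
        elif prefix in ("B", "I"):
            flush()                        # start a new entity (stray I- acts like B-)
            current_tokens, current_type = [token], entity_type
        else:
            flush()                        # "O" closes any open entity
    flush()
    return spans


# Gold labels taken from the training data above, used here only as sample input.
tokens = ["Google", "was", "founded", "in", "Mountain", "View"]
labels = ["B-ORG", "O", "O", "O", "B-LOC", "I-LOC"]
print(bio_to_spans(tokens, labels))
# prints [('Google', 'ORG'), ('Mountain View', 'LOC')]
```

In the prediction script you would call it as bio_to_spans(sent2tokens(new_sentence), predicted_labels).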

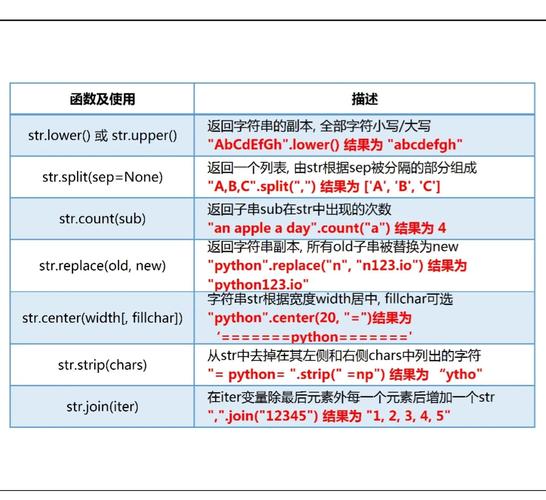

Key CRF Features & Hyperparameters to Tune

The performance of your CRF heavily depends on the features you provide and the hyperparameters you set.

Features (word2features function)

- Word-level features: word.lower(), word[-3:] (suffix), word.isupper(), word.istitle().

- Contextual features: prev_word.lower(), next_word.lower(). These are crucial for CRFs.

- Part-of-Speech (POS) tags: postag. These are very powerful features. If you don't have them pre-labeled, you can use a POS-tagger library like spaCy or NLTK to generate them first.
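As an illustration of how the word-level feature set might be extended, the sketch below adds a "word shape" feature, which is commonly used for NER. Both `word_shape` and `extra_features` are hypothetical helpers that are not part of the original example. To try them, you could merge the returned dict into the features dict inside word2features.

```python
import re


def word_shape(word):
    """Collapse a word into a coarse shape, e.g. 'Apple' -> 'Xx', 'U.K.' -> 'X.X.'."""
    shape = re.sub(r"[A-Z]", "X", word)    # uppercase letters
    shape = re.sub(r"[a-z]", "x", shape)   # lowercase letters
    shape = re.sub(r"[0-9]", "d", shape)   # digits
    # Collapse runs of the same symbol so long and short words share a shape.
    return re.sub(r"(.)\1+", r"\1", shape)


def extra_features(word):
    """Additional word-level features (an assumption, not from the original code)."""
    return {
        "word.shape": word_shape(word),
        "word[:2]": word[:2],              # short prefix
        "word.has_hyphen": "-" in word,
    }


for example in ["Apple", "U.K.", "startup", "2024"]:
    print(f"{example:10} {extra_features(example)}")
```

Shape features let the model generalize from "Apple" to an unseen capitalized word such as "Microsoft", since both collapse to the same shape.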

Hyperparameters (in the CRF constructor)

- algorithm: The optimization algorithm. 'lbfgs' is a good default. 'l2sgd' is another option.

- c1: L1 regularization coefficient. It controls the sparsity of the feature weights. A higher value can lead to fewer features being used.

- c2: L2 regularization coefficient. It controls the magnitude of the feature weights.

- max_iterations: The maximum number of iterations for the optimizer.

- all_possible_transitions: If True, the model will consider all possible transitions between labels (e.g., from I-PER to B-ORG). If False, it will only consider transitions that appear in the training data. Setting this to True is often better for generalization.
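As a rough sketch of what c1 and c2 control: with the 'lbfgs' algorithm, training minimizes the negative conditional log-likelihood of the labeled sequences plus the two penalty terms. The notation below is introduced here for illustration (w is the vector of feature weights, and (x^(n), y^(n)) is the n-th training sentence with its labels). Constant factors may be defined slightly differently in a given implementation.

```latex
\min_{w} \; -\sum_{n=1}^{N} \log p\left(y^{(n)} \mid x^{(n)}; w\right)
\; + \; c_1 \lVert w \rVert_1
\; + \; c_2 \lVert w \rVert_2^2
```

The L1 term pushes individual weights to exactly zero, which is why a larger c1 prunes features, while the L2 term shrinks all weights smoothly without eliminating them.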

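Tuning Tip: Use sklearn.model_selection.GridSearchCV (or RandomizedSearchCV) to find the best c1 and c2 values for your specific dataset, scoring with a sequence-aware metric such as metrics.flat_f1_score wrapped in sklearn.metrics.make_scorer. Cross-validation needs far more data than the four-sentence toy set used here.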

Troubleshooting Common Issues

- ModuleNotFoundError: No module named 'sklearn_crfsuite'

  - Cause: You are trying to run the code in a Python environment where the library is not installed.

  - Solution: Make sure your Anaconda Prompt shows (crf_project) at the beginning of the line. If not, activate it with conda activate crf_project. Then, run pip install sklearn-crfsuite again.

- ValueError: could not convert string to float: 'Apple'

  - Cause: This error happens when you accidentally pass raw text (strings) to the CRF model's fit method. For each sentence, the fit method expects a list of feature dictionaries (whose values can be strings, numbers, or booleans), not a list of words.

  - Solution: Double-check that you are calling your feature extraction function (sent2features) before passing the data to crf.fit(X_train, y_train).

- Model is very slow to train

  - Cause: CRFs can be computationally intensive, especially on large datasets or with many features.

  - Solution:

    - Reduce the number of features.

    - Use a smaller subset of your data for initial testing.

    - Experiment with different algorithm settings.

    - Increase regularization (c1, c2) to simplify the model.
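To catch the raw-text mistake described above before training starts, you could run a small sanity check on X and y. This is a minimal sketch: `check_crf_input` is a hypothetical helper, and the shape it enforces (a list of sentences, each a list of feature dicts, with one label per token) is the one produced by sent2features and sent2labels in this guide.

```python
def check_crf_input(X, y):
    """Raise ValueError if X and y do not look like CRF training data.

    Expected: X is a list of sentences, each a list of feature dicts;
    y is a list of label lists with matching lengths.
    """
    if len(X) != len(y):
        raise ValueError(f"{len(X)} sentences in X but {len(y)} label lists in y")
    for n, (features, labels) in enumerate(zip(X, y)):
        if len(features) != len(labels):
            raise ValueError(
                f"sentence {n}: {len(features)} tokens but {len(labels)} labels"
            )
        for i, token_features in enumerate(features):
            if not isinstance(token_features, dict):
                raise ValueError(
                    f"sentence {n}, token {i}: expected a feature dict, "
                    f"got {type(token_features).__name__}"
                )


# Correctly prepared data passes silently.
check_crf_input([[{"bias": 1.0, "word.lower()": "apple"}]], [["B-ORG"]])

# Raw words instead of feature dicts are reported with their position.
try:
    check_crf_input([["Apple", "is"]], [["B-ORG", "O"]])
except ValueError as err:
    print("Problem found:", err)
```

Calling check_crf_input(X_train, y_train) just before crf.fit(X_train, y_train) gives a clearer message than a failure from inside the training code.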