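Of course! Python offers several ways to find similar words. NLTK (the Natural Language Toolkit) covers the dictionary-based approach through its WordNet interface, and libraries such as Gensim and sentence-transformers cover the embedding-based approaches. The best method depends on what you mean by "similar."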

Here’s a breakdown of the main approaches, from the simplest to the most advanced.

Using WordNet with synsets

This is the classic NLTK approach. "Similarity" here means words that are related by their meaning, such as synonyms, hyponyms (more specific terms), or hypernyms (more general terms).

How it works: NLTK interfaces with WordNet, a large lexical database of English. Words are grouped into sets of cognitive synonyms called synsets, and synsets are linked to one another by relations such as hypernymy ("is a type of"). You find similar words by reading a synset's lemmas or by following these links.

Example: Finding Synonyms and Related Words

Let's find words similar to "car".

```python
import nltk
from nltk.corpus import wordnet

# You might need to download these resources first:
# nltk.download('wordnet')
# nltk.download('omw-1.4')  # Open Multilingual Wordnet

# Get all synsets for the word "car"
car_synsets = wordnet.synsets("car")
print(f"Found {len(car_synsets)} synsets for 'car':")
for i, syn in enumerate(car_synsets):
    print(f"{i+1}. {syn.name()} - {syn.definition()}")

# --- Use the first synset, which is the most common sense ---
car_synset = car_synsets[0]

# Get all lemmas (words) in this synset
lemmas = car_synset.lemmas()
print("\n--- Synonyms (lemmas) from the first synset ---")
for lemma in lemmas:
    print(lemma.name())  # e.g. 'car', 'auto', 'automobile', ...

# Get hyponyms (more specific words)
hyponyms = car_synset.hyponyms()
print("\n--- Hyponyms (more specific words) ---")
for syn in hyponyms[:5]:  # Print first 5 for brevity
    # syn.name() is an ID like 'name.n.01'; print the synset's first lemma instead
    print(syn.lemmas()[0].name().replace('_', ' '))

# Get hypernyms (more general words)
hypernyms = car_synset.hypernyms()
print("\n--- Hypernyms (more general words) ---")
for syn in hypernyms:
    print(syn.lemmas()[0].name().replace('_', ' '))
```
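The hierarchy can be walked further than one level. NLTK offers `Synset.closure` for this (for example `car_synset.closure(lambda s: s.hyponyms())`). The sketch below shows the idea in plain Python on a small, made-up hierarchy: `HYPONYMS` and `all_hyponyms` are hypothetical names and the data is not WordNet's. It follows the hyponym relation breadth-first until no new concepts appear.

```python
from collections import deque

# Hypothetical toy hierarchy (NOT real WordNet data): each concept maps
# to its direct hyponyms, i.e. its more specific concepts.
HYPONYMS = {
    "vehicle": ["car", "bus"],
    "car": ["cab", "convertible"],
    "bus": ["minibus"],
}


def all_hyponyms(concept):
    """Return every concept below `concept`, in breadth-first order."""
    found = []
    seen = set()
    queue = deque(HYPONYMS.get(concept, []))
    while queue:
        node = queue.popleft()
        if node in seen:  # guard against revisiting shared descendants
            continue
        seen.add(node)
        found.append(node)
        # Concepts without an entry have no hyponyms of their own
        queue.extend(HYPONYMS.get(node, []))
    return found


print(all_hyponyms("vehicle"))  # ['car', 'bus', 'cab', 'convertible', 'minibus']
```

Against real WordNet data, the same traversal collects every specific kind of car, not just the direct hyponyms printed above.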

Example: Calculating Semantic Similarity between Two Words

WordNet also lets you score how similar two word senses (synsets) are, based on the structure of the synset graph. The most common measure is path_similarity; others include wup_similarity (Wu-Palmer) and lch_similarity (Leacock-Chodorow).

```python
from nltk.corpus import wordnet

# Get the first (most common) noun synset for 'car' and 'bus'
car_synset = wordnet.synsets("car")[0]
bus_synset = wordnet.synsets("bus")[0]

# Calculate path similarity (a value between 0 and 1):
# the shorter the path between synsets in the hierarchy, the higher the score
similarity = car_synset.path_similarity(bus_synset)
print(f"Path similarity between 'car' and 'bus': {similarity:.2f}")

# Compare with a less similar word
apple_synset = wordnet.synsets("apple")[0]  # The fruit
similarity_apple = car_synset.path_similarity(apple_synset)
print(f"Path similarity between 'car' and 'apple': {similarity_apple:.2f}")
```
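path_similarity is defined as 1 / (length of the shortest is-a path + 1), so identical senses score 1.0 and the score shrinks as the path grows. The stdlib-only sketch below reproduces that formula on a toy hierarchy. `HYPERNYM` and the helper functions are hypothetical, and unlike real WordNet (where a synset can have several hypernyms) every concept here has exactly one parent, so the shortest path always runs through the lowest common ancestor. `rank_by_similarity` shows how such a score becomes a "most similar words" list.

```python
# Hypothetical toy hypernym tree (NOT real WordNet data):
# each concept maps to its single, more general parent.
HYPERNYM = {
    "object": "entity",
    "vehicle": "object",
    "food": "object",
    "fruit": "food",
    "car": "vehicle",
    "bus": "vehicle",
    "apple": "fruit",
}


def ancestors(concept):
    """Return [concept, parent, grandparent, ..., root]."""
    chain = [concept]
    while chain[-1] in HYPERNYM:
        chain.append(HYPERNYM[chain[-1]])
    return chain


def shortest_path_length(a, b):
    """Count is-a edges between a and b via their lowest common ancestor."""
    steps_from_b = {node: i for i, node in enumerate(ancestors(b))}
    for i, node in enumerate(ancestors(a)):
        if node in steps_from_b:  # first shared node = lowest common ancestor
            return i + steps_from_b[node]
    return None  # no shared ancestor (cannot happen here: all reach "entity")


def path_similarity(a, b):
    """Same formula as NLTK's path_similarity: 1 / (shortest path + 1)."""
    distance = shortest_path_length(a, b)
    return None if distance is None else 1 / (distance + 1)


def rank_by_similarity(target, candidates):
    """Sort candidates from most to least similar to target."""
    return sorted(candidates, key=lambda c: path_similarity(target, c), reverse=True)


print(f"{path_similarity('car', 'bus'):.2f}")    # 0.33 (car -> vehicle -> bus: 2 edges)
print(f"{path_similarity('car', 'apple'):.2f}")  # 0.17 (5 edges, via 'object')
print(rank_by_similarity("car", ["apple", "bus", "fruit"]))  # ['bus', 'fruit', 'apple']
```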

Pros:

- Based on a rich, human-curated semantic database.

- Good for finding synonyms and exploring word relationships (hypernyms/hyponyms).

Cons:

- Not very good with modern slang, new words, or context.

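- Similarity scores compare word senses (synsets) rather than words, so you must pick the right sense first, and they reflect only the hand-built hierarchy, not how words are actually used.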

Using Pre-trained Word Embeddings (Word2Vec, GloVe)

This is the modern, more powerful approach. "Similarity" here means words that appear in similar contexts in a large corpus of text. This method captures nuanced semantic relationships (e.g., king - man + woman ≈ queen).

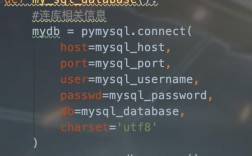

NLTK isn't designed for working with word embeddings; the usual library is Gensim, which you can use alongside NLTK. You'll also need a pre-trained model.

Example: Finding Most Similar Words using Gensim and NLTK

First, install gensim. The Gensim downloader used below fetches the model automatically on first use, so a manual download is optional.

```bash
pip install gensim

# Optional: download the Google News model manually (large, good results)
# wget -c "https://s3.amazonaws.com/dl4j-distribution/GoogleNews-vectors-negative300.bin.gz"
```

Now, let's use it in Python.

```python
import gensim.downloader as api

# Load a pre-trained model (downloaded automatically on the first run).
# The download is about 1.6 GB, and the loaded vectors need several GB of RAM
# (3 million words x 300 float32 dimensions is about 3.6 GB).
print("Loading pre-trained model...")
model = api.load("word2vec-google-news-300")
print("Model loaded.")

# Find the 10 most similar words to 'car'
similar_words = model.most_similar('car', topn=10)
print("\n--- Most similar words to 'car' (Word2Vec) ---")
for word, score in similar_words:
    print(f"{word}: {score:.4f}")

# You can also perform vector arithmetic.
# The classic example: king - man + woman ≈ queen
result = model.most_similar(positive=['woman', 'king'], negative=['man'], topn=1)
print("\n--- Result of 'woman' + 'king' - 'man' ---")
print(f"{result[0][0]}: {result[0][1]:.4f}")
```
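most_similar ranks vocabulary words by cosine similarity to a query vector; for analogies, the query combines the positive words' vectors minus the negative words'. The sketch below approximates that in plain Python with hypothetical 3-dimensional toy vectors, which makes the king/queen arithmetic easy to follow. `VECTORS`, `unit`, `cosine` and `most_similar` here are illustrative stand-ins, not gensim's code; gensim similarly averages unit-normalized vectors and excludes the input words, but details may differ.

```python
import math

# Hypothetical toy vectors (real Word2Vec vectors have 300 dimensions).
# Axes, loosely: [royalty, masculinity, "is a vehicle"].
VECTORS = {
    "king": [0.9, 0.8, 0.0],
    "queen": [0.9, -0.8, 0.0],
    "man": [0.1, 0.9, 0.0],
    "woman": [0.1, -0.9, 0.0],
    "car": [0.0, 0.1, 0.9],
    "truck": [0.0, 0.2, 0.8],
}


def unit(v):
    """Scale a non-zero vector to length 1."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine(a, b):
    """Cosine similarity: dot product of the two unit vectors."""
    return sum(x * y for x, y in zip(unit(a), unit(b)))


def most_similar(positive, negative=(), topn=1):
    """Rank words (excluding the inputs) by cosine to the combined query."""
    terms = [(unit(VECTORS[w]), 1.0) for w in positive]
    terms += [(unit(VECTORS[w]), -1.0) for w in negative]
    dims = len(terms[0][0])
    # Average of +unit(positive) and -unit(negative) vectors
    query = [sum(weight * vec[i] for vec, weight in terms) / len(terms) for i in range(dims)]
    used = set(positive) | set(negative)
    scores = [(w, cosine(query, v)) for w, v in VECTORS.items() if w not in used]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:topn]


print(most_similar(["car"])[0][0])  # truck
print(most_similar(["woman", "king"], negative=["man"])[0][0])  # queen
```

With a real model the mechanics are the same; only the vocabulary and dimensionality are far larger.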

Pros:

- Captures rich meaning learned from how words are used across a large corpus.

- Excellent for finding words used in similar contexts.

- Allows for powerful vector arithmetic.

Cons:

- Requires a large pre-trained model (big download, lots of RAM).

- Less interpretable than WordNet's definitions.

Using Contextual Embeddings (Transformers like BERT)

This is the state-of-the-art approach. Unlike Word2Vec where every word has one fixed vector, contextual embeddings give a different vector to a word based on the sentence it's in (e.g., the vector for "bank" in "river bank" is different from "bank account").

NLTK does not provide transformer models; the most popular library for this is Hugging Face Transformers.

Example: Finding Similar Sentences or Words with sentence-transformers

This library builds on Hugging Face Transformers and is well suited to finding similar text.

First, install the library:

```bash
pip install sentence-transformers
```

Now, let's find sentences similar to a query.

```python
from sentence_transformers import SentenceTransformer, util

# Load a pre-trained model
model = SentenceTransformer('all-MiniLM-L6-v2')

# Our query and a list of candidate sentences
query = "A car is driving down the street"
query_embedding = model.encode(query)

candidate_sentences = [
    "A vehicle is moving along the road.",
    "The man is eating a delicious apple.",
    "An automobile speeds down the highway.",
    "A person is reading a book in the library.",
]

# Encode the candidate sentences
candidate_embeddings = model.encode(candidate_sentences)

# Compute cosine similarity between the query and all candidates
# (a 1 x N matrix: one row for the single query)
cosine_scores = util.cos_sim(query_embedding, candidate_embeddings)

# Find the 3 most similar sentences
top_results = cosine_scores[0].topk(3)

print(f"Query: '{query}'\n")
print("--- Top 3 most similar sentences ---")
for score, idx in zip(top_results.values, top_results.indices):
    print(f"{candidate_sentences[int(idx)]} - Score: {score.item():.4f}")
```
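util.cos_sim returns a matrix whose entry [i][j] is the cosine similarity between the i-th query embedding and the j-th candidate embedding; with a single query there is one row, which is why the example reads cosine_scores[0] before calling topk. This plain-Python sketch mirrors that shape and the top-k step, using hypothetical helper names and toy 2-D vectors in place of real MiniLM embeddings.

```python
import heapq
import math


def unit(v):
    """Scale a non-zero vector to length 1."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cos_sim(a, b):
    """Pairwise cosine similarity matrix: result[i][j] compares a[i] with b[j]."""
    a_unit = [unit(v) for v in a]
    b_unit = [unit(v) for v in b]
    return [[sum(x * y for x, y in zip(u, w)) for w in b_unit] for u in a_unit]


def topk(row, k):
    """Return (score, index) pairs for the k largest scores in a row."""
    return heapq.nlargest(k, ((score, i) for i, score in enumerate(row)))


# Toy stand-ins for one query embedding and three candidate embeddings
query_vecs = [[1.0, 0.0]]
candidate_vecs = [[0.9, 0.1], [0.0, 1.0], [0.8, 0.3]]

scores = cos_sim(query_vecs, candidate_vecs)  # 1 row x 3 columns
for score, idx in topk(scores[0], 2):
    print(f"candidate {idx}: {score:.4f}")
```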

Pros:

- Usually the most accurate of the three methods at capturing meaning in context.

- Excellent for semantic search, question answering, and paraphrase detection.

Cons:

- Can be slower and more resource-intensive.

- Requires a more complex library setup.

Summary: Which one should you use?

| Method | Best For | Pros | Cons |
|---|---|---|---|
| NLTK WordNet | Finding synonyms, antonyms, hyponyms/hypernyms. Simple, human-readable definitions. | Easy to use, great for exploring word relationships. | Not context-aware, limited vocabulary. |
| Word Embeddings (Gensim) | Finding words used in similar contexts, semantic analogy tasks. | Captures deep meaning, allows vector math. | Needs large models, less interpretable. |
| Contextual Embeddings (Transformers) | High-accuracy semantic search, understanding meaning in context. | Most accurate, handles context and nuance. | Slower, more complex setup, heavier models. |

For most "find similar words" tasks in Python, starting with Word2Vec/Gensim is the best modern choice. If you need to understand relationships like "is a type of," WordNet is still very useful. For the highest accuracy with sentences or complex queries, use Transformers.