Of course! Using Python with Kubernetes is a very common and powerful combination. You can use Python to automate cluster management, deploy applications, create custom controllers, and build developer tools.

Here's a comprehensive guide covering the main ways to interact with Kubernetes from Python.

The Official Kubernetes Client Library (client-python)

This is the most direct and powerful way to interact with the Kubernetes API. The client is generated from the Kubernetes OpenAPI (Swagger) specification, so it covers essentially every operation the API server exposes. Nearly anything kubectl does through the API can also be done programmatically.

Installation

First, install the library:

pip install kubernetes

Basic Concepts

The library works by:

- Loading a Configuration: It needs to know how to connect to your Kubernetes cluster (e.g., the URL, a certificate, a token). It can automatically find the configuration from your local kubeconfig file (the same one kubectl uses), which is the most common scenario.

- Creating an API Client: You instantiate a client object for each Kubernetes API group you want to interact with (e.g., CoreV1Api, AppsV1Api).

- Making API Calls: You use the client's methods to perform actions (e.g., list_namespaced_pod, create_namespaced_deployment).
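The configuration step above can be sketched as a small helper that decides which loader to call. This is a minimal sketch, not part of the library: `pick_config_source` and `load_config` are hypothetical names, and the check relies on the `KUBERNETES_SERVICE_HOST` and `KUBERNETES_SERVICE_PORT` environment variables that Kubernetes normally sets inside a Pod.

```python
import os


def pick_config_source(env=None):
    """Return "incluster" when the process appears to run inside a Pod, else "kubeconfig"."""
    env = os.environ if env is None else env
    # Kubernetes normally injects these two variables into containers it starts.
    if env.get("KUBERNETES_SERVICE_HOST") and env.get("KUBERNETES_SERVICE_PORT"):
        return "incluster"
    return "kubeconfig"


def load_config():
    """Load cluster credentials from whichever source applies (hypothetical helper)."""
    # Imported lazily so pick_config_source() stays usable without the client installed.
    from kubernetes import config

    if pick_config_source() == "incluster":
        config.load_incluster_config()
    else:
        config.load_kube_config()
```

Calling `load_config()` once at start-up, before creating any API client object, should be enough for both local scripts and code running in the cluster.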

Example: Listing Pods

This is the "Hello, World!" of Kubernetes Python programming.

from kubernetes import client, config

# 1. Load the kubeconfig file (usually from ~/.kube/config).
# This works if you have a kubectl context set up.
try:
    config.load_kube_config()
except config.ConfigException:
    # No usable kubeconfig was found, so fall back to the in-cluster config.
    # This is common when running inside a Pod in the cluster itself.
    config.load_incluster_config()

# 2. Create an API client instance
v1 = client.CoreV1Api()

# 3. List the pods in all namespaces
print("Listing pods with their IPs:")
ret = v1.list_pod_for_all_namespaces(watch=False)
for item in ret.items:
    print(f"Namespace: {item.metadata.namespace}, Name: {item.metadata.name}, IP: {item.status.pod_ip}")
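The example above lists pods across every namespace. A variation that restricts the query to one namespace and, optionally, to a set of labels might look like the sketch below. `build_label_selector` and `list_pods` are hypothetical helper names; `list_namespaced_pod` and its `label_selector` argument come from the client library.

```python
def build_label_selector(labels):
    """Turn {"app": "nginx"} into the "app=nginx" selector string the API expects."""
    return ",".join(f"{key}={value}" for key, value in sorted(labels.items()))


def list_pods(namespace="default", labels=None):
    """Return (name, phase) pairs for the pods in one namespace."""
    # Imported lazily so build_label_selector() works without the client installed.
    from kubernetes import client, config

    config.load_kube_config()
    v1 = client.CoreV1Api()

    selector = build_label_selector(labels or {})
    # Only pass label_selector when there is something to filter on.
    kwargs = {"label_selector": selector} if selector else {}
    pods = v1.list_namespaced_pod(namespace, **kwargs)
    return [(pod.metadata.name, pod.status.phase) for pod in pods.items]
```

For instance, `list_pods("default", {"app": "nginx"})` would return only the pods labeled `app=nginx` in the default namespace.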

Example: Creating a Deployment

This example shows how to create a simple NGINX deployment.

from kubernetes import client, config

# Load config (same as before)
config.load_kube_config()

apps_v1 = client.AppsV1Api()

# Define the deployment manifest
# You can also load this from a YAML file using yaml.safe_load()
deployment_manifest = {
    'apiVersion': 'apps/v1',
    'kind': 'Deployment',
    'metadata': {
        'name': 'nginx-deployment-python',
        'labels': {
            'app': 'nginx'
        }
    },
    'spec': {
        'replicas': 3,
        'selector': {
            'matchLabels': {
                'app': 'nginx'
            }
        },
        'template': {
            'metadata': {
                'labels': {
                    'app': 'nginx'
                }
            },
            'spec': {
                'containers': [{
                    'name': 'nginx',
                    'image': 'nginx:1.21.0',
                    'ports': [{
                        'containerPort': 80
                    }]
                }]
            }
        }
    }
}

# Create the deployment
try:
    resp = apps_v1.create_namespaced_deployment(
        body=deployment_manifest,
        namespace="default"
    )
    # resp is the created Deployment object as returned by the API server
    print(f"Deployment created. Name: {resp.metadata.name}")
except client.exceptions.ApiException as e:
    print(f"Exception when calling AppsV1Api->create_namespaced_deployment: {e}")
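In the manifest above, the selector's matchLabels must agree with the Pod template's labels, otherwise the API server rejects the Deployment. One way to keep them in sync is to generate the dictionary from a function. The following is a minimal sketch; `make_deployment_manifest` and its parameters are hypothetical, not part of the client library.

```python
def make_deployment_manifest(name, image, replicas=1, port=80, labels=None):
    """Build a Deployment manifest whose selector always matches its Pod template."""
    labels = dict(labels or {"app": name})
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        # Each section gets its own copy so later edits to one do not leak into another.
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


manifest = make_deployment_manifest("nginx", "nginx:1.21.0", replicas=3)
```

The resulting `manifest` can be passed as the `body` argument of `create_namespaced_deployment`, exactly like the hand-written dictionary.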

Pro Tip: Use a YAML Manifest File

Instead of building the dictionary manually, it's much cleaner to define your Kubernetes resources in a YAML file and load them.

import yaml
from kubernetes import client, config

config.load_kube_config()

v1 = client.CoreV1Api()

# Load a service manifest from a file
with open("my-service.yaml", "r") as f:
    service_manifest = yaml.safe_load(f)

# Create the service
try:
    resp = v1.create_namespaced_service(
        body=service_manifest,
        namespace="default"
    )
    print(f"Service created. Name: {resp.metadata.name}")
except client.exceptions.ApiException as e:
    print(f"Exception when creating service: {e}")
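The snippet above hard-codes create_namespaced_service, so it only works when the file contains a Service. If the file may hold other kinds, one option is to pick the API class and method from the manifest's `kind` field. This is a sketch under assumptions: the `CREATORS` table and both function names are hypothetical, and only three kinds are mapped for illustration.

```python
# Maps a manifest "kind" to (API class name, create method name) in kubernetes.client.
CREATORS = {
    "Deployment": ("AppsV1Api", "create_namespaced_deployment"),
    "Service": ("CoreV1Api", "create_namespaced_service"),
    "ConfigMap": ("CoreV1Api", "create_namespaced_config_map"),
}


def resolve_creator(manifest):
    """Return the (API class name, method name) pair for a manifest's kind."""
    kind = manifest.get("kind")
    if kind not in CREATORS:
        raise ValueError(f"unsupported kind: {kind!r}")
    return CREATORS[kind]


def create_from_manifest(manifest, namespace="default"):
    """Create the resource described by `manifest` (config must already be loaded)."""
    # Imported lazily so resolve_creator() works without the client installed.
    from kubernetes import client

    api_name, method_name = resolve_creator(manifest)
    api = getattr(client, api_name)()
    return getattr(api, method_name)(namespace=namespace, body=manifest)
```

With this in place, the loaded `service_manifest` could be passed to `create_from_manifest` instead of calling the Service-specific method directly.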

The kubectl Wrapper (subprocess)

If you're more comfortable with kubectl or have complex, multi-file YAML configurations, you can simply execute kubectl commands from Python using the subprocess module.

Installation

You just need kubectl installed on your system and available in your PATH. No special Python library is needed for this approach.

Example: Executing kubectl get pods

import subprocess

def get_pods(namespace="default"):
    command = ["kubectl", "get", "pods", "-n", namespace]
    try:
        # Execute the command and capture the output
        result = subprocess.run(command, check=True, capture_output=True, text=True)
        print("Successfully got pods:")
        print(result.stdout)
    except subprocess.CalledProcessError as e:
        print(f"Error executing command: {e}")
        print(f"Stderr: {e.stderr}")

# Call the function
get_pods(namespace="kube-system")
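A variation on the example above asks kubectl for JSON with `-o json` and parses that, which tends to be less fragile than reading the human-oriented table. This is a minimal sketch; `pod_phases_from_json` and `get_pod_phases` are hypothetical names.

```python
import json
import subprocess


def pod_phases_from_json(text):
    """Map each pod name to its phase, given the output of `kubectl get pods -o json`."""
    data = json.loads(text)
    return {
        item["metadata"]["name"]: item.get("status", {}).get("phase", "Unknown")
        for item in data.get("items", [])
    }


def get_pod_phases(namespace="default"):
    """Run kubectl and return {pod name: phase}; raises CalledProcessError on failure."""
    command = ["kubectl", "get", "pods", "-n", namespace, "-o", "json"]
    result = subprocess.run(command, check=True, capture_output=True, text=True)
    return pod_phases_from_json(result.stdout)
```

Because the parsing lives in its own function, it can be exercised with a saved JSON document without a cluster or kubectl being available.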

When to use this method:

- Quick & Simple Scripts: For simple automation where you just need to run one or two kubectl commands.

- Complex Manifests: When dealing with Helm charts or multi-file kubectl apply scenarios that are hard to manage programmatically with the client library.

When to avoid it:

- Performance: Spawning a new process for every command is slower than using the client library.

- Error Handling: Parsing the text output of kubectl for errors is more brittle than checking for API exceptions.

- Platform Dependency: Your script will only work on systems where kubectl is installed.

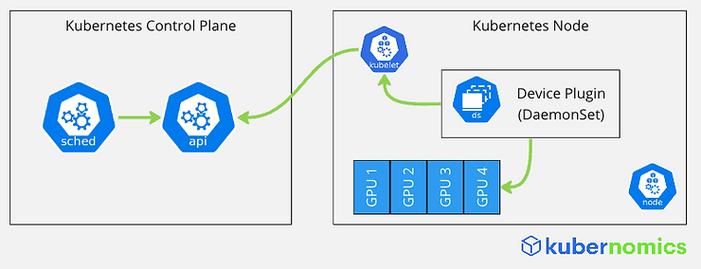

Building Custom Controllers (Kubebuilder or Operator Framework)

For advanced use cases, you might want to build a custom controller. A controller is a loop that watches the state of your cluster and takes action to move the current state towards the desired state. The best-known example is the Deployment controller, which works (through ReplicaSets) to keep the desired number of Pod replicas running.

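Kubebuilder and the Operator Framework (with its Operator SDK) are the best-known toolkits for building operators, but they primarily target Go. In Python, you typically build a controller directly on top of client-python, or use a Python operator framework such as Kopf.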

This is a complex topic, but the basic idea is:

- Watch Resources: Your Python application uses the client-python library to watch for changes to a Custom Resource (CR) you define (e.g., MyDatabase).

- Reconcile Loop: When a change is detected, your "reconciler" logic runs.

- Act on the Cluster: The reconciler uses the client-python library to create, update, or delete other Kubernetes resources (like Deployments, Services, Secrets) to fulfill the desired state defined in your CR.
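The reconcile step in the list above can be illustrated without a cluster as a pure function that compares desired and observed state and returns the actions to take. This is a simplified sketch, not how any particular framework implements it: `reconcile` and the resource names are hypothetical. In a real controller, the observed state would come from client-python list or watch calls, and each action would become an API call.

```python
def reconcile(desired, observed):
    """Return the actions needed to make `observed` match `desired`.

    Both arguments map a resource name to its spec (any comparable value).
    """
    actions = []
    for name, spec in desired.items():
        if name not in observed:
            actions.append(("create", name))
        elif observed[name] != spec:
            actions.append(("update", name))
    # Anything running that is no longer wanted gets removed.
    for name in observed:
        if name not in desired:
            actions.append(("delete", name))
    return actions


desired = {"db-deployment": {"replicas": 3}, "db-service": {"port": 5432}}
observed = {"db-deployment": {"replicas": 1}, "old-secret": {}}
actions = reconcile(desired, observed)
```

Running the function again after the actions have been applied should yield an empty list, which is the idempotent behavior a reconcile loop aims for.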

This is the most "Kubernetes-native" way to build automation and is used for managing complex applications like databases, message queues, etc.

Summary and Comparison

| Method | Best For | Pros | Cons |
|---|---|---|---|
| client-python | Most use cases. Programmatic control, building tools, CI/CD pipelines, custom controllers. | Direct API access; high performance; rich error handling (API exceptions); Pythonic and powerful | Steeper learning curve than the kubectl wrapper; requires understanding Kubernetes API objects |
| kubectl Wrapper | Simple scripts, quick automation, or when dealing with existing complex kubectl commands. | Very easy if you know kubectl; leverages existing YAML/Helm charts directly | Slow (spawns processes); brittle text parsing for errors; platform-dependent (needs kubectl) |
| Custom Operator | Advanced automation of complex applications, managing stateful services. | Deeply integrated with Kubernetes; declarative model; can handle complex logic and failure scenarios | Very high complexity; overkill for simple tasks; requires understanding controllers and CRDs |

Getting Started: Your First Python-Kubernetes Project

- Install Prerequisites:

  - Python 3.6+

  - A running Kubernetes cluster (e.g., Minikube, Docker Desktop, GKE, EKS).

  - kubectl configured to talk to your cluster (kubectl get nodes should work).

- Create a project directory:

  mkdir k8s-python-project
  cd k8s-python-project

- Install the library:

  pip install kubernetes

- Create a file list_pods.py with the first example code above.

- Run it:

  python list_pods.py

You should see a list of the pods running in your cluster! This is the foundation for all your Kubernetes automation with Python.