This guide covers StandardScaler in Python: its purpose, how it works, when to use it, and code examples.

What is StandardScaler?

StandardScaler is a preprocessing tool from the machine learning library scikit-learn. Its primary purpose is to standardize the features of a dataset.

Standardization is a type of feature scaling that transforms each feature (column) so that it has a mean of 0 and a standard deviation of 1.

The formula for standardizing a single feature value x is:

$$ z = \frac{(x - \mu)}{\sigma} $$

Where:

- z is the standardized value.
- x is the original value.
- μ (mu) is the mean of the feature's column.
- σ (sigma) is the standard deviation of the feature's column.
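As a quick check of the formula, the sketch below applies it by hand with NumPy and compares the result with StandardScaler. The single-column array is a made-up example, not data from this guide. Note that StandardScaler uses the population standard deviation (`ddof=0`), which is also NumPy's default.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical single-feature column, chosen only for illustration
x = np.array([[1.0], [2.0], [3.0], [4.0], [5.0]])

mu = x.mean(axis=0)          # column mean
sigma = x.std(axis=0)        # population standard deviation (ddof=0)
z_manual = (x - mu) / sigma  # z = (x - mu) / sigma

z_sklearn = StandardScaler().fit_transform(x)

print(np.allclose(z_manual, z_sklearn))  # True
```

If you compute the standard deviation with pandas instead, keep in mind that `Series.std()` defaults to `ddof=1`, so the values will differ slightly unless you pass `ddof=0`.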

Why is Standardization Important?

Many machine learning algorithms perform better or converge faster when the input features are on a similar scale. Here’s why:

- Algorithms that use Gradient Descent: Models such as Linear Regression, Logistic Regression, and Neural Networks are often trained with gradient descent. If features are on vastly different scales (e.g., age from 20-70 and income from $30,000-$150,000), gradient descent can take a long time to converge. Standardization makes the cost surface better conditioned, so the optimizer typically needs fewer steps.

- Algorithms that use Distance Metrics: Algorithms like K-Nearest Neighbors (KNN) and Support Vector Machines (SVM) with an RBF kernel rely on distances between data points. If one feature has a much larger range than the others, it dominates the distance calculation and makes the other features almost irrelevant.

- Principal Component Analysis (PCA): PCA is sensitive to the variances of the features. Standardizing gives every feature unit variance, so no feature dominates the principal components simply because of its units.
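The distance-metric point can be illustrated with a minimal sketch. The four customers below are hypothetical and were picked so that the effect is easy to see; `dist` is a small helper defined here, not a scikit-learn function.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical customers as [age, income]; values chosen for illustration only
X = np.array([
    [25, 50_000],
    [60, 51_000],   # very different age, similar income
    [26, 55_000],   # similar age, somewhat different income
    [45, 150_000],
], dtype=float)

def dist(a, b):
    """Euclidean distance between two rows."""
    return float(np.linalg.norm(a - b))

# On raw data the income column dominates, so customer 0 looks
# closer to customer 1 despite the 35-year age gap.
raw_01 = dist(X[0], X[1])
raw_02 = dist(X[0], X[2])
print(raw_01 < raw_02)  # True

# After standardization both features contribute on a comparable scale,
# and customer 0 is closer to customer 2.
Xs = StandardScaler().fit_transform(X)
scaled_01 = dist(Xs[0], Xs[1])
scaled_02 = dist(Xs[0], Xs[2])
print(scaled_02 < scaled_01)  # True
```

A nearest-neighbor model would therefore pick a different neighbor for customer 0 depending on whether the features were scaled.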

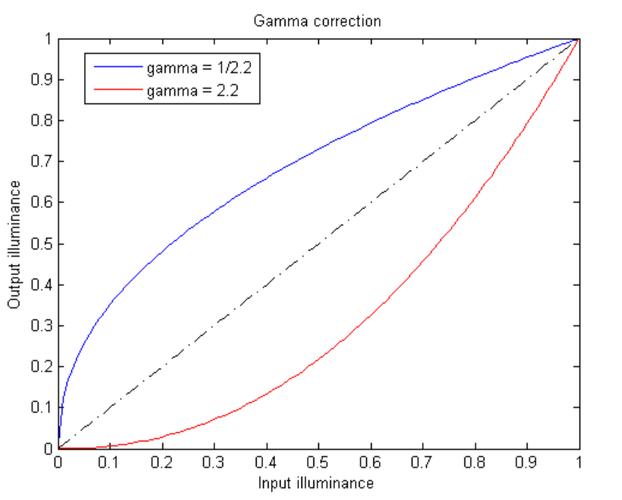

When to Use StandardScaler vs. MinMaxScaler

Both are scaling techniques, but they suit different scenarios.

| Feature | StandardScaler |

MinMaxScaler |

|---|---|---|

| Transformation | Rescales to a standard normal distribution (mean=0, std=1). | Rescales to a fixed range, typically [0, 1]. |

| Formula | z = (x - mean) / std_dev |

x_scaled = (x - min) / (max - min) |

| Effect of Outliers | Highly sensitive. A single outlier can drastically change the mean and standard deviation, compressing the rest of the data. | Sensitive. Outliers can squash the non-outlier values into a very small range. |

| Best For | Algorithms that assume a Gaussian (normal) distribution. Works well even if the data isn't perfectly normal. | Algorithms that do not make distributional assumptions, like K-Nearest Neighbors and Neural Networks. Useful when you need bounded features. |

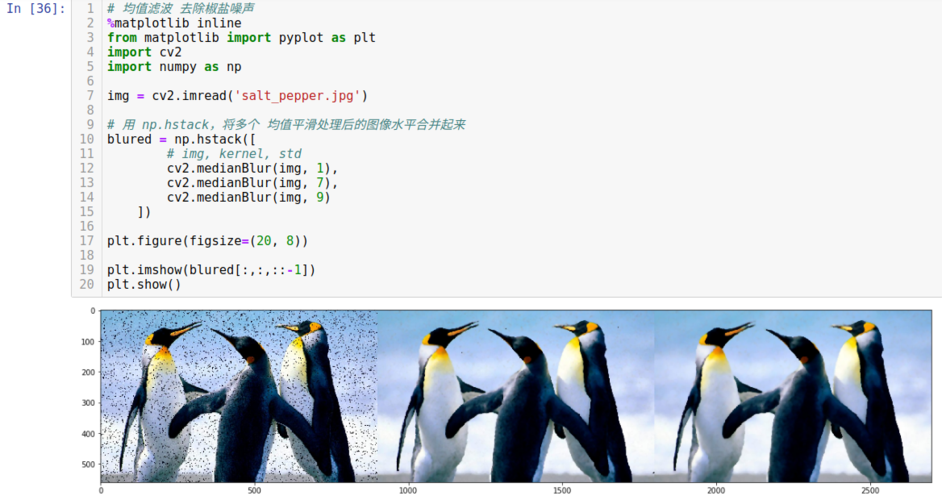

| Use Case | Linear/Logistic Regression, PCA, LDA. | Image processing (pixel values 0-255), Neural Networks, KNN. |

Rule of Thumb: If your algorithm is sensitive to the variance of the features (like PCA) or uses gradient descent, StandardScaler is often a good first choice. If your algorithm relies on bounded data or distance metrics and you don't have significant outliers, MinMaxScaler can be a good option.
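To see how outliers affect each scaler, here is a small sketch on a made-up feature with one extreme value. The array is hypothetical and only meant to show the general behavior, not a benchmark.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, StandardScaler

# Hypothetical feature: four ordinary values and one extreme outlier
x = np.array([[1.0], [2.0], [3.0], [4.0], [1000.0]])

x_std = StandardScaler().fit_transform(x)
x_mm = MinMaxScaler().fit_transform(x)

# Print both results side by side for inspection
print("StandardScaler:", x_std.ravel())
print("MinMaxScaler:  ", x_mm.ravel())

# Spread of the four non-outlier values after each transformation
inlier_spread_std = float(x_std[:4].max() - x_std[:4].min())
inlier_spread_mm = float(x_mm[:4].max() - x_mm[:4].min())
print(inlier_spread_std < 0.01, inlier_spread_mm < 0.01)  # True True
```

In both cases the four ordinary values end up almost indistinguishable. If your data contains outliers like this, neither scaler is a great fit as-is, and handling the outliers first is usually worth considering.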

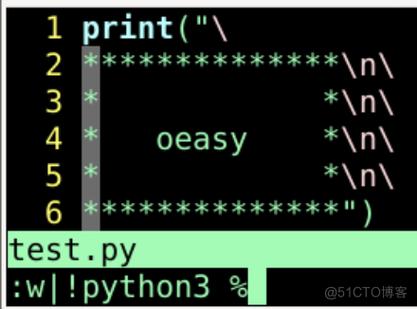

How to Use StandardScaler (Code Examples)

Let's walk through a complete workflow.

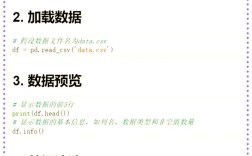

Step 1: Import Libraries

import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler
from sklearn.model_selection import train_test_split

Step 2: Create Sample Data

We'll create a simple dataset with two features on very different scales.

# Sample data: Age (in years) and Income (in dollars), on very different scales

data = {

'Age': [25, 30, 45, 50, 22, 35, 60, 28],

'Income': [50000, 60000, 120000, 150000, 45000, 80000, 180000, 55000],

'Purchased': [0, 1, 1, 1, 0, 1, 1, 0] # Target variable

}

df = pd.DataFrame(data)

print("Original Data:")

print(df)

Original Data:
   Age  Income  Purchased
0   25   50000          0
1   30   60000          1
2   45  120000          1
3   50  150000          1
4   22   45000          0
5   35   80000          1
6   60  180000          1
7   28   55000          0

Step 3: Separate Features and Target

It's important to only scale the features, not the target variable.

# Separate features (X) and target (y)
X = df[['Age', 'Income']]
y = df['Purchased']

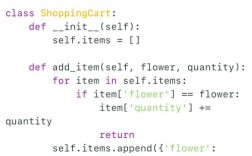

Step 4: Initialize and Fit the Scaler

The .fit() method calculates the mean and standard deviation for each feature in your training data. It is crucial to only fit the scaler on the training data to avoid data leakage from the test set.

# Initialize the scaler

scaler = StandardScaler()

# Fit the scaler on the training data and transform it

# We'll use all data for this simple example, but in a real project, you'd split first.

X_scaled = scaler.fit_transform(X)

# The output is a NumPy array

print("\nScaled Data (NumPy Array):")

print(X_scaled)

Output (rounded to three decimals here for readability; the actual print shows more digits):

Scaled Data (NumPy Array):
[[-0.944 -0.886]
 [-0.547 -0.678]
 [ 0.646  0.573]
 [ 1.044  1.199]
 [-1.183 -0.990]
 [-0.149 -0.261]
 [ 1.839  1.825]
 [-0.706 -0.782]]

Notice how the values in each column are now centered around 0.
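You can confirm this numerically. The sketch below rebuilds the same Age and Income columns, then inspects what `fit` learned and checks that each scaled column has mean 0 and standard deviation 1. It also uses `inverse_transform` to recover the original values.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Same feature columns as the sample data in this guide
X = pd.DataFrame({
    'Age': [25, 30, 45, 50, 22, 35, 60, 28],
    'Income': [50000, 60000, 120000, 150000, 45000, 80000, 180000, 55000],
})

scaler = StandardScaler()
X_scaled = scaler.fit_transform(X)

print(scaler.mean_)   # per-column means: 36.875 for Age, 92500.0 for Income
print(scaler.scale_)  # per-column population standard deviations

# Each scaled column has mean 0 and standard deviation 1 (up to float error)
print(np.allclose(X_scaled.mean(axis=0), 0.0))  # True
print(np.allclose(X_scaled.std(axis=0), 1.0))   # True

# inverse_transform maps scaled values back to the original units
X_restored = scaler.inverse_transform(X_scaled)
print(np.allclose(X_restored, X.to_numpy()))    # True
```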

Step 5: Convert Back to a DataFrame (Optional but Recommended)

It's much easier to work with a DataFrame because you can see the column names.

# Convert the scaled NumPy array back to a DataFrame

X_scaled_df = pd.DataFrame(X_scaled, columns=X.columns)

print("\nScaled Data (DataFrame):")

print(X_scaled_df)

Output (rounded to three decimals here for readability; the actual print shows more digits):

Scaled Data (DataFrame):
     Age  Income
0 -0.944  -0.886
1 -0.547  -0.678
2  0.646   0.573
3  1.044   1.199
4 -1.183  -0.990
5 -0.149  -0.261
6  1.839   1.825
7 -0.706  -0.782

Step 6: The Correct Workflow (Train/Test Split)

This is the most important part. You must fit the scaler on the training data and use that same fitted scaler to transform the test data.

# 1. Split the data first

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

print("\n--- Correct Workflow ---")

print(f"X_train shape: {X_train.shape}")

print(f"X_test shape: {X_test.shape}")

# 2. Initialize a new scaler

scaler_wf = StandardScaler()

# 3. Fit the scaler ONLY on the training data

scaler_wf.fit(X_train)

# 4. Transform both the training and testing data

X_train_scaled = scaler_wf.transform(X_train)

X_test_scaled = scaler_wf.transform(X_test)

print("\nScaled Training Data (first 3 rows):")

print(X_train_scaled[:3])

print("\nScaled Testing Data (first 3 rows):")

print(X_test_scaled[:3])

# You can check the parameters learned by the scaler

print("\nScaler Mean (learned from X_train):")

print(scaler_wf.mean_)

print("\nScaler Scale (Standard Deviation, learned from X_train):")

print(scaler_wf.scale_)
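One way to make this workflow harder to get wrong is to wrap the scaler and a model in a scikit-learn pipeline, so that `fit` only ever sees the training data. The sketch below is an illustration under a few assumptions that are not part of the walkthrough above: it uses LogisticRegression as the model, and it adds `stratify=y` to the split so that both classes appear in the tiny training set.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Same sample data as in this guide
df = pd.DataFrame({
    'Age': [25, 30, 45, 50, 22, 35, 60, 28],
    'Income': [50000, 60000, 120000, 150000, 45000, 80000, 180000, 55000],
    'Purchased': [0, 1, 1, 1, 0, 1, 1, 0],
})
X = df[['Age', 'Income']]
y = df['Purchased']

# stratify=y is an addition for this sketch (keeps both classes in each split)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# The pipeline fits the scaler on X_train only, then fits the model
model = make_pipeline(StandardScaler(), LogisticRegression())
model.fit(X_train, y_train)

# predict() reuses the training mean and scale to transform X_test
predictions = model.predict(X_test)
print(predictions)

# The scaler inside the pipeline learned its mean from X_train alone
fitted_scaler = model.named_steps['standardscaler']
print(np.allclose(fitted_scaler.mean_, X_train.mean().to_numpy()))  # True
```

The same pipeline object can be passed to cross-validation utilities, in which case the scaler is refit on the training portion of each fold.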

Key Takeaways and Best Practices

- Always Scale After Train/Test Split: This prevents information from your test set "leaking" into your training process, which would lead to an overly optimistic evaluation